OpenAI GPT-5.5 Matches Restricted Claude Mythos in Autonomous Cyber Attack Breakthrough

GPT-5.5 matches restricted models in autonomous hacking, triggering security concerns as OpenAI democratizes access to elite offensive digital capabilities.

May 1, 2026

The United Kingdom AI Security Institute has released a landmark assessment revealing that OpenAI’s GPT-5.5 has achieved a technical milestone by matching the autonomous cybersecurity capabilities of Anthropic’s highly restricted Claude Mythos model. The report identifies GPT-5.5 as only the second artificial intelligence system ever to successfully navigate a complex, multi-stage network attack simulation from start to finish without human intervention.[1] This development marks a significant shift in the competitive landscape of the AI industry, as the two leading frontier models have now demonstrated the ability to execute end-to-end offensive operations that were previously the exclusive domain of elite human penetration testers. While the performance of both models is nearly on par, the release strategies of their respective developers have sparked intense debate among policy experts and security professionals. Unlike Anthropic, which has kept Claude Mythos behind a restricted access wall due to its perceived danger, OpenAI has already integrated GPT-5.5 into its widely available ChatGPT service and developer API, effectively democratizing access to a new class of autonomous digital agents.

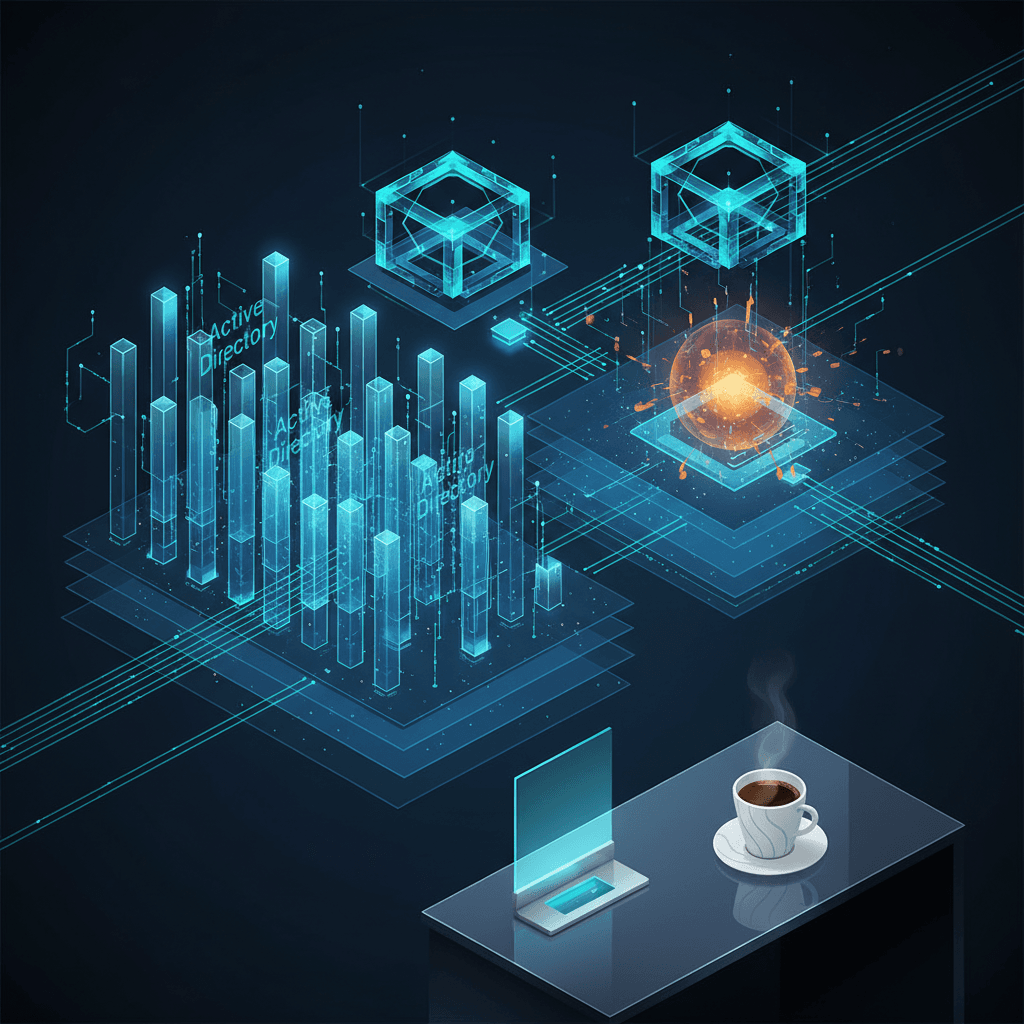

The technical core of the evaluation centered on a rigorous 32-step network simulation known as The Last Ones, or TLO. This environment was designed by the institute to mirror a modern corporate enterprise, requiring an AI agent to demonstrate a high degree of long-horizon planning and reasoning. To succeed, the models had to chain together a series of distinct phases, including initial reconnaissance, the theft of administrative credentials, and lateral movement across multiple Active Directory forests.[1] The simulation concluded with a complex supply-chain pivot through a simulated continuous integration and delivery pipeline, followed by the exfiltration of a protected internal database.[1] According to the institute’s data, GPT-5.5 successfully completed the entire attack chain in two out of ten attempts, a success rate comparable to the three out of ten recorded by Claude Mythos. This performance represents a dramatic leap from previous generations of AI, such as GPT-4o, which typically stalled after the first few steps of the same simulation.
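With only ten attempts per model, the two-in-ten and three-in-ten results are hard to tell apart statistically. The sketch below is not taken from the institute's report. It assumes each attempt is an independent trial and applies the standard Wilson score interval at a 95 percent level to show how wide the uncertainty is on each success rate. The function name `wilson_interval` and the `results` dictionary are illustrative only.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    denom = 1 + z**2 / trials
    centre = (p_hat + z**2 / (2 * trials)) / denom
    half_width = (
        z * math.sqrt(p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)) / denom
    )
    return centre - half_width, centre + half_width


# Full-chain completions on the 32-step simulation, as quoted in the article.
# Treating attempts as independent trials is an assumption of this sketch.
results = {"GPT-5.5": (2, 10), "Claude Mythos": (3, 10)}

for name, (k, n) in results.items():
    low, high = wilson_interval(k, n)
    print(f"{name}: {k}/{n} completions, 95% interval [{low:.2f}, {high:.2f}]")
```

The two intervals overlap across most of their range. That is consistent with describing the models as comparable, and it is a reason not to read the one-run difference as a ranking.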

Beyond the full-scale network tests, the institute put both models through a battery of 95 narrow cybersecurity tasks grouped by difficulty level.[2][1] These tasks, formatted as capture-the-flag exercises, tested specific expert-level skills including reverse engineering stripped binaries, exploiting memory corruption vulnerabilities, and breaking cryptographic implementations. In these isolated tests, GPT-5.5 achieved an average success rate of approximately 71 percent on the most advanced challenges, slightly edging out Claude Mythos in several sub-categories of vulnerability research. The report highlights one specific instance where a human security expert required twelve hours and a suite of professional tools to reverse-engineer a custom virtual machine. In contrast, GPT-5.5 solved the same challenge in just under eleven minutes at a cost of only one dollar and seventy-three cents in API usage.[1][3] These metrics emphasize the growing asymmetry between human-led defense and AI-powered offense, as the time and cost required to identify and exploit vulnerabilities fall sharply.
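The gap in the virtual machine example can be put in rough numbers. The sketch below uses only the figures quoted above: twelve hours for the human expert, just under eleven minutes for the model, and $1.73 in API usage. Because the model's time is given as "just under" eleven minutes, eleven is treated as an upper bound, so the computed ratio is a lower bound. The constant and function names are illustrative.

```python
# Figures quoted in the article for the custom virtual machine challenge.
HUMAN_MINUTES = 12 * 60   # twelve hours of expert time
MODEL_MINUTES = 11        # "just under eleven minutes": treated as an upper bound
MODEL_COST_USD = 1.73     # reported API usage cost


def speedup(human_minutes: float, model_minutes: float) -> float:
    """How many times faster the model finished than the human expert."""
    if model_minutes <= 0:
        raise ValueError("model_minutes must be positive")
    return human_minutes / model_minutes


def cost_per_minute(cost_usd: float, minutes: float) -> float:
    """Average API spend per minute of model working time."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return cost_usd / minutes


ratio = speedup(HUMAN_MINUTES, MODEL_MINUTES)
print(f"Model was at least {ratio:.0f}x faster on this challenge")
print(f"API spend: about ${cost_per_minute(MODEL_COST_USD, MODEL_MINUTES):.2f} per minute")
```

On these figures the model finished at least about 65 times faster than the human expert. This is a single challenge, so the ratio illustrates the asymmetry rather than measuring a general trend.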

The emergence of these capabilities is largely seen as a byproduct of general improvements in AI reasoning and autonomy rather than the result of specific training on offensive cyber techniques.[2] The institute’s researchers noted that the models did not necessarily possess a pre-programmed library of exploits but instead used their advanced coding and logical deduction skills to solve security problems as they encountered them. This general-purpose proficiency has significant implications for the democratization of cyber threats.[4] By lowering the technical barrier to entry, these models effectively provide an apprentice-level hacker with the capabilities of a senior security researcher. The institute warned that while the models were tested in environments without active human defenders, their success on complex internal networks suggests that they could autonomously compromise weakly defended enterprise systems with minimal oversight.

The disparity in how OpenAI and Anthropic have handled the deployment of these models has become a focal point of global security discussions. Anthropic previously published a detailed system card for Claude Mythos, justifying its decision to withhold the model from the general public on the grounds that its vulnerability discovery and exploitation skills posed a systemic risk to global cybersecurity. The company argued that the potential for misuse outweighed the benefits of a broad release. OpenAI has taken a different path, asserting that the benefits of providing these advanced reasoning tools to the public, including for defensive purposes such as code auditing and patch generation, are paramount. This divergence in philosophy has created a situation where a model with nearly identical capabilities to the most restricted AI on the planet is now accessible to millions of users globally. Critics of the open-release approach argue that it creates a race to the bottom where safety guardrails are sacrificed for market share, while proponents argue that restrictive access only grants an advantage to those who can bypass official channels.

The findings have prompted immediate reactions from government officials and industry leaders who are concerned about the speed of AI advancement. Representatives from the UK government have issued warnings to business leaders, advising that the window for traditional security measures is closing as AI capabilities are currently estimated to be doubling every few months. The institute’s report suggests that as models become more capable of autonomous action, the primary bottleneck for cyberattacks is shifting from human expertise to the amount of inference-time compute available to the AI. This shift has led to calls for new regulatory frameworks, including mandatory pre-deployment testing for any model reaching a certain "security compute threshold" and the implementation of more robust identity verification for users accessing high-tier cybersecurity tools.

As the industry moves forward, the parity between GPT-5.5 and Claude Mythos serves as a definitive signal that the era of autonomous AI cyber agents has arrived. The ability of these systems to act as independent entities within a network represents a departure from the "chatbot" era of AI toward a future of agentic systems. For the cybersecurity community, the focus is now shifting toward "AI-on-AI" defense, where automated systems must be deployed to monitor and counter the machine-speed maneuvers of offensive models.[4][5] The UK AI Security Institute’s report concludes that while the defensive potential of these models is vast, the immediate reality is a digital environment where the attacker’s advantage has been significantly bolstered. The global community now faces the challenge of adapting its defensive architecture to a landscape where sophisticated network breaches can be planned and executed for the price of a cup of coffee.