Meta's LeCun Declares LLMs a Dead End, Urges Shift to Sensory World Models

Yann LeCun declares LLMs a dead end for AGI, championing "world models" over token prediction for true intelligence.

December 15, 2025

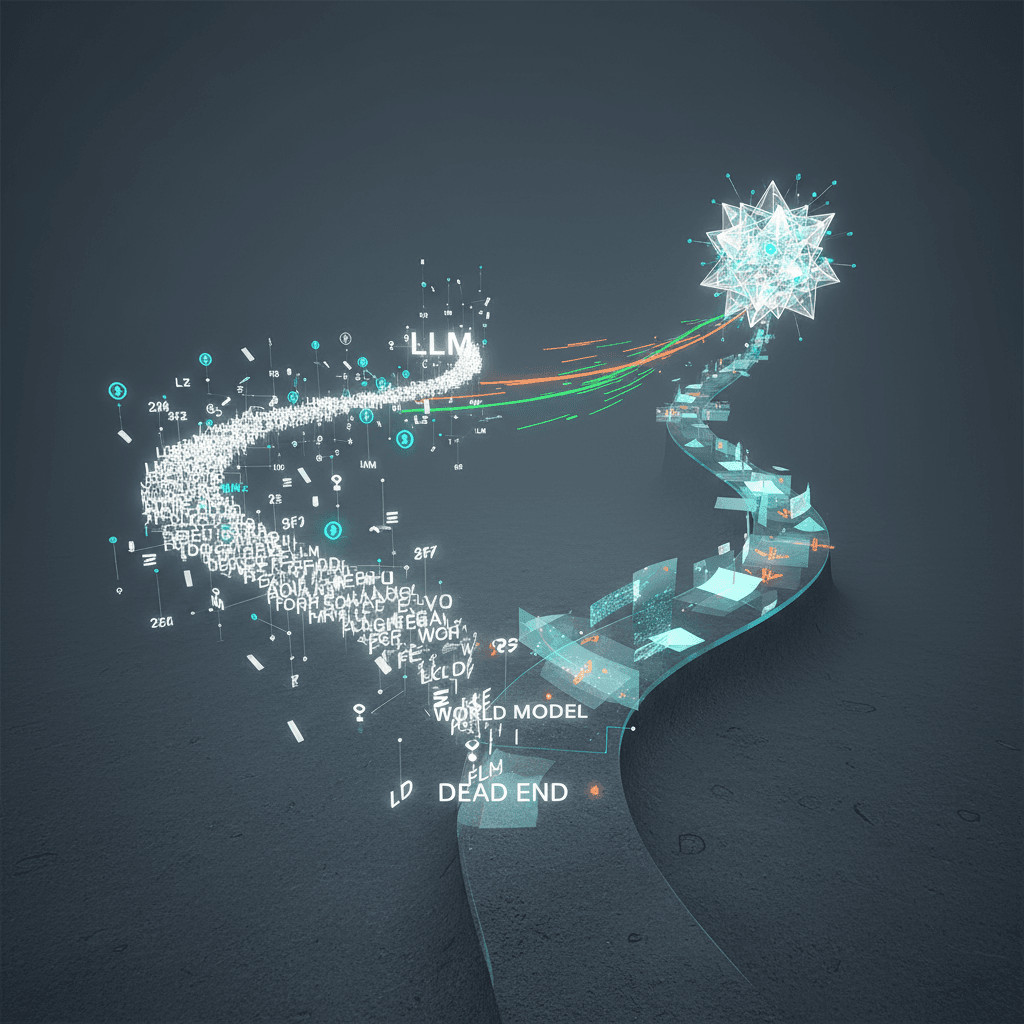

A fierce debate is raging at the heart of the artificial intelligence community, questioning the very foundation upon which today's most impressive AI systems are built. While Large Language Models (LLMs) like ChatGPT have captured the public imagination with their linguistic prowess, some of the field's most respected pioneers argue they are a dead end on the path to true artificial general intelligence (AGI). Leading this charge is Meta's Chief AI Scientist, Yann LeCun, who contends that the core mechanism of these models—predicting the next word, or "token," in a sequence—is a fundamental limitation that prevents them from achieving human-like understanding, reasoning, and planning. This critique challenges the dominant paradigm in AI development and points toward a future that may lie beyond simply scaling up existing architectures.

The crux of LeCun's argument, articulated in various forums and debates, is that autoregressive LLMs are mathematically "doomed."[1] Because these models generate a response one token at a time, he posits, even a small probability of error at each step compounds: the chance that the whole output stays on a correct path shrinks exponentially with its length.[1] The longer the generated text, the more likely the answer is to diverge from a correct or coherent path, a problem he deems not fixable without a major redesign.[1] This inherent unreliability, he argues, makes them unsuitable for critical applications that demand factual accuracy and controllability.[1] Beyond the statistical fragility, LeCun emphasizes a more profound conceptual flaw: LLMs lack a genuine understanding of the world. They are trained on vast amounts of text, but this data is a mere shadow of reality.[2] As a result, they have no grounding in physical reality, cannot grasp cause and effect, and are unable to reason about situations not explicitly described in their training data.[3] This echoes Moravec's paradox: AI can perform tasks that seem complex, like writing code, yet struggles with simple things a young child understands intuitively about the physical world.[1][4]
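To make the compounding-error claim concrete, here is a minimal sketch of the arithmetic behind it. It assumes, as an idealization, that every token independently has the same chance of being wrong and that a single wrong token ruins the answer; real models need not satisfy either assumption. The function name `p_sequence_correct` and the 1% error rate are illustrative choices, not figures from LeCun.

```python
def p_sequence_correct(per_token_error: float, n_tokens: int) -> float:
    """Chance that every one of n_tokens is acceptable.

    Assumption (illustrative only): errors are independent and equally
    likely at each step, so the per-token success rate multiplies.
    """
    if not 0.0 <= per_token_error <= 1.0:
        raise ValueError("per_token_error must be a probability in [0, 1]")
    if n_tokens < 0:
        raise ValueError("n_tokens must be non-negative")
    return (1.0 - per_token_error) ** n_tokens


if __name__ == "__main__":
    # Hypothetical 1% per-token error rate, at three output lengths.
    for n in (10, 100, 1000):
        print(f"{n:>5} tokens -> P(all correct) = {p_sequence_correct(0.01, n):.6f}")
```

Under these assumptions, a model that is right 99% of the time per token has roughly a one-in-three chance of getting a 100-token answer entirely right, and the odds keep shrinking as the output grows.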

This disconnect from the real world manifests in several limitations that keep LLMs from achieving human-level intelligence.[5] According to LeCun, true intelligence requires four characteristics that current models lack: an understanding of the physical world, persistent memory, the ability to reason, and the capacity for hierarchical planning.[5][6] LLMs operate in a transient state, without the ability to truly learn from interactions or accumulate knowledge over time as humans do.[3] Their reasoning is often a clever mimicry of patterns in text rather than deliberative "System 2" thinking, the slow, step-by-step mode of human thought.[2] When an LLM produces an output, it performs a reactive, fixed amount of computation for each token, not a process of deeper contemplation or planning.[2][5] This is why LeCun has advised PhD students to look beyond LLMs, viewing them as an "off-ramp on the road to human-level AI."[3][7] The current industry focus on scaling these models, he believes, is sucking the oxygen out of the room, diverting resources from more promising, foundational research.[5][7]

In place of token prediction, LeCun champions an alternative path centered on what he calls "world models."[8][4] He proposes objective-driven AI systems that learn not from text alone, but from sensory data like video, allowing them to build an internal, predictive simulation of how the world works.[3][8][9] This is analogous to how human infants learn—by observing and interacting with their environment, they develop an intuitive understanding of physics and causality long before they master language.[10] To achieve this, LeCun and his team at Meta have been developing Joint Embedding Predictive Architectures (JEPA).[1][11] Unlike generative models that try to predict every pixel in the next frame of a video—a computationally expensive and inefficient task—JEPAs work by learning abstract representations of the world.[11][2] They predict the future state of the world within this abstract space, which is a more manageable and promising approach to building systems that can genuinely understand, reason, and plan.[11][10] This would allow an AI to simulate the potential outcomes of its actions, a crucial step towards creating intelligent agents that can interact safely and effectively with the real world.[10]
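The difference between predicting pixels and predicting in an abstract space can be sketched in a few lines. The toy below is not Meta's JEPA code: the dimensions, the random linear "encoders," and the name `jepa_style_loss` are all hypothetical, and nothing is trained. It only shows where the prediction error is measured, namely between embeddings rather than between raw frames.

```python
import random

OBS_DIM = 64  # hypothetical: flattened "pixels" of one video frame
EMB_DIM = 4   # hypothetical: size of the abstract representation


def make_matrix(rows, cols, rng):
    """Random weight matrix, stored as a list of rows."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def linear(weights, vec):
    """Apply a weight matrix to a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


rng = random.Random(0)
encoder = make_matrix(EMB_DIM, OBS_DIM, rng)    # embeds the current frame
target_encoder = [row[:] for row in encoder]    # embeds the next frame (plain copy here)
predictor = make_matrix(EMB_DIM, EMB_DIM, rng)  # predicts the next embedding


def jepa_style_loss(frame_now, frame_next):
    """Prediction error measured in embedding space, not pixel space."""
    s_now = linear(encoder, frame_now)
    s_next = linear(target_encoder, frame_next)
    s_pred = linear(predictor, s_now)
    return squared_error(s_pred, s_next)


if __name__ == "__main__":
    frame_now = [rng.random() for _ in range(OBS_DIM)]
    frame_next = [rng.random() for _ in range(OBS_DIM)]
    print("latent-space loss:", jepa_style_loss(frame_now, frame_next))
```

Here the loss compares 4 numbers instead of 64, so the model is never asked to reproduce every pixel. A trained system would also need some mechanism to stop the encoders from collapsing to a constant output, which this sketch omits.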

The implications of this debate extend far beyond academic circles, carrying significant weight for the future direction of the multi-billion-dollar AI industry. While companies like OpenAI and Google continue to invest heavily in scaling ever-larger language models, LeCun's critique suggests this strategy may eventually hit a wall.[7] If he is correct, the next major breakthroughs in AI will come not from refining existing LLMs but from entirely new architectures designed to learn from a richer, more diverse set of data that includes video and other sensory inputs.[5] This shift would have a ripple effect across the industry, affecting everything from the development of autonomous robots and self-driving cars to the creation of truly helpful AI assistants.[4] While the capabilities of current LLMs are undeniable, the case against relying solely on token prediction is a reminder that the path to artificial general intelligence is likely to be more complex than simply predicting the next word. The focus may need to shift from building word models to constructing true world models.[3]

Sources

[1]

[3]

[5]

[6]

[8]

[9]

[10]

[11]