Meta faces massive EU privacy probe as Kenyan workers review private smart glasses footage

A hidden pipeline of private user footage to Kenyan annotators exposes Meta’s AI to ethical backlash and European scrutiny.

March 3, 2026

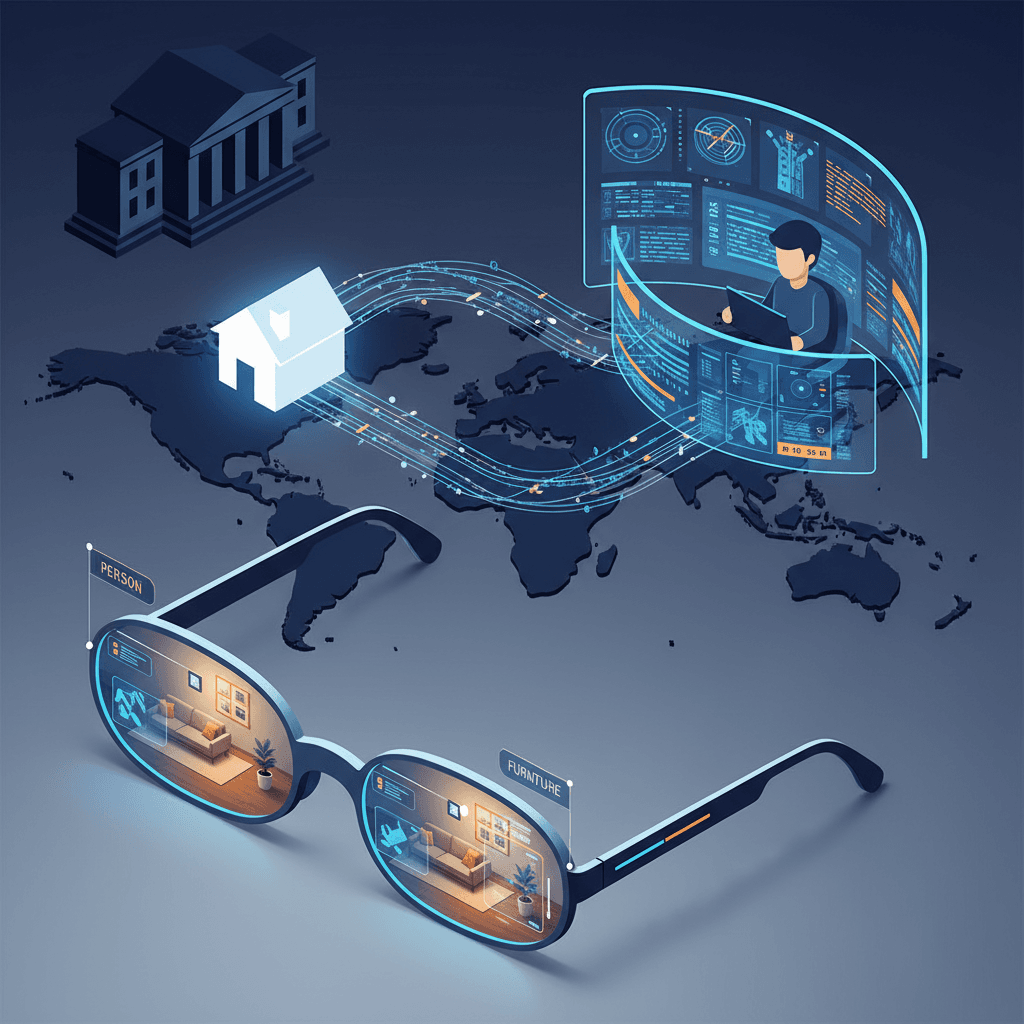

The rapid expansion of artificial intelligence into wearable technology has reached a critical juncture as Meta faces intense scrutiny over the privacy practices surrounding its popular Ray-Ban smart glasses.[1][2][3][4] While the company has marketed the device as a seamless fusion of fashion and cutting-edge AI, a series of investigative reports has revealed a troubling pipeline of private data flowing from the homes of Western users to data processing centers in Nairobi, Kenya. This revelation has not only sparked an ethical debate regarding the "ghost work" that powers modern AI but has also placed Meta on a collision course with European privacy regulators who are increasingly wary of how personal data is exported and processed across international borders.

The controversy stems from an investigation by the Swedish newspapers Svenska Dagbladet and Göteborgs-Posten, which found that human contractors in Kenya are tasked with reviewing and labeling raw footage captured by the glasses.[3][5][6] These workers, employed by the third-party firm Sama, act as the "human in the loop" that Meta relies on to train its multimodal AI systems. To improve the "Look and Ask" feature, which lets users ask the AI questions about what they are seeing in real time, the system must first be trained on vast amounts of visual data. However, the investigation found that the material reviewed by Kenyan annotators is often deeply personal and captured in settings where users have the highest expectation of privacy. Workers reported seeing people in states of undress, intimate sexual encounters, and even sensitive financial documents such as bank cards and statements.[3]

Central to Meta's defense is the claim that it employs robust safeguards to protect user identity, including automated tools designed to blur faces and sensitive information before human reviewers see the footage. Yet testimony from Nairobi-based workers suggests these technical guardrails frequently fail.[3] Multiple annotators described instances where the anonymization software did not work, leaving faces clearly visible and identifiable. In other cases, the "look" function was triggered accidentally or left running, capturing footage of users in private moments without their explicit awareness.[3] This gap between Meta's public privacy promises and the reality of its data pipeline has raised fundamental questions about the transparency of AI training practices and whether users truly understand how much human review is involved in "cloud processing."[3]

The legal implications of these findings are particularly acute in the European Union, where the General Data Protection Regulation (GDPR) sets a high bar for the handling of personal data.[7][4] Under EU law, transferring personal data to "third countries" that lack an adequacy decision from the European Commission (a list that currently does not include Kenya) requires rigorous safeguards, such as contractual protections, along with a valid legal basis for the processing, such as clear, informed consent from the people whose data is involved.[5][4] European privacy watchdogs, led by the Irish Data Protection Commission, have begun looking into whether Meta's data exports comply with these standards.[4] Lawmakers in the European Parliament have already voiced concerns that Meta may be sidestepping strict consent requirements by burying the details of human review deep within complex terms of service. If regulators determine that Meta failed to provide sufficient transparency or that its anonymization techniques are inadequate, the company could face fines of up to 4% of its global annual turnover, potentially running into the billions, alongside possible orders to halt data processing in the region.

This is not the first time Meta and its contractor, Sama, have been at the center of a labor and privacy firestorm.[8] Sama was previously Meta's primary content moderation partner in Africa, a relationship that ended amid a flurry of lawsuits and allegations of exploitative working conditions.[9][8] Former employees in Nairobi have described the psychological toll of reviewing graphic and disturbing content for wages as low as $1.50 to $2.00 per hour. The shift from content moderation to AI data labeling has changed the nature of the work, but many of the underlying issues remain. Workers now find themselves acting as silent observers of private lives, often feeling like voyeurs in a process they are powerless to change. The ethical burden is compounded by reports that many contractors feel pressured to meet high productivity quotas, leaving little room for careful handling of the sensitive material they encounter.

The scale of the privacy risk is growing in tandem with the device's commercial success. Industry data suggests that Meta sold over seven million pairs of its smart glasses in 2025 alone, a massive increase from the two million units sold in previous years.[3] As the user base grows, so does the volume of visual and auditory data being uploaded to Meta’s servers. Unlike smartphones, which are generally held in the hand and directed intentionally, smart glasses are designed for passive, "always-on" engagement. This shift in form factor creates a new set of challenges for bystanders who may be recorded without their knowledge.[10][3] While Meta has included a small LED light on the frames to signal when a recording is in progress, critics argue this is an insufficient warning in public spaces and does nothing to protect the privacy of those within the wearer’s own household.

From a broader industry perspective, the Meta scandal highlights a systemic tension within the AI sector: the need for massive datasets versus the right to individual privacy. To remain competitive with rivals like Apple and Google, Meta must continuously refine its AI’s ability to understand the physical world. This requires high-quality, human-labeled data that covers a wide range of real-world scenarios. However, the current model of exporting this task to low-wage jurisdictions with fewer regulatory hurdles is becoming increasingly untenable. If the European Commission moves to tighten the rules on AI data labeling, it could force a radical shift in how these technologies are developed, potentially moving toward "on-device" processing where data never leaves the user’s hardware. While this would be a victory for privacy, it would also represent a significant technical hurdle for companies that currently rely on the power of the cloud.

The fallout from the Kenyan data labeling revelations is likely to define the next phase of wearable AI regulation. For years, tech companies have benefited from a lack of specific laws governing the intersection of AI, cameras, and person-to-person interaction in the physical world. That era of ambiguity is rapidly ending. As consumer trust wavers, the industry faces a choice: double down on opaque data pipelines that prioritize rapid development, or embrace a more transparent, privacy-first model that grants users genuine control over their digital footprint. For Meta, the stakes are particularly high. After spending years trying to rehabilitate its image following the Cambridge Analytica scandal, the company now finds itself defending the very core of its next-generation hardware strategy against charges that it has once again prioritized growth over the fundamental rights of its users.

The final outcome of this controversy will likely hinge on the findings of the Irish Data Protection Commission and other national watchdogs across Europe. If they determine that Meta's "privacy by design", a principle the GDPR itself requires, is more of a marketing slogan than a technical reality, it could trigger a series of cascading legal challenges that fundamentally alter the landscape of the AI industry. Beyond the legalities, there is the enduring question of the human cost. As long as the world's most advanced AI systems depend on the invisible labor of workers in Nairobi, the industry will remain haunted by the ethical contradictions of the digital age. The vision of a future where AI understands our every need is enticing to many, but the current reality, in which that understanding is built on the backs of underpaid workers viewing our most private moments, is a price that many consumers and regulators may ultimately find too high to pay.