DeepMind Veo 3.1 shifts AI video: Creators now direct clips with reference images.

Veo 3.1 leverages visual references, 4K resolution, and directorial controls to solve AI video's consistency problem.

January 13, 2026

Google DeepMind has unveiled a substantial upgrade to its video generation model, Veo 3.1, adding a set of features centered on greater creative control and professional-grade output quality. The key change is an enhanced "Ingredients to Video" function, which lets users supply reference images to produce more consistent and dynamic video clips. The development marks a shift in AI video from purely text-based generation toward a more visually directed form of digital filmmaking, and it positions Veo 3.1 as a strong contender in a rapidly evolving market.[1][2]

The core of the Veo 3.1 update is its improved ability to translate visual input into a moving sequence. The "Ingredients to Video" feature, now more expressive and consistent, lets creators upload up to three distinct reference images to guide the final output. Each image can serve as a visual anchor for a specific character, a detailed background scene, or an overall artistic style, and the model analyzes and synthesizes them into a single, cohesive video. This multi-image input targets a pervasive problem in early generative AI models: characters and objects often failed to keep their visual identity from frame to frame, showing up as flickering or distortion. By anchoring generation to precise visual cues, Veo 3.1 aims for closer fidelity to the creator's vision and for sequences with fluid continuity in lighting, shadows, and motion, so that the resulting clips feel more natural.[1][3][4][2]
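The three-image limit and the character, scene, and style roles described above can be pictured as a small request structure. The following is a minimal sketch only: the class names, field names, and file names are hypothetical and do not reflect the actual Gemini API or Vertex AI request schema.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical names throughout: this is NOT the Gemini API or Vertex AI schema.
MAX_REFERENCE_IMAGES = 3  # "up to three" reference images, per the article
ALLOWED_ROLES = {"character", "scene", "style"}  # roles named in the article


@dataclass(frozen=True)
class ReferenceImage:
    path: str  # local file path or URI of the image (illustrative)
    role: str  # what the image anchors: character, scene, or style

    def __post_init__(self) -> None:
        if self.role not in ALLOWED_ROLES:
            raise ValueError(f"unknown role: {self.role!r}")


@dataclass
class IngredientsRequest:
    prompt: str
    references: List[ReferenceImage] = field(default_factory=list)

    def add(self, image: ReferenceImage) -> None:
        """Attach a reference image, enforcing the three-image cap."""
        if len(self.references) >= MAX_REFERENCE_IMAGES:
            raise ValueError(
                f"at most {MAX_REFERENCE_IMAGES} reference images allowed"
            )
        self.references.append(image)


# Usage: one image per role, which fills the request to its limit.
request = IngredientsRequest(prompt="A courier cycles through a rainy neon street")
request.add(ReferenceImage("courier.png", "character"))
request.add(ReferenceImage("street.png", "scene"))
request.add(ReferenceImage("palette.png", "style"))
print(len(request.references))
```

Validating the cap on the client side, as in this sketch, is a design choice for illustration; a real integration would defer to whatever limits the service itself enforces.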

Beyond creative control, the update addresses the increasingly mobile-first and high-resolution demands of modern content production. The model now supports native vertical output, a critical addition for creators targeting YouTube Shorts and other short-form video platforms. Generating directly in portrait mode, typically at a 9:16 aspect ratio, lets content be built for mobile viewing from the start, without the compromises of cropping or resizing a landscape video. Veo 3.1 also introduces upscaling, with final output available at 1080p and 4K. This focus on high-fidelity output caters to professional workflows, marketing campaigns, and large-screen viewing, where sharp textures and clarity matter most. The API offers both standard and "Fast" model variants at different per-second prices, so users can choose maximum quality for professional projects or lower cost for rapid iteration and storyboarding.[5][6][7][2]
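To make the aspect-ratio and per-second pricing points concrete, here is a small sketch. The frame sizes are the standard Full HD and UHD dimensions rather than figures taken from Veo documentation, and the per-second rates are placeholders, since the article gives no prices. All function and variable names are hypothetical.

```python
from decimal import Decimal

# Long-edge and short-edge pixel counts for the two output tiers named above.
# Standard Full HD and UHD sizes; assumed, not confirmed by Veo documentation.
RESOLUTIONS = {"1080p": (1920, 1080), "4k": (3840, 2160)}

# Placeholder per-second prices in USD. Hypothetical: the article gives no figures.
RATE_PER_SECOND = {"standard": Decimal("0.50"), "fast": Decimal("0.20")}


def frame_size(resolution: str, vertical: bool) -> tuple:
    """Return (width, height); vertical=True gives a 9:16 portrait frame."""
    long_edge, short_edge = RESOLUTIONS[resolution]
    return (short_edge, long_edge) if vertical else (long_edge, short_edge)


def estimate_cost(seconds: int, variant: str) -> Decimal:
    """Cost of generating `seconds` of video with the given model variant."""
    return RATE_PER_SECOND[variant] * seconds


# Usage: a vertical 1080p clip, priced for a draft and a final render.
width, height = frame_size("1080p", vertical=True)
print(width, height)
print(estimate_cost(8, "fast"), estimate_cost(8, "standard"))
```

Under these placeholder rates, iterating on the cheaper variant and rendering only the final take on the standard one is the trade-off the two-tier pricing is meant to support.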

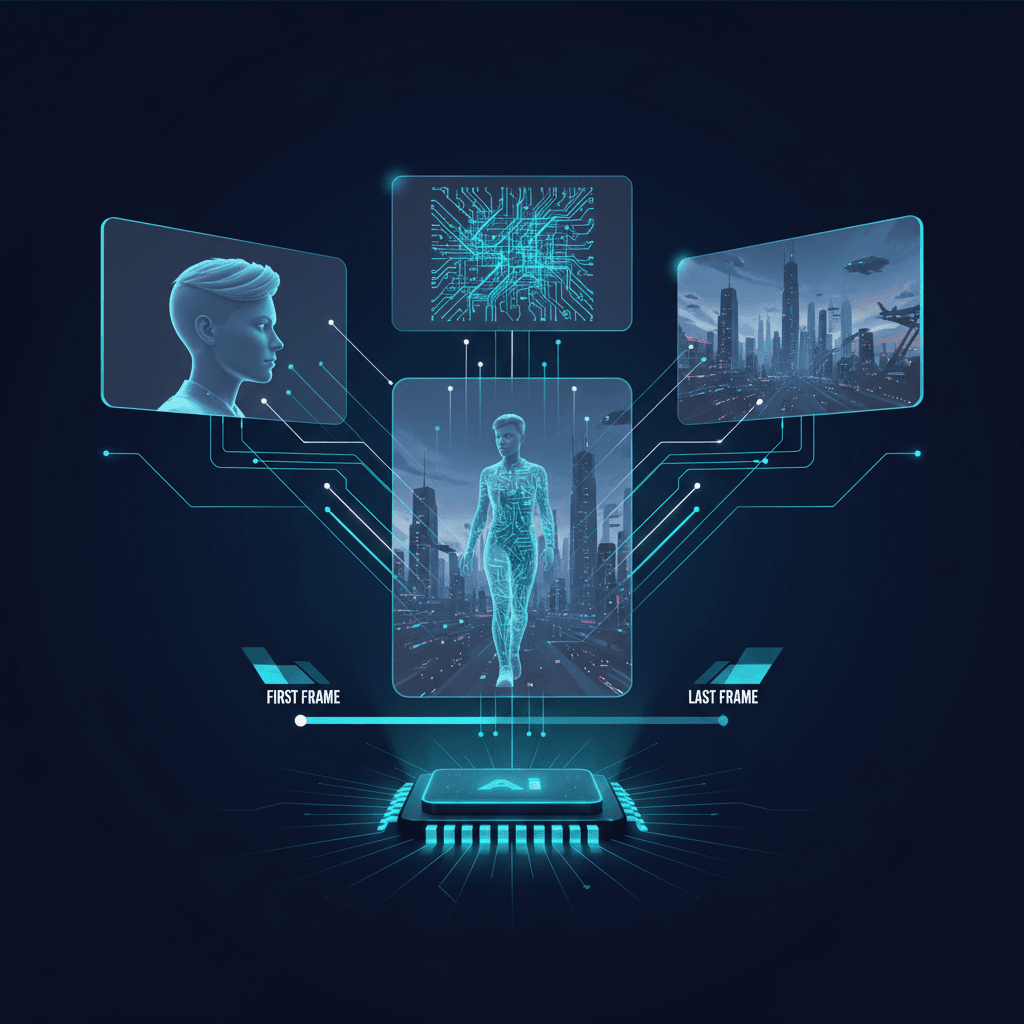

The enhanced controls in Veo 3.1 extend beyond static image references, giving users a more directorial toolset. The model features "First and Last Frame" support, which lets creators define both the beginning and end state of a video segment. Given a starting image and an ending image, the model generates the motion and transition between the two frames, complete with synchronized audio. This capability is well suited to cinematic effects such as product reveals, transformations, and seamless time-lapses. Native audio generation produces synchronized dialogue, sound effects, and background music alongside the visuals, which reduces the need for external audio production and complex post-generation editing. The update also includes "Scene Extension," which lets users chain generated clips together while maintaining visual and audio continuity, producing videos that last a minute or more and moving past the length limits of short-form AI video.[3][8][7][9]
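The arithmetic behind reaching "a minute or more" through Scene Extension can be sketched as follows. The base clip length and the seconds added per extension are assumptions chosen for illustration; the article does not state them, and the function names are hypothetical.

```python
def extensions_needed(target_seconds: int, base_seconds: int, added_per_extension: int) -> int:
    """Number of extension steps required to reach at least target_seconds."""
    if added_per_extension <= 0:
        raise ValueError("each extension must add a positive number of seconds")
    remaining = target_seconds - base_seconds
    if remaining <= 0:
        return 0
    # Integer ceiling division: round up so the target is always reached.
    return -(-remaining // added_per_extension)


def plan_extension(target_seconds: int, base_seconds: int, added_per_extension: int) -> tuple:
    """Return (number of extensions, resulting total length in seconds)."""
    steps = extensions_needed(target_seconds, base_seconds, added_per_extension)
    return steps, base_seconds + steps * added_per_extension


# Assumed values for illustration: an 8-second base clip, 7 seconds per extension.
steps, total = plan_extension(target_seconds=60, base_seconds=8, added_per_extension=7)
print(steps, total)
```

With these assumed values, a one-minute target takes eight extensions and yields a 64-second video; different clip lengths would change the count but not the calculation.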

The update has significant implications for the broader AI industry and the competitive landscape of generative media. Veo 3.1 is accessible across multiple Google platforms, including the Gemini app, YouTube Create, Flow, the Gemini API, and Vertex AI, and it strengthens Google DeepMind's position in a market that has seen intense competition from models such as OpenAI's Sora and Runway's Gen-3. By prioritizing granular creative control, character consistency, and broadcast-ready resolution, Google is addressing the main pain points that have limited professional adoption of earlier AI video tools. SynthID watermarking on all generated videos also signals a commitment to ethical and transparent content creation, so that increasingly realistic AI-generated media remains identifiable. The mix of professional tools, enterprise-level APIs, and consumer-friendly applications reflects a strategy to cover the full spectrum of the content creation market, from casual social media storytellers to large-scale generative film studios.[1][4][6][7][10]

Sources

[1]

[2]

[6]

[7]

[8]

[9]

[10]