ByteDance AI Video Gains Memory, Creates Cohesive Cinematic Stories

How StoryMem’s visual memory mechanism delivers consistent characters and scenes in minute-long AI narratives.

January 3, 2026

StoryMem, a new AI video generation framework developed by ByteDance researchers in collaboration with Nanyang Technological University, addresses one of the most persistent limitations of current generative video models: maintaining visual consistency across multiple scenes and shots. AI-generated videos, while often visually impressive in short bursts, have long been plagued by "shapeshifting," in which main characters, objects, or even entire environments change appearance between cuts, destroying narrative coherence and cinematic quality. StoryMem introduces a "visual memory" mechanism intended to overcome this hurdle, enabling cohesive, multi-shot narratives that run a minute or longer.[1][2][3][4]

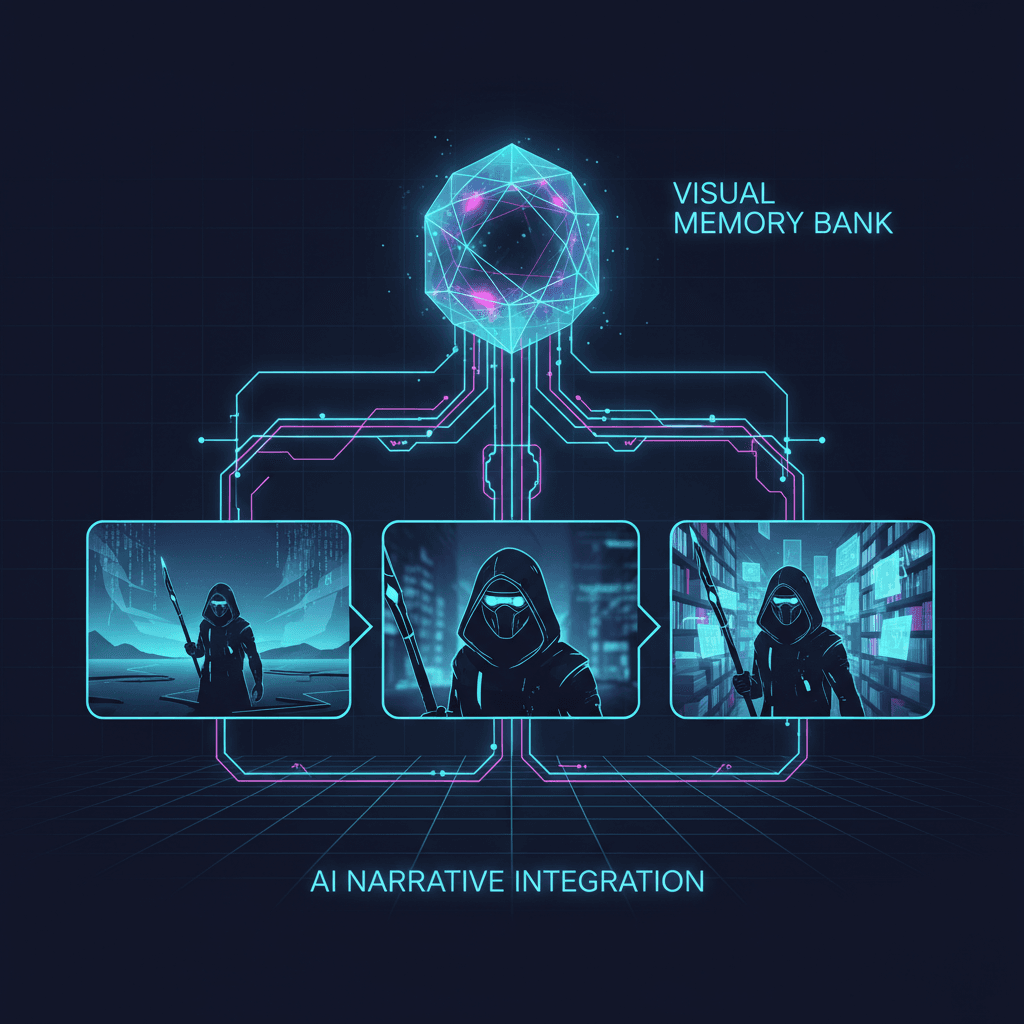

The core innovation is the Memory-to-Video (M2V) design, a paradigm inspired by the way human memory selectively retains and retrieves salient visual information. Instead of modeling an entire long video sequence jointly, which is computationally expensive and hard to train, StoryMem reformulates the problem as iterative shot synthesis conditioned on an explicit, dynamically updated memory bank. This compact memory bank stores keyframes extracted from previously generated shots, which then serve as a persistent visual reference when the model synthesizes the next shot.[5][6][7][4] This autoregressive approach turns an existing, high-quality single-shot video diffusion model, specifically Alibaba's open-source Wan2.2 base model, into a storyteller capable of long-form, multi-shot videos.[1][8][2][9]
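
The shot-by-shot loop described above can be sketched in a few lines. This is a minimal illustration rather than StoryMem's actual code: `MemoryBank`, `generate_story`, and `fake_generate_shot` are hypothetical names, frames are plain strings standing in for images, and the "keep the middle frame" update is a placeholder for the real keyframe selection.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Frame = str  # stand-in for an image or latent tensor (assumption)


@dataclass
class MemoryBank:
    """Compact store of keyframes taken from previously generated shots."""
    capacity: int = 4
    keyframes: List[Frame] = field(default_factory=list)

    def update(self, shot_frames: List[Frame]) -> None:
        # Placeholder policy: keep the middle frame of each new shot.
        self.keyframes.append(shot_frames[len(shot_frames) // 2])
        # Evict the oldest keyframes once the bank exceeds its capacity.
        overflow = len(self.keyframes) - self.capacity
        if overflow > 0:
            del self.keyframes[:overflow]


def generate_story(
    prompts: List[str],
    generate_shot: Callable[[str, List[Frame]], List[Frame]],
    bank: MemoryBank,
) -> List[List[Frame]]:
    """Generate shots one at a time, each conditioned on the memory bank."""
    shots = []
    for prompt in prompts:
        # The single-shot model sees the prompt plus a snapshot of the memory.
        shot = generate_shot(prompt, list(bank.keyframes))
        shots.append(shot)
        # The memory is updated only after the shot has been generated.
        bank.update(shot)
    return shots


def fake_generate_shot(prompt: str, memory: List[Frame]) -> List[Frame]:
    # Stub generator: labels record how many memory keyframes were visible.
    return [f"{prompt}|mem={len(memory)}|f{i}" for i in range(3)]


if __name__ == "__main__":
    bank = MemoryBank(capacity=2)
    for shot in generate_story(["intro", "chase", "finale"], fake_generate_shot, bank):
        print(shot[0])
```

The point of the structure is that each call to the generator handles only one shot, so the cost per step stays that of a single-shot model while the memory bank carries character and scene references forward.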

The technical implementation of the M2V design is what gives StoryMem its efficiency. The stored memory keyframes are encoded and then injected into the single-shot video diffusion model through a combination of latent concatenation and negative RoPE (rotary position embedding) shifts. This mechanism cues the model on what the character, costume, and environment should look like, even as the camera angle, focal length, or overall scene changes dramatically. Crucially, the model is adapted for this memory conditioning using only lightweight LoRA fine-tuning, which preserves the visual quality of the base model while adding long-range consistency.[5][6][8][7][10] This strategy avoids large-scale retraining on scarce multi-shot video data, a common bottleneck for approaches that try to model all shots simultaneously.[5][7] The memory bank itself is managed by an update strategy that combines semantic keyframe selection with aesthetic preference filtering, so that it stays compact and stores only relevant, high-quality, non-redundant visual information, much as human recall does.[5][11][2]
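
As a rough sketch of the two ideas in this paragraph, the code below shows one plausible way to assign negative temporal positions to memory frames that are concatenated ahead of the current shot, and one plausible memory update that filters candidates by an aesthetic score and by redundancy. Everything here is an assumption made for illustration: the function names, the thresholds, the position spacing, and the use of cosine similarity over plain lists are not taken from the StoryMem release, where embeddings and scores would come from pretrained models.

```python
import math
from typing import List, Sequence, Tuple

Embedding = List[float]


def temporal_positions(num_memory: int, num_shot_frames: int, gap: int = 1) -> List[int]:
    """Positions for [memory frames | current shot frames] along the time axis.

    Memory frames get negative indices so the shot keeps positions
    0..T-1, the range a single-shot model is used to (assumed layout).
    """
    memory = [-(num_memory - i) * gap for i in range(num_memory)]
    shot = list(range(num_shot_frames))
    return memory + shot


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def update_memory(
    memory: List[Embedding],
    candidates: List[Tuple[Embedding, float]],  # (embedding, aesthetic score)
    min_aesthetic: float = 0.5,
    max_similarity: float = 0.9,
    capacity: int = 4,
) -> List[Embedding]:
    """Add candidate keyframes that are good-looking and not redundant."""
    updated = list(memory)
    for embedding, score in candidates:
        if score < min_aesthetic:
            continue  # aesthetic filter: drop low-quality frames
        if any(cosine(embedding, kept) > max_similarity for kept in updated):
            continue  # semantic filter: drop near-duplicates of stored frames
        updated.append(embedding)
    return updated[-capacity:]  # keep the bank compact, newest entries last


if __name__ == "__main__":
    print(temporal_positions(num_memory=2, num_shot_frames=3))
    bank = update_memory(
        memory=[[1.0, 0.0]],
        candidates=[([0.99, 0.05], 0.9), ([0.0, 1.0], 0.2), ([0.0, 1.0], 0.8)],
    )
    print(bank)
```

In the example call, the first candidate is rejected as a near-duplicate of the stored keyframe, the second is rejected for its low score, and the third is added.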

StoryMem's consistency gains show up in its reported performance against existing state-of-the-art models. In experiments on the newly introduced ST-Bench, a diverse benchmark for multi-shot video storytelling comprising 300 story prompts, StoryMem demonstrated superior cross-shot consistency. The framework reportedly improved cross-shot consistency by approximately 28.7% over the baseline Wan model and outperformed the previous state-of-the-art method, HoloCine, by 9.4%.[1][3] This allows the generation of narratives such as a minute-long video depicting a complex character journey, in which the protagonist's face and attire stay consistent across a sequence of scenes, from wide shots to close-ups.[1][12] The code, models, and the ST-Bench dataset have been released as an open-source framework, giving developers and researchers a base to build on.[1][13][14]

The implications of StoryMem extend beyond a technical fix; the system pushes AI video closer to being a practical tool for cinematic storytelling. The ability to generate coherent, minute-long narratives from a script with consistent characters and scenes opens new avenues for content creators, independent filmmakers, and the advertising industry. Instead of manually editing out or correcting visual inconsistencies in generated footage, users can focus on refining story structure and directorial prompts. This narrows the gap between impressive but isolated short clips and the demands of a sequential, narrative-driven medium. Challenges remain, such as occasional artifacts from the underlying video model and potential confusion in complex multi-character scenes, but StoryMem marks a point at which AI-generated video moves from novelty toward a viable element of the professional content creation pipeline.[1][6][2][4] The framework's lightweight design, which builds on existing diffusion models, suggests that future AI filmmaking tools may prioritize intelligent memory and story-level coherence over sheer computational power.[2][7][4]

Sources

[1]

[2]

[4]

[5]

[6]

[7]

[9]

[10]

[11]

[12]

[13]

[14]