AI Agents Face Memory Crisis; New Hierarchical Architectures Unlock Next-Gen Persistence

Beyond the KV cache: engineering tiered, stateful memory is the new frontier for scaling long-running AI agents.

January 7, 2026

The proliferation of agentic artificial intelligence, a critical evolution from reactive, single-turn chatbots toward autonomous, multi-step systems, has exposed a fundamental constraint in current AI infrastructure: a bottleneck in memory architecture. As foundation models scale toward trillions of parameters and context windows stretch to millions of tokens, the cost of retaining an agent's history and state is rising faster than the capacity to process it, creating a serious operational and economic hurdle for organizations deploying these systems. The challenge is pushing the industry beyond monolithic model scaling and toward entirely new, tiered memory systems that give long-running AI agents persistence, efficiency, and coherence.

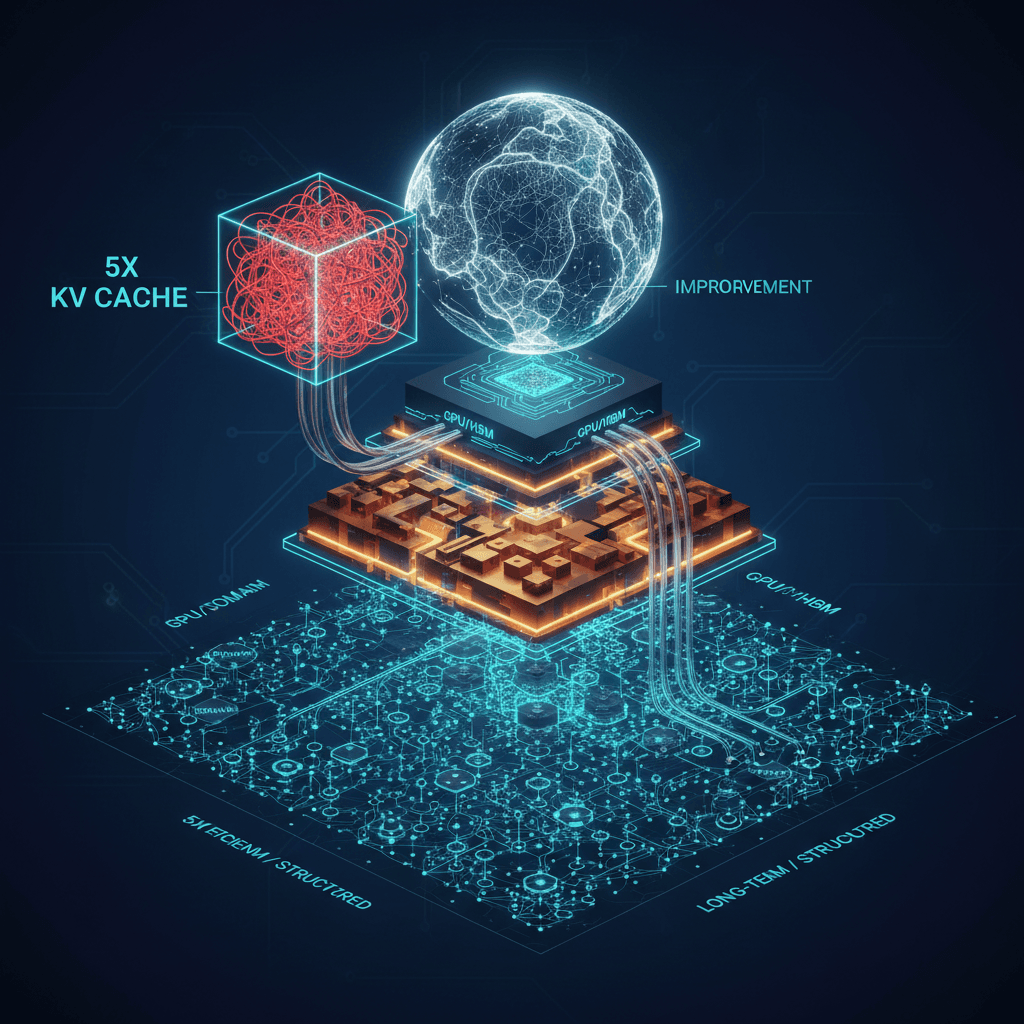

The core of the problem lies in the Key-Value (KV) cache, the short-term working memory of transformer-based models. To avoid recomputing attention over the entire history for every new token, the model caches the key and value vectors of previous tokens at each attention layer, and this cache is held in scarce, high-bandwidth GPU memory (HBM). That works well for simple interactions, but in agentic workflows the KV cache must persist across many steps, tools, and sessions, and it grows linearly with sequence length. Enterprises are left with a binary, unscalable choice: keep vast contexts in prohibitively expensive HBM, or relegate the data to slow, general-purpose storage, whose latency makes real-time agentic interaction impractical. This inability to manage long-term context efficiently leads to common failure modes such as "context distraction," in which the agent struggles to distinguish recent, relevant information from older data that is semantically similar but contextually irrelevant, resulting in incoherent behavior and reduced reliability.[1][2][3]
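The linear growth is easy to quantify. The back-of-the-envelope calculator below multiplies out the standard KV-cache size formula (two cached vectors, key and value, per KV head, per layer, per token). The model dimensions are assumptions chosen to resemble a hypothetical 70B-class model using grouped-query attention; they are not figures from this article.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_elem: int = 2, batch_size: int = 1) -> int:
    """Bytes needed to cache keys and values for every token in the sequence.

    The leading factor of 2 accounts for storing both a key vector and a value
    vector per KV head, per layer, per token. bytes_per_elem=2 assumes FP16/BF16.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_elem * batch_size


# Hypothetical 70B-class configuration with grouped-query attention (assumed values).
CONFIG = dict(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_elem=2)

for tokens in (8_000, 128_000, 1_000_000):
    gb = kv_cache_bytes(seq_len=tokens, **CONFIG) / 1e9
    print(f"{tokens:>9,} tokens -> {gb:8.2f} GB of KV cache")
```

At these assumed dimensions each token costs 327,680 bytes (about 320 KiB), so a single one-million-token sequence needs roughly 328 GB of KV cache, about four times the HBM of one 80 GB accelerator and before counting the model weights themselves.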

In response to this architectural disparity, the industry is shifting toward hierarchical, adaptive memory frameworks that treat memory as a structured, multi-level resource rather than a flat, undifferentiated database. One emerging solution adds a distinct, purpose-built memory tier. For instance, the Inference Context Memory Storage (ICMS) platform introduces an intermediate layer, sometimes referred to as a "G3.5" tier: an Ethernet-attached flash layer designed explicitly for gigascale inference. This tier integrates storage directly into the compute pod, offering petabytes of shared capacity per pod that is significantly faster than standard storage yet cheaper than HBM. By prestaging the necessary context back to the GPU before it is needed, the intermediate layer can boost tokens-per-second (TPS) throughput by up to five times for long-context workloads while delivering a five-fold improvement in power efficiency compared with traditional storage. Reclassifying the KV cache as a distinct "ephemeral but latency-sensitive" data type is fundamentally changing how data centers are designed and how Chief Information Officers must approach capacity planning.[2]
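To make the tiering idea concrete, here is a toy model of a three-level store for KV-cache blocks with least-recently-used (LRU) demotion and explicit prestaging. It is a minimal sketch under stated assumptions, not how ICMS works internally: the tier roles (HBM, pod-level flash, general storage), the block capacities, and the LRU policy are all hypothetical choices for illustration.

```python
from collections import OrderedDict


class TieredKVStore:
    """Toy three-tier store for KV-cache blocks (hypothetical tiers and sizes).

    Tier 0 stands in for GPU HBM, tier 1 for a pod-level flash tier, and tier 2
    for general-purpose storage. Each tier is an LRU: when a tier overflows, its
    least recently used block is demoted to the next tier down.
    """

    def __init__(self, capacities):
        # Max blocks per tier; None means unbounded (used here for the last tier).
        self.capacities = list(capacities)
        self.tiers = [OrderedDict() for _ in self.capacities]

    def _insert(self, level, block_id, data):
        tier = self.tiers[level]
        tier[block_id] = data
        tier.move_to_end(block_id)  # mark as most recently used
        cap = self.capacities[level]
        if cap is not None and len(tier) > cap:
            victim_id, victim = tier.popitem(last=False)  # evict the LRU block
            if level + 1 < len(self.tiers):
                self._insert(level + 1, victim_id, victim)  # demote instead of dropping

    def put(self, block_id, data):
        for tier in self.tiers:
            tier.pop(block_id, None)  # keep each block in exactly one tier
        self._insert(0, block_id, data)

    def locate(self, block_id):
        """Return the tier index holding the block, or None if it is absent."""
        for level, tier in enumerate(self.tiers):
            if block_id in tier:
                return level
        return None

    def get(self, block_id):
        """Return (data, tier it was found in), promoting the block to tier 0."""
        level = self.locate(block_id)
        if level is None:
            raise KeyError(block_id)
        data = self.tiers[level].pop(block_id)
        self._insert(0, block_id, data)
        return data, level

    def prestage(self, block_ids):
        """Promote blocks the scheduler expects to need soon, ahead of decoding."""
        for block_id in block_ids:
            if self.locate(block_id) not in (None, 0):
                self.get(block_id)


# Usage with hypothetical capacities: 2 blocks of "HBM", 4 of flash, unbounded storage.
store = TieredKVStore(capacities=[2, 4, None])
for i in range(6):
    store.put(f"blk{i}", f"kv-{i}")
print(store.locate("blk0"))  # -> 1 (blk0 was demoted to the flash tier)
store.prestage(["blk0"])     # scheduler expects blk0 on the next step
print(store.locate("blk0"))  # -> 0
```

The design choice worth noting is demotion instead of deletion: an evicted block lands in the warm middle tier, so a later `prestage` call can pull it back without recomputing the prefix or reading from slow storage.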

Beyond the hardware layer, the logical architecture of agent memory is also undergoing a profound transformation. Basic Retrieval-Augmented Generation (RAG), which retrieves relevant documents from flat vector storage, proves insufficient for complex, multi-step agentic reasoning. To overcome the limitations of flat vector search, a new generation of systems uses hierarchical memory architectures that organize information into layers of increasing abstraction. Frameworks such as H-MEM arrange memory into layers such as Domain, Category, Memory Trace, and Episode, and route each query from coarse layers to fine ones instead of brute-force scanning the entire knowledge base. Often combined with knowledge graphs, this structured approach helps maintain factual coherence and supports multi-hop, temporal reasoning, which is essential for an agent that must maintain state across days or weeks. Knowledge graphs in particular address the inherent fuzziness of vector similarity by establishing explicit relationships between entities, making them especially valuable for high-stakes applications in regulated industries such as finance and healthcare, where factual grounding must be exact.[1][4][5][6]
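The coarse-to-fine routing idea can be sketched as a beam search down a memory tree: at each level only the children of the surviving nodes are scored against the query. This is a simplified illustration of the general technique, not H-MEM's actual index-encoding mechanism; the node labels, the 2-D embeddings, the beam width, and the assumption that all leaves sit at the same depth are hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class MemoryNode:
    """One node in a hypothetical Domain -> Category -> Trace -> Episode tree."""
    label: str
    embedding: list
    children: list = field(default_factory=list)


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def route(root, query, beam=1):
    """Descend level by level, keeping only the `beam` best children each step.

    Assumes every leaf is at the same depth. Returns (leaves, comparisons),
    where comparisons counts how many node embeddings were scored.
    """
    frontier, comparisons = [root], 0
    while any(node.children for node in frontier):
        candidates = [child for node in frontier for child in node.children]
        comparisons += len(candidates)
        candidates.sort(key=lambda c: cosine(query, c.embedding), reverse=True)
        frontier = candidates[:beam]
    return frontier, comparisons


# Toy tree with made-up 2-D embeddings: first axis ~ "work", second ~ "personal".
root = MemoryNode("root", [0.0, 0.0], [
    MemoryNode("domain:work", [1.0, 0.0], [
        MemoryNode("category:projects", [1.0, 0.1], [
            MemoryNode("trace:launch", [0.9, 0.2], [
                MemoryNode("episode:deadline moved", [0.95, 0.1]),
                MemoryNode("episode:budget approved", [0.8, 0.3]),
            ]),
        ]),
    ]),
    MemoryNode("domain:personal", [0.0, 1.0], [
        MemoryNode("category:travel", [0.1, 1.0], [
            MemoryNode("trace:trip", [0.2, 0.9], [
                MemoryNode("episode:flight booked", [0.1, 0.95]),
            ]),
        ]),
    ]),
])

leaves, comparisons = route(root, query=[1.0, 0.05])
print(leaves[0].label, comparisons)
```

In a tree this small, routing actually scores more nodes than a flat scan of three episodes would. The payoff appears at scale: with a branching factor of 10, four levels, and a beam of 1, routing scores 10 nodes per level (40 in total) instead of comparing the query against all 10,000 episodes.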

The ultimate goal of this memory engineering revolution is to give agents cognitive capabilities akin to human memory, supporting stateful interactions, task continuity, and behavioral adaptation. This includes selective forgetting mechanisms that prune low-value, accumulated noise to prevent context distraction and manage costs, treating memory as a strategic, bounded resource. For multi-agent systems, the architectural challenge extends to shared memory spaces with procedural memory transfer, which prevent computational waste from duplicated work and ensure that all coordinating agents maintain a consistent view of the environment they operate in. Across the AI industry, "memory engineering" is increasingly recognized as being as critical as prompt engineering for designing scalable, enterprise-grade AI systems, marking the rise of "memory-first" architecture as the foundational pillar for the next generation of intelligent agents. The future of AI scaling therefore depends not only on ever-larger models but on smarter, more persistent, context-aware memory systems that can efficiently manage the vast, evolving information space of an autonomous agent.[1][7][8][9][10][3]
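Selective forgetting needs some notion of a memory's value. The sketch below shows one simple policy among many: score each memory by an importance weight, decay that score exponentially with time since last access, give a small bonus to memories that keep being retrieved, then keep only the top entries within a fixed budget. The scoring formula, the 72-hour half-life, the budget, and the `min_score` threshold are all assumptions made for illustration; the article does not specify a particular mechanism.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    importance: float   # 0..1, assumed to be assigned when the memory is written
    last_access: float  # timestamp in hours
    access_count: int = 0


def retention_score(m, now, half_life_hours=72.0):
    """Hypothetical value score: importance x exponential recency decay x reuse bonus."""
    decay = 0.5 ** ((now - m.last_access) / half_life_hours)  # halves every half-life
    return m.importance * decay * (1.0 + math.log1p(m.access_count))


def forget(memories, now, budget, min_score=0.05):
    """Keep at most `budget` memories, dropping anything scoring below `min_score`."""
    scored = sorted(memories, key=lambda m: retention_score(m, now), reverse=True)
    return [m for m in scored[:budget] if retention_score(m, now) >= min_score]


mems = [
    Memory("user prefers metric units", importance=0.9, last_access=0.0, access_count=5),
    Memory("small talk about the weather", importance=0.1, last_access=90.0),
    Memory("tool call returned HTTP 500", importance=0.4, last_access=0.0),
    Memory("deadline moved to Friday", importance=0.8, last_access=96.0),
]
kept = forget(mems, now=100.0, budget=2)
print([m.text for m in kept])
```

Here the frequently retrieved preference survives despite its age, while the stale error log and the recent but unimportant small talk are pruned. The budget caps memory cost regardless of how much history accumulates.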