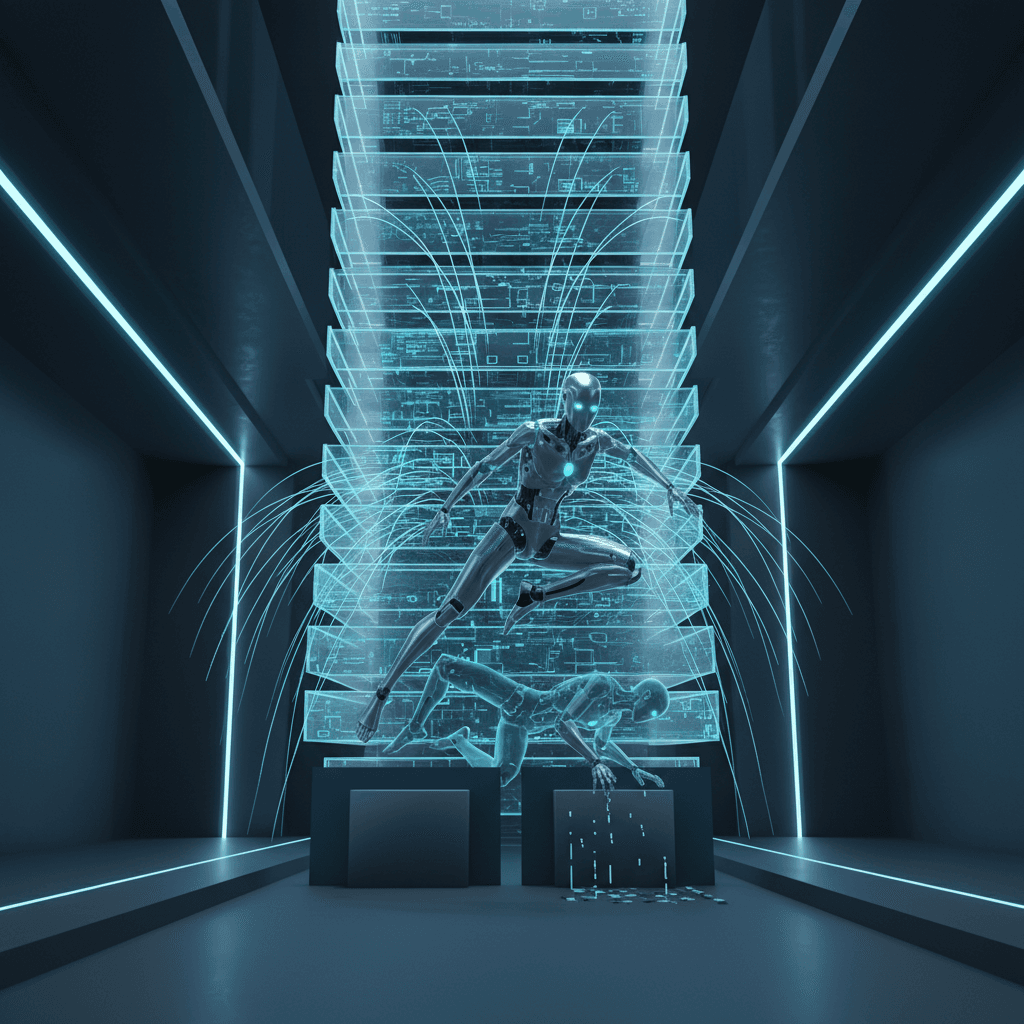

Scaling reinforcement learning to 1,024 layers transforms stumbling robots into agile parkour masters

Scaling neural networks to 1,024 layers yields performance gains of up to 50x, allowing autonomous agents to master parkour through self-supervised learning.

March 15, 2026

For years, the reinforcement learning (RL) industry has operated under a quiet consensus: when it comes to the neural networks powering autonomous agents, shallow is better. While the world of large language models and computer vision has been transformed by a scaling revolution that pushes network depths into the hundreds or even thousands of layers, reinforcement learning has remained largely stagnant.[1][2][3][4] Most state-of-the-art RL agents today still rely on simple multilayer perceptrons consisting of a mere two to five layers.[5] The prevailing wisdom suggested that because RL provides such sparse feedback compared to the vast amount of data available in supervised learning, deeper networks would only lead to instability, vanishing gradients, and diminishing returns.

However, a landmark study from researchers at Princeton University and the Warsaw University of Technology has fundamentally challenged this paradigm. By scaling network depth up to 1,024 layers, more than two orders of magnitude deeper than the two-to-five-layer networks in common use, the team achieved performance gains ranging from 2x to over 50x.[6][7][4] More importantly, the researchers observed a qualitative shift in how these agents solve problems.[2][8][3][4][9] Agents that previously struggled to take a few steps or simply collapsed in the face of obstacles began to exhibit sophisticated, parkour-like behaviors, navigating complex environments with a level of agility and strategic foresight that was previously thought to be unattainable through raw scaling alone.[1]

The core of this breakthrough lies in shifting the fundamental training objective of the reinforcement learning agent.[10] Traditional RL is typically driven by a reward signal, such as a score in a game or a binary success marker in a robotics task. This reward-based approach creates a bottleneck; the ratio of feedback to the number of parameters in a deep network is often too low to allow the system to converge. To solve this, the research team turned to Contrastive Reinforcement Learning (CRL).[9] Unlike traditional methods, CRL is a self-supervised approach where the agent learns representations of its environment by predicting the relationship between states, actions, and future outcomes without requiring human-engineered rewards. This self-supervised signal provides a much richer and more continuous stream of data, allowing the network to exploit the representational capacity of massive depth without the instability that typically plagues deep reward-driven systems.
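The contrastive objective described above can be illustrated with a minimal sketch of an InfoNCE-style loss. This is not the study's implementation: the dot-product similarity, the function names, and the hand-picked two-dimensional embeddings are all assumptions made for illustration. In a real agent the embeddings would come from the deep encoders, with one encoder for state-action pairs and one for future states.

```python
import math


def dot(u, v):
    """Dot-product similarity between two embedding vectors (an assumed choice)."""
    return sum(a * b for a, b in zip(u, v))


def log_softmax(row):
    """Numerically stable log-softmax over one row of logits."""
    m = max(row)
    lse = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - lse for x in row]


def infonce_loss(sa_embeddings, future_embeddings):
    """Row i of the logits compares state-action pair i with every future state.

    The future state actually reached from pair i sits at column i (the positive);
    every other column in the batch acts as a negative.
    """
    logits = [[dot(sa, fut) for fut in future_embeddings] for sa in sa_embeddings]
    n = len(logits)
    return -sum(log_softmax(logits[i])[i] for i in range(n)) / n


# Hypothetical embeddings: matched pairs point the same way, mismatched ones do not.
sa = [[4.0, 0.0], [0.0, 4.0]]
matched = [[4.0, 0.0], [0.0, 4.0]]
mismatched = [[0.0, 4.0], [4.0, 0.0]]
print(infonce_loss(sa, matched) < infonce_loss(sa, mismatched))
```

Every transition in a batch supplies a positive pair and a set of negatives, so the learning signal does not depend on how often the environment hands out a reward.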

To make these 1,024-layer networks functionally viable, the researchers incorporated architectural stabilizers common in other areas of deep learning but often omitted in robotics research. These included residual connections, which allow gradients to flow through the network more easily, as well as layer normalization and Swish activation functions. While these components are standard in vision and language models, their integration into RL agents proved to be the key that unlocked the benefits of depth. Interestingly, the team discovered that while the "critic" networks (the components of the agent that evaluate the value of certain states) could be scaled to the full 1,024 layers, the "actor" networks responsible for executing actions were more stable when capped at 512 layers.[5] This asymmetric configuration gave the agent a very deep model for evaluating its environment while keeping its movement policy stable.
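A rough sketch of how these three stabilizers fit together in one residual block follows. The ordering (dense layer, then layer normalization, then Swish, with the skip connection added at the end of the block), the four units per block, the width of 8, and all function names are assumptions chosen for illustration. The article does not specify the exact layout, and the layer normalization here omits learned scale and shift parameters.

```python
import math
import random


def swish(x: float) -> float:
    """Swish (SiLU) activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def layer_norm(v, eps: float = 1e-5):
    """Normalize a vector to zero mean and unit variance (no learned scale or shift)."""
    mean = sum(v) / len(v)
    var = sum((x - mean) ** 2 for x in v) / len(v)
    return [(x - mean) / math.sqrt(var + eps) for x in v]


def dense(v, weights, bias):
    """Fully connected layer: one output per row of `weights`."""
    return [sum(w * x for w, x in zip(row, v)) + b for row, b in zip(weights, bias)]


def residual_block(v, units):
    """Apply each (weights, bias) unit as dense -> layer norm -> Swish, then add the input back."""
    h = v
    for weights, bias in units:
        h = [swish(x) for x in layer_norm(dense(h, weights, bias))]
    return [x + y for x, y in zip(v, h)]  # skip connection


def random_units(width, n_units, rng):
    """Hypothetical helper: square weight matrices with small Gaussian entries, zero biases."""
    scale = 1.0 / math.sqrt(width)
    return [
        ([[rng.gauss(0.0, scale) for _ in range(width)] for _ in range(width)], [0.0] * width)
        for _ in range(n_units)
    ]


# Stack 64 blocks of four units each (256 dense layers) and check the output stays finite.
rng = random.Random(0)
x = [rng.gauss(0.0, 1.0) for _ in range(8)]
h = x
for _ in range(64):
    h = residual_block(h, random_units(8, 4, rng))
print(all(math.isfinite(value) for value in h))
```

Because each block adds a normalized, bounded update to its input, the signal passing through hundreds of layers neither vanishes nor explodes in the forward pass, which is the property the skip connections and normalization are meant to provide.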

The results of this scaling were most visible in the "Humanoid U-Maze" environment, a challenging simulation where a humanoid agent must navigate a complex maze and overcome structural obstacles. At a depth of four layers, the agent exhibited the "face-planting" behavior common in modern RL; it would stumble, fail to perceive the wall in its path, and ultimately collapse. However, as the researchers increased the depth, they witnessed the emergence of "critical depth" thresholds.[8][5][9] At certain depths, the agent's performance did not just improve linearly; it jumped.[8][10][5][11][12] A 256-layer agent, for example, did not just walk better than its 64-layer counterpart; it learned an entirely new behavior: vaulting acrobatically over walls to reach its goal faster. This suggests that depth provides the agent with the "abstract reasoning" capacity needed to understand complex spatial relationships and temporal sequences, effectively moving from simple locomotion to purposeful navigation and parkour.

In quantitative terms, the gains were staggering. On robotic manipulation and locomotion tasks, the deep agents consistently outperformed their shallow counterparts by massive margins.[12] In long-horizon navigation tasks, such as the Ant Big Maze, the deeper models achieved 20x improvements in success rates. On more complex humanoid-based tasks, the performance gain reached 50x.[5][12] The researchers also noted that scaling depth was far more parameter-efficient than scaling width.[5] Increasing the width of a network (the number of neurons per layer) grows the parameter count quadratically, since each hidden-to-hidden weight matrix has width × width entries, which is computationally expensive and often leads to overfitting. In contrast, adding layers of a fixed width grows the parameter count only linearly while providing superior generalization capabilities. The 1,024-layer agents were not just memorizing the mazes they were trained in; they were significantly better at reaching novel goals they had never encountered before, which suggests that depth is a primary driver of generalization in reinforcement learning.
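The difference between quadratic and linear parameter growth can be checked with simple arithmetic. The sketch below counts weights and biases in a plain multilayer perceptron; the input size of 32, output size of 8, and baseline of four hidden layers of width 256 are hypothetical values chosen for illustration, not figures from the study.

```python
def mlp_params(width: int, depth: int, in_dim: int, out_dim: int) -> int:
    """Count weights and biases in an MLP with `depth` hidden layers of size `width`."""
    if depth < 1:
        raise ValueError("depth must be at least 1")
    params = in_dim * width + width                    # input -> first hidden layer
    params += (depth - 1) * (width * width + width)    # hidden -> hidden layers
    params += width * out_dim + out_dim                # last hidden layer -> output
    return params


base = mlp_params(width=256, depth=4, in_dim=32, out_dim=8)
wider = mlp_params(width=512, depth=4, in_dim=32, out_dim=8)
deeper = mlp_params(width=256, depth=8, in_dim=32, out_dim=8)

print(base, wider, deeper)  # 207880 808968 471048
print(round(wider / base, 2), round(deeper / base, 2))
```

In this toy setting, doubling the width nearly quadruples the parameter count, while doubling the depth adds only a little more than a factor of two.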

The implications for the AI and robotics industries are profound. For decades, the primary hurdle in robotics has been the need for intensive human supervision, manual reward engineering, and millions of trials to learn even simple tasks. If reinforcement learning can truly scale with depth in a self-supervised manner, it opens the door to a "Foundation Model" for robotics. Much like GPT-4 was trained on vast amounts of text to understand the nuances of language, future robotic agents could be trained on massive amounts of raw sensory data using these ultra-deep networks. Such agents would not need to be told how to walk or jump; instead, they would learn the physics and strategies of their environment through self-supervision, eventually developing the complex motor skills required for real-world deployment in logistics, search and rescue, or household assistance.

Furthermore, this research signals a shift in focus from algorithm design to architectural scaling within the RL community. For years, researchers have sought the perfect "loss function" or a more efficient optimizer to improve agent performance. This study suggests that the architecture itself might have been the missing link. By adopting the same scaling laws that powered the LLM boom, reinforcement learning may finally be entering its own "scaling moment." The transition from agents that face-plant to agents that perform parkour represents more than just a clever simulation; it is a proof of concept that the path to general-purpose artificial intelligence involves giving these systems the structural depth they need to perceive, understand, and interact with the world in all its complexity.

As the industry moves forward, the focus will likely turn to the "distillation" of these deep agents. While a 1,024-layer network is highly effective for training, it is computationally heavy for real-time inference on a mobile robot. The next phase of research will likely involve "deep teacher, shallow student" paradigms, where the high-level strategies and parkour skills learned by these massive models are distilled into smaller, more efficient networks that can run on low-power hardware. Regardless of the hardware constraints, the fundamental discovery remains: depth is not a liability in reinforcement learning, but its greatest untapped resource. The era of the shallow agent is likely coming to an end, replaced by deep, self-supervised systems that can move, think, and adapt with human-like agility.

Sources

[1]

[2]

[4]

[5]

[7]

[8]

[10]

[11]

[12]