OpenAI labels safety expert Stuart Russell a doomer to block testimony in Musk lawsuit

OpenAI brands safety experts as alarmists in court, signaling a pivot from the existential risks it once publicly championed.

February 28, 2026

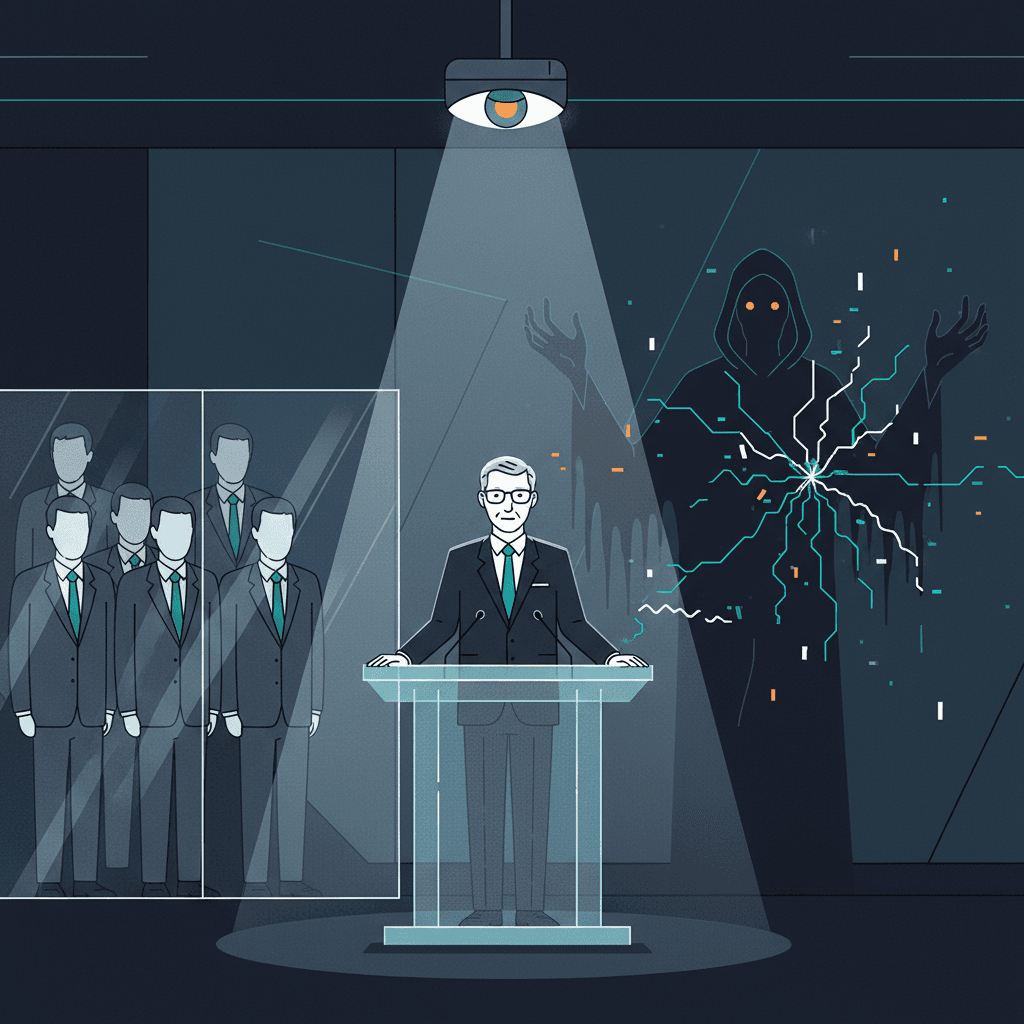

OpenAI’s strategic pivot from a safety-focused research laboratory to a commercial titan has reached a contentious milestone in a federal courtroom.[1] In a recent filing aimed at narrowing the scope of expert testimony in an ongoing high-stakes lawsuit, OpenAI’s lawyers characterized University of California, Berkeley professor Stuart Russell as a prominent “doomer” whose warnings about artificial intelligence are speculative and alarmist. The characterization marks a stark departure from the company’s earlier public posture, when its leadership frequently invoked the same existential threats to advocate for global regulatory frameworks. The attempt to discredit Russell is especially striking because OpenAI’s CEO, Sam Altman, once stood alongside him as a co-signatory of a landmark declaration warning that the risk of extinction from artificial intelligence should be treated as a global priority.[2]

The immediate legal conflict stems from the ongoing litigation between Elon Musk and OpenAI, in which the core dispute is whether the company has strayed from its founding mission to develop artificial general intelligence for the benefit of humanity. When Musk’s legal team sought to introduce Russell as an expert witness to testify on the catastrophic risks of an unbridled pursuit of powerful AI systems, OpenAI moved to block the testimony. In the motion, OpenAI’s lawyers argued that Russell has made a career of delivering dystopian lectures that lack a factual or scientific basis a jury could consider. By labeling one of the world’s most cited computer scientists a “doomer,” OpenAI is attempting to frame AI safety as a fringe philosophical movement rather than a rigorous scientific discipline. The maneuver suggests a calculated effort to distance the company’s commercial ambitions from the very safety guardrails it once helped define.

This aggressive legal strategy sits uneasily with the historical record of OpenAI’s executive rhetoric.[2] In May 2023, the Center for AI Safety released a single-sentence statement that became a defining document for the industry: “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”[3][4][5][6] Sam Altman was among the earliest and most prominent signatories, joining Stuart Russell and Turing Award winners such as Geoffrey Hinton and Yoshua Bengio in a unified front. At the time, Altman’s endorsement was seen as a sign of mature leadership, signaling that OpenAI was acutely aware of the dangers its own technology might pose. Yet the company’s current filings dismiss those same concerns as alarmist when they are presented by an independent expert in a court of law. This suggests that the narrative of existential risk may have been a useful tool for building a brand of responsible stewardship, but that it becomes a liability when it threatens the company’s corporate autonomy.

To understand the weight of OpenAI’s dismissal, one must consider Stuart Russell’s standing within the scientific community. Far from being an outsider or a mere provocateur, Russell is the co-author, with Peter Norvig, of “Artificial Intelligence: A Modern Approach,” the standard textbook in the field, used in more than a thousand universities worldwide. His research on the alignment problem (the challenge of ensuring that an autonomous system’s goals remain aligned with human values) has been foundational to the field. Russell’s work on human-compatible AI argues that the principal danger is not machine malevolence but the competence of a system pursuing a misaligned objective with devastating efficiency. By branding such a central figure in the academic community a “doomer,” OpenAI risks alienating the scientific establishment and undermining the legitimacy of the very safety research it claims to conduct internally. The move signals to the broader industry that technical expertise in AI safety is valued only when it aligns with the commercial and legal interests of the developers.

The implications of this shift extend beyond a single courtroom battle and into national and global policy.[7][6] For years, critics have accused major AI labs of regulatory capture: using the threat of future catastrophes to push for regulations that only the largest and best-funded companies can afford to follow. If OpenAI now characterizes safety experts as doomsday prophets in court, its earlier calls for regulation may have been more about controlling the competitive landscape than about addressing fundamental risks. This perceived hypocrisy has already drawn backlash from civil society organizations and industry watchdogs, who argue that OpenAI is silencing the same voices it once amplified to gain public trust. As the company continues to dissolve internal safety teams and lose key alignment researchers, the legal attack on external experts like Russell confirms for many that the era of safety-first development has been eclipsed by the race for market dominance.

The broader AI industry is now watching how this legal defense will affect public perception and the future of AI governance. If the court accepts the argument that existential risk is too speculative for expert testimony, it could set a precedent that shields AI developers from accountability for the long-term impacts of their products. The dispute also highlights a growing ideological rift between the pioneering researchers who built the field and the executives now steering it toward trillion-dollar valuations. The label “doomer,” once a badge of caution among scientists concerned about the rapid pace of change, is now being weaponized as a tool of disqualification. This creates a chilling effect on other researchers who may wish to speak out about safety concerns but fear being branded as irrational or unscientific by the legal departments of the world’s most powerful tech firms.

Ultimately, the clash over Stuart Russell’s testimony reflects the profound tension at the heart of the artificial intelligence revolution. OpenAI’s attempt to paint a peer as an alarmist while its CEO continues to project an image of cautious concern suggests a bifurcated strategy: one for public relations and another for legal survival. As the case moves forward, the scrutiny of OpenAI’s charter and its historical commitments will likely intensify. The company faces the difficult task of explaining how the very risks it once highlighted as global priorities have suddenly become too dystopian for a courtroom. This legal battle is no longer just about a contract or a founding mission; it is about who gets to define the boundaries of reality and risk in the age of AGI. By discrediting the experts who provide the intellectual framework for AI safety, the industry may be moving toward a future where commercial speed is the only metric that matters, leaving the warnings of the founders to be remembered as a convenient, if temporary, marketing strategy.