OpenAI hits 10-gigawatt compute milestone years early to lead the global AI race

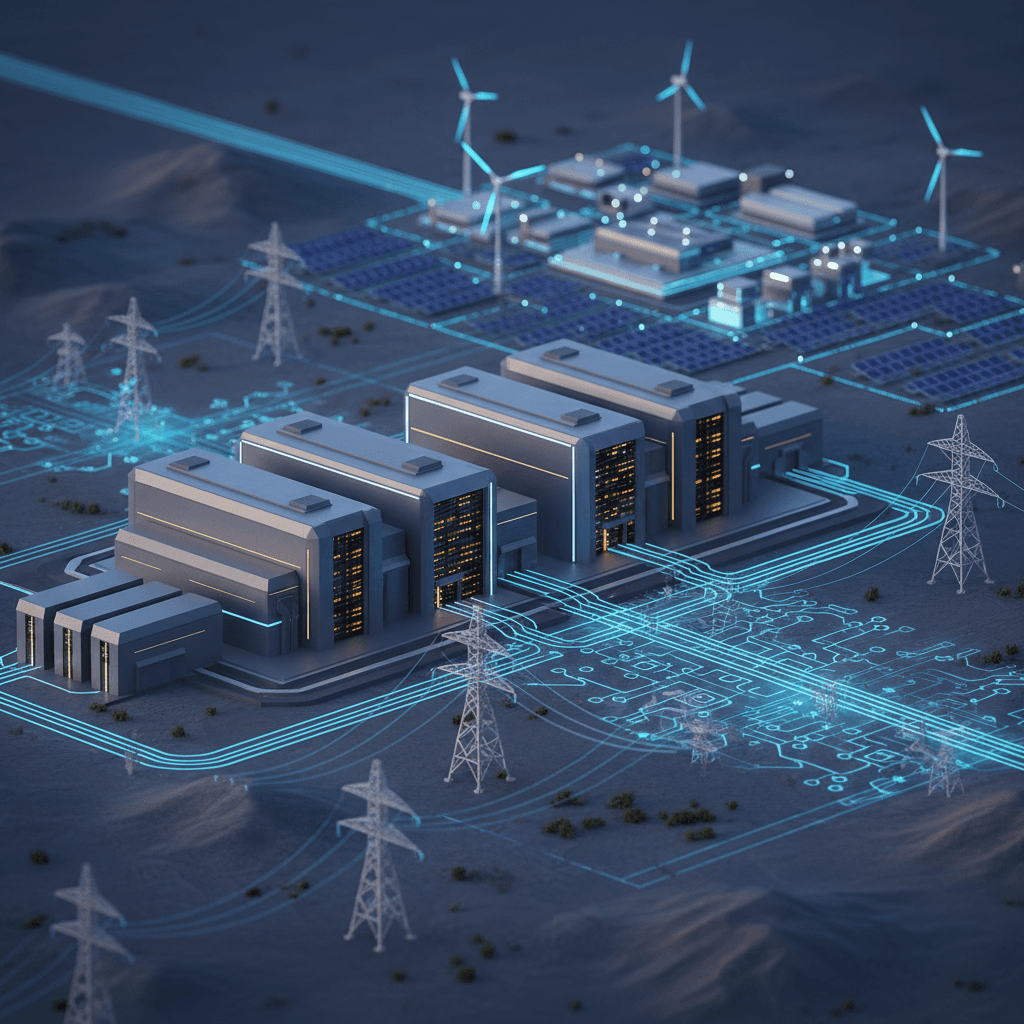

By reaching its 10-gigawatt goal early, OpenAI shifts the AI race toward massive energy procurement and industrial-scale data center construction.

April 30, 2026

OpenAI has announced that it has reached its goal of securing 10 gigawatts of artificial intelligence compute capacity in the United States, achieving the milestone several years ahead of its original target.[1][2][3] This achievement represents a massive acceleration in the physical build-out of the infrastructure required to train and run next-generation AI models.[4][5][6] Originally, the company had projected that reaching this level of power capacity would take until nearly the end of the decade.[4][3] By hitting the mark early, OpenAI has signaled a fundamental shift in the global AI race, moving the competition beyond software benchmarks and into the realm of industrial-scale energy procurement and data center construction.[7][4] The scale of the 10-gigawatt figure is difficult to overstate: it is enough electricity to power approximately 7.5 million homes, or roughly the peak summer demand of a city the size of New York. This rapid scaling underscores the company’s belief that the future of artificial intelligence is inextricably linked to the availability of massive, dedicated power sources and high-performance hardware.

The early realization of this compute goal was made possible through a series of unprecedented multi-billion-dollar partnerships that have effectively reshaped the technology landscape. Central to this strategy is the Stargate initiative, a massive infrastructure venture originally conceived as a collaborative effort involving major financial and technological players.[8][3] While it began as a targeted project, Stargate has evolved into an overarching framework for the company’s global data center strategy, drawing in hundreds of billions of dollars in investment.[2][3] Key partners include Oracle and SoftBank, the latter of which has played a critical role in financing the rapid expansion.[3][9] Furthermore, a landmark agreement with NVIDIA has secured a pipeline of millions of advanced GPUs, with a dedicated investment of up to $100 billion specifically aimed at deploying at least 10 gigawatts of high-performance systems.[10][5] This partnership focuses on the latest hardware platforms, including the next-generation Vera Rubin architecture, which is designed to handle the massive training requirements of future models aiming toward superintelligence.

Beyond its traditional hardware alliances, the company has significantly diversified its infrastructure portfolio by deepening ties with Amazon. In a strategic pivot that follows the relaxation of an exclusive agreement with its long-term backer, Microsoft, OpenAI has secured two gigawatts of its total capacity through a massive partnership with Amazon Web Services.[2] This deal includes a commitment to spend $100 billion on cloud resources over the next eight years and integrates specialized hardware such as Amazon’s custom Trainium chips.[2] By broadening its base of providers, the company is building a more resilient supply chain for its compute needs while simultaneously pushing the boundaries of custom silicon development. These collaborations are not merely about leasing server space; they represent a co-optimization of hardware and software roadmaps, where data center designs are being tailored specifically for the unique workloads of frontier AI models.

The physical footprint of this 10-gigawatt network is concentrated across several key regions in the United States, with a flagship site established in Abilene, Texas.[6][11] This location serves as the model for the "AI factory" concept, where massive clusters of GPUs are powered by dedicated energy sources. Other significant developments are underway in states such as New Mexico, Wisconsin, Michigan, and Ohio. However, the build-out has not been without significant logistical and regulatory challenges.[3] In some regions, the company has had to moderate its pace or even abandon specific expansion sites due to skyrocketing energy costs and stringent local regulatory constraints.[3] The sheer volume of power required is so great that it has forced utilities and policymakers to rethink grid resilience. In Texas alone, the projected power draw from these facilities could eventually represent a substantial percentage of the entire state's grid capacity, leading to complex negotiations over energy efficiency and the potential for the company to fund its own generation and transmission infrastructure to avoid raising prices for local residents.

From a competitive standpoint, securing this level of compute capacity functions as a formidable strategic moat.[4] In the current industry environment, the ability to train a more advanced model is largely a function of how much compute can be applied to the problem. By locking in 10 gigawatts of capacity through long-term contracts and massive capital commitments, OpenAI is attempting to ensure that it maintains a structural advantage over its rivals.[4] This "compute-first" philosophy suggests that the next phase of AI development will be determined by who owns the most physical infrastructure rather than by who has the cleverest algorithms alone. The financial obligations underlying these deals are staggering, with costs estimated at tens of billions of dollars per gigawatt.[4] While much of this capital is provided by partners like NVIDIA and SoftBank, the commercial weight of these agreements ties the company’s future directly to its ability to monetize increasingly powerful models and maintain its lead in the enterprise market.

The implications for the broader energy and semiconductor industries are equally profound.[7] The drive toward 10 gigawatts has already begun to redefine the semiconductor landscape, elevating companies that specialize in Ethernet networking, energy-efficient rack design, and custom silicon. This includes significant collaboration with Broadcom to develop custom chips and networking solutions that can support the high-density requirements of modern data centers.[4] As these facilities consume electricity at the scale of entire industrial complexes, they are driving a convergence between the tech sector and the energy sector.[7] There is an increasing focus on finding sustainable ways to power these "intelligence factories," as the environmental impact of such massive consumption remains a point of intense public and regulatory scrutiny. The transition to renewable energy sources and the development of more efficient cooling technologies have become mission-critical components of the overall infrastructure strategy.

Looking ahead, the 10-gigawatt milestone is seen as only the beginning.[12] Internal projections suggest an even more audacious long-term target of 250 gigawatts of capacity within the next decade. Such a scale would represent a significant portion of the total power consumption of the United States and would likely require a total overhaul of how energy is generated and distributed. The company’s leadership has framed this expansion as essential for the "Age of Abundant Intelligence," arguing that the economic and societal benefits of advanced AI will far outweigh the massive costs and logistical hurdles of building the infrastructure. By hitting its initial target years ahead of schedule, the company has demonstrated an aggressive commitment to this vision, forcing the rest of the industry to keep pace with a build-out that is unprecedented in the history of modern computing. This race for gigawatts has become the new frontier of technology, where the winners will be those who can most effectively bridge the gap between digital intelligence and the physical realities of power and steel.

Sources

[2]

[3]

[6]

[9]

[10]

[12]