NVIDIA Launches Open Source Agent Toolkit to Securely Deploy Autonomous AI Across the Enterprise

NVIDIA’s new open-source Agent Toolkit addresses the enterprise trust crisis by providing secure infrastructure for autonomous digital workforces.

March 19, 2026

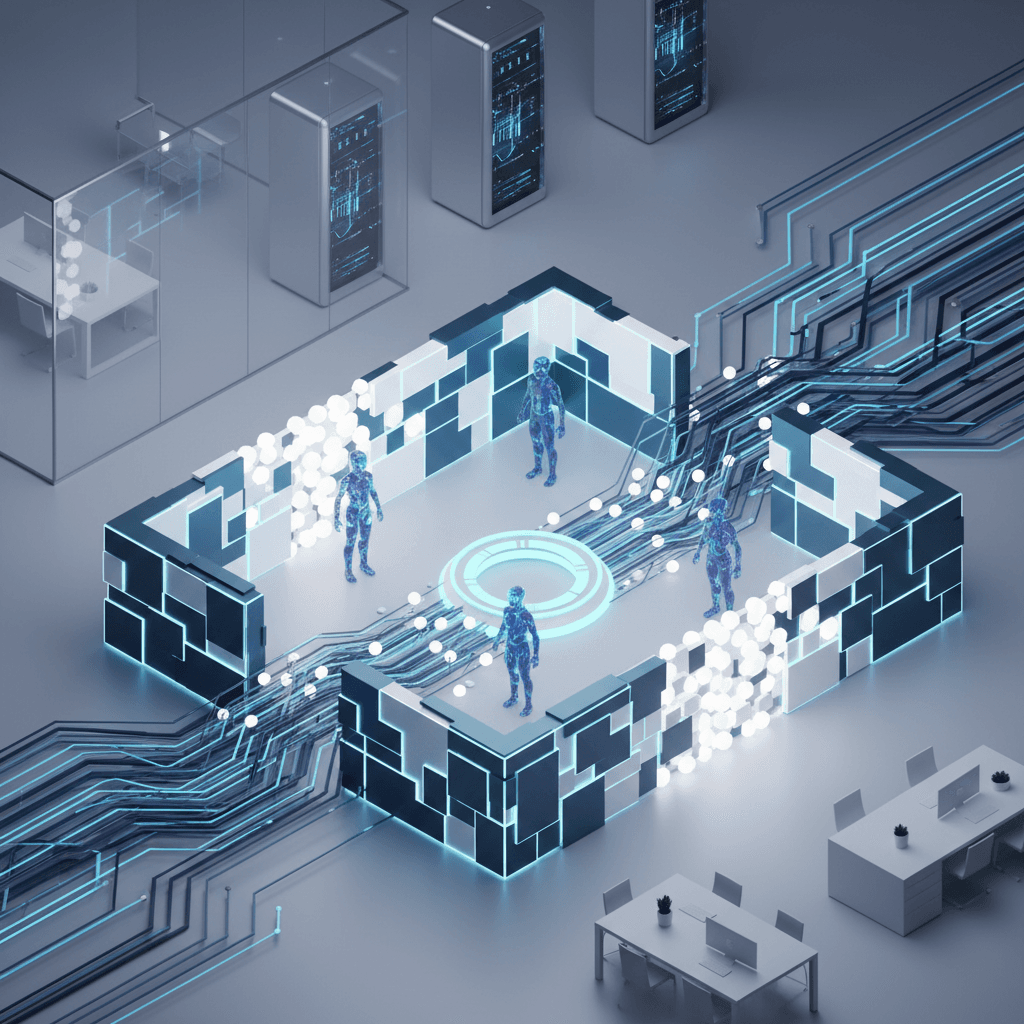

The landscape of enterprise artificial intelligence shifted fundamentally at the GTC 2026 conference in San Jose, where NVIDIA Chief Executive Officer Jensen Huang addressed the primary obstacle preventing corporations from fully embracing autonomous systems: the crisis of trust. While large language models have spent years proving their reasoning capabilities in controlled environments, the transition to autonomous agents capable of independent action within corporate networks has been stalled by concerns over data leakage, legal liability, and unpredictable behavior. Huang’s response to this industry-wide hesitation is the NVIDIA Agent Toolkit, an open-source software stack unveiled on March 16 that aims to standardize the safety protocols required to let AI agents work autonomously across internal enterprise systems.[1] By providing a modular architecture that separates an agent’s reasoning from its execution environment, NVIDIA is attempting to provide the missing infrastructure layer that converts experimental AI into a reliable digital workforce.

At the center of this new initiative is NVIDIA OpenShell, an open-source runtime designed to serve as a high-security perimeter for what NVIDIA terms claws—individual, specialized AI agents.[2][3][4][1] In the current enterprise environment, most AI interactions are ephemeral and human-gated, meaning a person must review every output before it is acted upon. The Agent Toolkit seeks to move beyond this manual oversight by using OpenShell to enforce policy-based security, network isolation, and privacy guardrails at the software level. This runtime environment functions as a sophisticated sandbox, ensuring that an agent tasked with resolving a customer service ticket or optimizing a supply chain cannot accidentally access sensitive payroll data or exceed its predefined administrative privileges. By creating a hardware-rooted enforcement plane, NVIDIA allows IT departments to define exactly what an agent can see and where it can send information, effectively treating AI agents as managed employees with strictly controlled access credentials rather than unmonitored scripts.
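The deny-by-default access model described above can be sketched in a few lines of Python. This is a minimal illustration, not OpenShell's actual API or policy schema: the `AgentPolicy` class, the `can_read` and `can_send` helpers, and the resource and host names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical allowlist policy for one agent (not OpenShell's schema)."""
    name: str
    readable_resources: frozenset = field(default_factory=frozenset)
    allowed_hosts: frozenset = field(default_factory=frozenset)


def can_read(policy: AgentPolicy, resource: str) -> bool:
    # Deny by default: only explicitly listed resources are readable.
    return resource in policy.readable_resources


def can_send(policy: AgentPolicy, host: str) -> bool:
    # Deny by default: outbound traffic is limited to listed hosts.
    return host in policy.allowed_hosts


# Hypothetical policy for a customer service agent.
support_agent = AgentPolicy(
    name="support-ticket-agent",
    readable_resources=frozenset({"tickets", "knowledge_base"}),
    allowed_hosts=frozenset({"helpdesk.internal.example"}),
)

print(can_read(support_agent, "tickets"))   # listed resource
print(can_read(support_agent, "payroll"))   # unlisted resource
```

Under this sketch, an agent holding the support policy can read tickets but is refused payroll data, because anything not on the allowlist is rejected.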

To further bridge the gap between consumer-facing AI and regulated corporate environments, NVIDIA also introduced NemoClaw. This secure software stack is designed to layer enterprise-grade privacy and governance onto OpenClaw, which has emerged as a dominant open-source framework for personal AI agents. According to Huang, OpenClaw has become the operating system for personal intelligence, but it lacks the rigorous auditing and data-protection features necessary for the boardroom. NemoClaw addresses this by integrating a privacy router that intercepts and scrubs sensitive internal data before it is sent to external frontier models for processing. This hybrid approach allows a company to use the reasoning power of massive cloud-based models while ensuring that the underlying proprietary data never leaves the corporate network. To validate this security model, NVIDIA has partnered with cybersecurity leaders including Cisco, CrowdStrike, and Microsoft Security so that OpenShell and NemoClaw are compatible with existing enterprise defense platforms. The partnerships signal a move toward an integrated security ecosystem in which AI safety is not a standalone product but a fundamental feature of the corporate network.
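To make the privacy router idea concrete, here is a minimal Python sketch of scrubbing a prompt before it leaves the network. It is not NemoClaw's implementation: the `scrub` and `restore` functions and the two regular expressions are hypothetical, and restoring the original values in the model's response is an assumption the announcement does not describe.

```python
import re

# Hypothetical patterns for illustration; real coverage would be far broader.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scrub(text: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with placeholders and return the mapping."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        def replace(match: re.Match, label: str = label) -> str:
            # Number placeholders in the order they are found.
            token = f"<{label}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(replace, text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into a response (assumed step)."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


prompt = "Email jane.doe@example.com about SSN 123-45-6789."
scrubbed, mapping = scrub(prompt)
print(scrubbed)
```

Only the scrubbed text would be sent to the external model; the mapping from placeholders to real values stays inside the network.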

The economic argument for this new toolkit is centered on NVIDIA AI-Q, an open agent blueprint for deep research that was also launched at the event.[5][1] One of the significant barriers to scaling AI agents has been the compounding cost of inference, especially when agents must perform hundreds of iterative steps to solve a complex research task. AI-Q utilizes a hybrid architecture that employs expensive frontier models only for high-level orchestration, while offloading the heavy lifting of data retrieval and analysis to NVIDIA’s open Nemotron-3 models. Internal benchmarks shared at GTC indicate that this hybrid strategy can reduce query costs by more than 50 percent without sacrificing accuracy.[1] In fact, AI-Q has already claimed top positions on the DeepResearch Bench leaderboards, outperforming several proprietary systems. For enterprise buyers who have been wary of the unpredictable consumption-based pricing associated with autonomous agents, the promise of halved operational costs combined with higher precision provides a compelling financial roadmap for deployment.
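The cost logic behind a hybrid architecture can be worked through with simple arithmetic. The Python sketch below uses entirely hypothetical numbers (step counts, token counts, and per-token prices); they are not NVIDIA's benchmark figures and serve only to show why routing most steps to a cheaper model lowers the bill.

```python
def task_cost(steps: int, tokens_per_step: int, price_per_million: float) -> float:
    """Cost in dollars of running `steps` model calls at a given token price."""
    return steps * tokens_per_step * price_per_million / 1_000_000


def hybrid_savings(
    total_steps: int,
    orchestration_steps: int,
    tokens_per_step: int,
    frontier_price: float,
    open_price: float,
) -> float:
    """Fraction saved versus sending every step to the frontier model."""
    baseline = task_cost(total_steps, tokens_per_step, frontier_price)
    hybrid = task_cost(orchestration_steps, tokens_per_step, frontier_price)
    hybrid += task_cost(total_steps - orchestration_steps, tokens_per_step, open_price)
    return 1 - hybrid / baseline


# Hypothetical workload: 200 steps of 2,000 tokens, 20 of them orchestration.
# Hypothetical prices: $10 (frontier) and $1 (open model) per million tokens.
savings = hybrid_savings(200, 20, 2_000, frontier_price=10.0, open_price=1.0)
print(f"{savings:.0%}")
```

With these assumed inputs the all-frontier run costs $4.00 and the hybrid run $0.76. The actual saving in any deployment depends on the real price gap and on how many steps truly require the frontier model.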

Hardware integration remains a critical component of the NVIDIA strategy, even as the company pivots toward open-source software. The Agent Toolkit is optimized for the newly announced Vera Rubin platform, which includes the Vera CPU and the Rubin GPU architecture.[6] These chips are purpose-built for the unique demands of agentic AI, which requires high-speed memory and low-latency processing to handle the continuous loops of perception and action that characterize autonomous behavior. While the software stack itself is designed to be hardware-agnostic, NVIDIA’s deep optimization ensures that the most efficient and secure versions of these agents will run on its own silicon. The Vera CPU, in particular, was highlighted as being specifically tuned for the orchestration tasks that dominate agentic workflows, providing the compute headroom needed to run security guardrails and privacy routers in real time without bottlenecking the agent’s performance.

The industry response to the toolkit has been immediate, with 17 major software platforms already announcing integration plans. Adobe is adopting the toolkit to power long-running creative and marketing agents that can autonomously manage campaigns while staying within brand and privacy guidelines. Salesforce is integrating Nemotron models and Agent Toolkit software into its Agentforce platform, allowing employees to use Slack as a primary conversational interface to manage a fleet of autonomous workers. Other partners, such as SAP, ServiceNow, and Siemens, are leveraging the AI-Q blueprint to automate complex industrial and business workflows that previously required constant human intervention. By enlisting these enterprise giants, NVIDIA is positioning itself as the foundational layer of a new era of software where every application is not just a tool for a human to use, but a platform for agents to inhabit and operate.

The broader implications for the AI industry are significant, as this move reflects a shift from a focus on model size to a focus on model utility and safety. As the era of the chatbot matures into the era of the agent, the competitive advantage is moving toward companies that can provide the most robust governance and the lowest cost of operation. NVIDIA’s decision to keep much of this stack open-source is a tactical choice to drive industry standards toward its own hardware ecosystem. By providing the blueprints and the runtimes for free, the company is lowering the barrier to entry for every developer while simultaneously ensuring that the resulting infrastructure is optimized for NVIDIA chips. This strategy effectively positions the company as the architect of the intelligent age, defining the rules of engagement for how AI will act in the real world.

Ultimately, the GTC 2026 announcements suggest that the technical capability of AI is no longer the bottleneck—the bottleneck is the human infrastructure required to manage it. Jensen Huang’s vision of an agentic inflection point rests on the idea that in the near future, every employee will manage a team of specialized AI agents.[1] If the NVIDIA Agent Toolkit succeeds in making those agents safe to deploy, it could trigger the most significant productivity shift in the corporate world since the introduction of the internet. By focusing on liability, data control, and cost, NVIDIA is addressing the practical realities of the modern enterprise, attempting to turn the unpredictable potential of autonomous AI into a manageable, scalable, and above all, safe corporate asset. The success of this initiative will be measured not by the complexity of the models themselves, but by the number of enterprises that finally feel comfortable letting their AI agents go to work without a human supervisor watching every move.