New study reveals AI benchmarks ignore 92 percent of the U.S. labor market

CMU and Stanford researchers find that technical benchmarks ignore 92 percent of the workforce, neglecting essential real-world social skills.

March 8, 2026

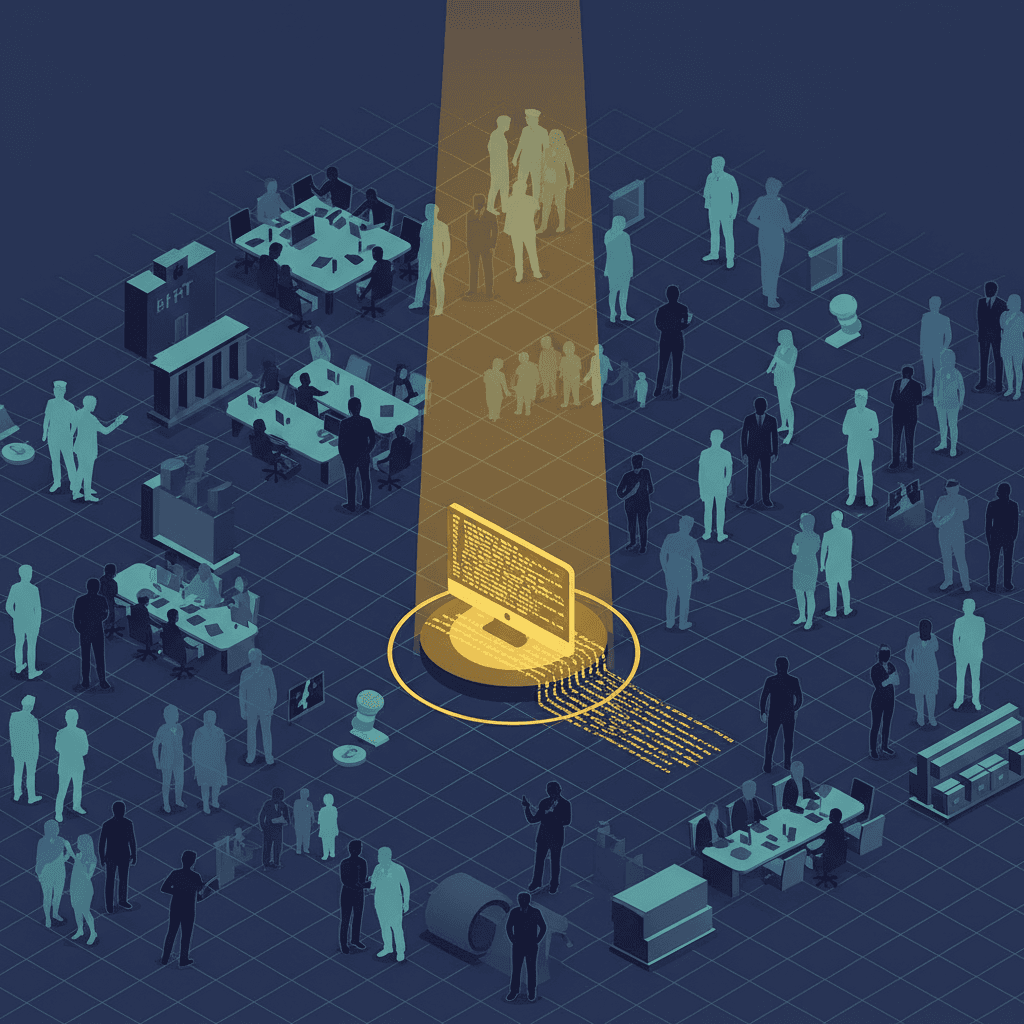

The current landscape of artificial intelligence development is characterized by a profound disconnect between the metrics used to measure success and the actual needs of the global economy. While the tech industry celebrates the rapid advancement of autonomous AI agents capable of writing sophisticated code or solving complex mathematical proofs, a groundbreaking study from researchers at Carnegie Mellon University and Stanford University suggests that these milestones are being achieved within a narrow, self-referential bubble. By systematically analyzing the largest available collection of AI agent benchmarks against the realities of the United States labor market, the research team found that 92 percent of the workforce is effectively invisible to the evaluation standards that drive the industry’s most ambitious projects. This misalignment suggests that while AI agents are becoming hyper-competent at a small subset of technical tasks, they remain largely untested and potentially unsuited for the vast majority of roles that sustain the modern economy.

The study examined 43 major agent benchmarks encompassing more than 72,000 individual tasks and used the U.S. government’s O*NET database to map these evaluations to 1,016 real-world occupations.[1] The results reveal lopsided priorities: researchers favor domains that offer methodological convenience over those that offer the greatest economic or societal impact.[2][1][3] Currently, agent development targets the computer and mathematical domain almost exclusively.[1][4][3][5] While this field is the primary driver of AI innovation, it represents only 7.6 percent of total U.S. employment.[1][5] Despite this small footprint, it commands the lion's share of research attention, with over 8,600 tasks dedicated to it in the analyzed benchmarks.[1] This focus has created a "golden cage" for AI development, in which progress is measured in highly digitized settings with deterministic outcomes that rarely reflect the messy, multi-dimensional nature of general labor.
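To make the comparison concrete, the mismatch between benchmark attention and employment can be sketched as a simple ratio per occupational domain. The snippet below is only an illustration, not the researchers' actual pipeline: the function names, domain labels, and task counts are hypothetical, and the only figure taken from the article is the 7.6 percent employment share for computer and mathematical work.

```python
from collections import Counter


def attention_vs_employment(task_domains, employment_share):
    """Compare each domain's share of benchmark tasks with its share of employment.

    task_domains: one domain label per benchmark task (hypothetical labels).
    employment_share: {domain: fraction of total employment}.
    Returns {domain: (task_share, employment_share, ratio)}.
    """
    counts = Counter(task_domains)
    total = sum(counts.values())
    report = {}
    for domain, emp in employment_share.items():
        task_share = counts.get(domain, 0) / total if total else 0.0
        # ratio > 1: more benchmark attention than the employment share suggests;
        # ratio < 1: less.
        ratio = task_share / emp if emp else float("inf")
        report[domain] = (task_share, emp, ratio)
    return report


def uncovered_employment(report):
    """Sum the employment share of domains that have no benchmark tasks at all."""
    return sum(emp for task_share, emp, _ in report.values() if task_share == 0)


# Toy inputs. Only the 0.076 figure comes from the article; every other
# number and all task counts are placeholders, not study data.
toy_tasks = ["computer_math"] * 19 + ["management"] * 1
toy_employment = {
    "computer_math": 0.076,
    "management": 0.10,  # placeholder
    "food_prep": 0.09,   # placeholder
}

report = attention_vs_employment(toy_tasks, toy_employment)
for domain, (task_share, emp, ratio) in report.items():
    print(f"{domain:15s} tasks={task_share:.1%} employment={emp:.1%} ratio={ratio:.1f}")
print(f"employment share with zero tasks: {uncovered_employment(report):.1%}")
```

With real inputs, the task labels would come from a mapping of benchmark tasks to O*NET occupations and the employment shares from labor statistics; the ratio then flags domains that are over- or under-represented relative to their place in the workforce.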

This concentration in technical domains has left economically vital and highly digitized sectors like management and law profoundly underserved.[4][3][1][5] The researchers found that management work, which the study identifies as being 88 percent digital in nature and critical for capital allocation, receives barely one percent of total benchmark attention.[3] Similarly, the legal profession, characterized by its high average wages and reliance on document analysis and precedents, is represented by a mere 71 tasks across the entire benchmark ecosystem. This disparity is not merely a matter of missing data but a fundamental failure to account for the skills that define these roles.[3] When the researchers broke down professional skills into a taxonomy of information intake, mental processes, interpersonal interaction, and work outcomes, they found that current benchmarks focus almost entirely on "Getting Information" and "Working with Computers."[1][3] These two narrow activities cover less than five percent of the skills required in the actual labor market, while critical capabilities such as "Interacting with Others" and "Communicating with Supervisors" are almost entirely ignored by evaluation frameworks.[3]

The root of this obsession with coding and technical tasks appears to be a systemic preference for "verifiability."[6][2] Programming tasks are popular among researchers because they provide a clear, binary signal of success: code either runs and passes a test suite, or it does not. In contrast, the strategic advice given by a manager or the nuanced negotiation of a legal contract does not offer an immediate or easily automated feedback loop. This has led the AI field into a "methodological convenience" trap, where developers optimize for what they can easily measure rather than what matters most for productivity gains.[2][6] The study highlights that in sectors like sales and administrative support, which represent nearly 35 million American workers, the number of benchmark tasks is disproportionately low, and that for food preparation and transportation there are no benchmark tasks at all.[2] By ignoring these sectors, the AI industry risks building agents that are technically brilliant at solving abstract logic puzzles but practically useless in the collaborative, interpersonal environments where the majority of value is created.

The study also takes a sobering look at the "autonomy frontier," measuring how agent performance degrades as tasks move away from simple computer-based instructions toward complex real-world workflows.[2][3] Even in the technical domains where agents are most comfortable, their ability to act autonomously falls off a cliff as procedural complexity increases.[2] This suggests that the high success rates reported on narrow benchmarks like SWE-bench or AgentBench may be misleading indicators of an agent’s readiness for the workforce. Most current agents are one-dimensional, designed to handle a single type of task in a controlled environment. Real professional work, however, requires navigating rich, multi-domain workflows that involve long-term planning, social coordination, and the ability to handle ambiguity, all traits that are largely absent from current evaluation paradigms.

The implications for the AI industry are significant, as billions of dollars in venture capital and corporate research are being funneled into a roadmap that may be fundamentally misaligned with the economic reality of the 21st century. If AI is to fulfill its promise as a general-purpose technology that boosts productivity across the board, the criteria for "intelligence" must be expanded to include the 92 percent of the labor market currently being left behind. The researchers advocate for a new generation of "socially relevant" benchmarks that prioritize coverage, realism, and granular evaluation.[5][7] This would require a shift away from synthetic, LLM-generated tasks that often lack the authentic structure of professional work, toward evaluations that assess not just the final output but the intermediate reasoning and interpersonal steps an agent takes to achieve a goal.[8]

To bridge this gap, the industry must develop better ways to measure non-deterministic outcomes and soft skills. The study’s use of the O*NET database provides a potential blueprint for this transition, allowing developers to ground their research in the actual skill distributions of various professions. By moving beyond the terminal and into the boardroom, the clinic, and the legal office, AI development can begin to address the massive economic potential of sectors that are currently "invisible" to it. The current trajectory suggests a future where we have perfect coding assistants but no tools to help a nurse coordinate patient care or a logistics manager navigate a supply chain crisis.

In conclusion, the Carnegie Mellon and Stanford study serves as a critical call to action for the AI community. The obsession with coding benchmarks has provided a useful starting point for measuring agentic reasoning, but it has also created a distorted view of what constitutes progress.[3] As AI agents move from experimental prototypes to enterprise tools, the metrics for their success must reflect the diverse and complex nature of human labor. Until evaluation frameworks are aligned with the actual structure of the U.S. labor market, the true economic impact of artificial intelligence will remain capped by the industry’s own narrow definitions of utility. Reaching the other 92 percent of the workforce will require more than just faster models; it will require a fundamental reimagining of what we want AI agents to do and how we measure their worth in a human-centric economy.