Netflix Launches Open-Source VOID AI to Erase Video Objects and Simulate Complex Physics

Netflix’s open-source VOID framework uses physics-based reasoning to seamlessly erase video objects and their secondary environmental interactions.

April 4, 2026

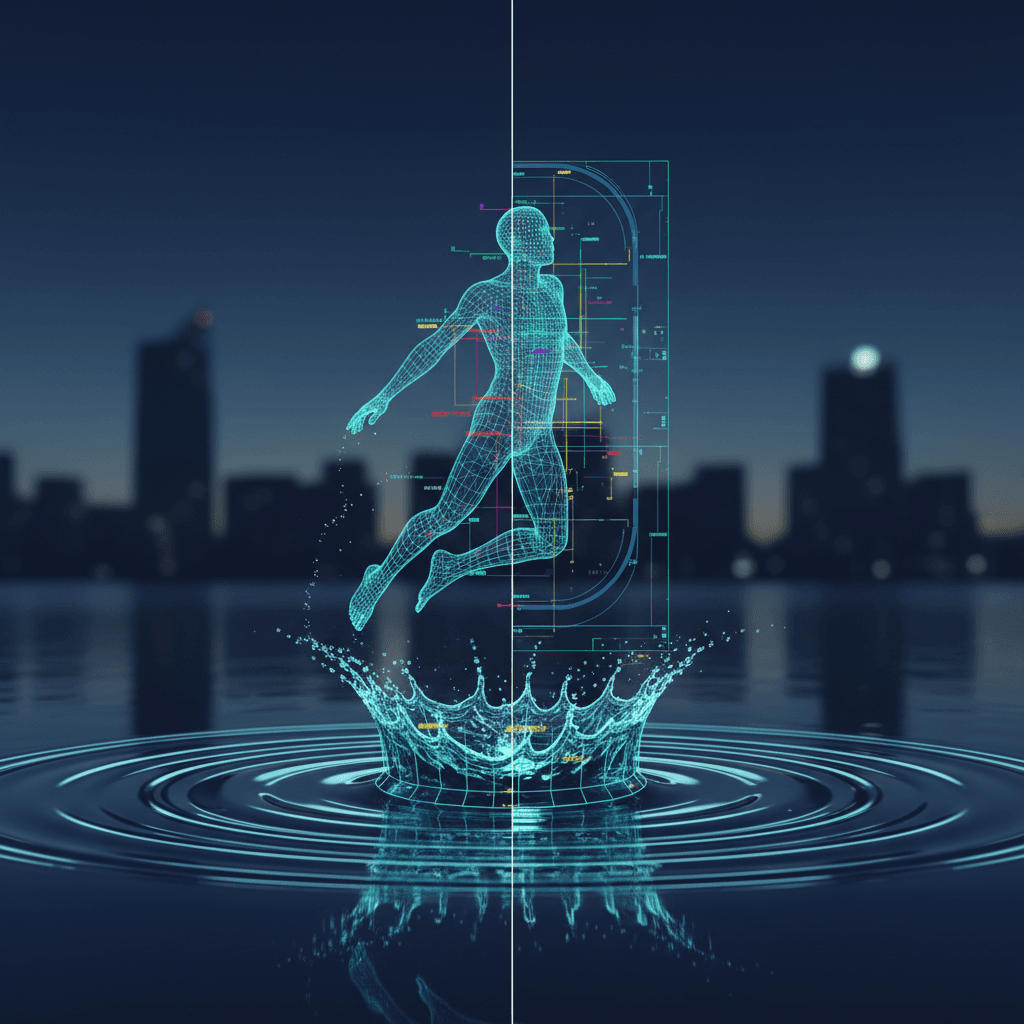

Netflix has released an open-source artificial intelligence framework named VOID, short for Video Object and Interaction Deletion, that removes objects from video along with the physical effects they cause.[1] While previous generations of video inpainting tools focused on the aesthetic removal of objects, filling the empty space with background textures or light, VOID is the first major framework to address the underlying physics of a scene.[1] Using a two-stage reasoning process, the system identifies not just the primary object to be removed but also the secondary physical effects that object has on its environment.[1] If an editor removes a person jumping into a swimming pool, the AI does not just erase the individual; it also erases the resulting splash and reconstructs the surface of the water as if it had remained undisturbed.[2] This capacity to rewrite the "physics" left behind moves video inpainting from simple visual replacement toward causal reasoning about how a scene evolves across space and time.[1]

The technical core of VOID is built upon a 5-billion-parameter CogVideoX transformer model, but its most significant innovation lies in the introduction of what researchers call a quadmask.[3][1] Unlike traditional binary masks that only distinguish between pixels to keep and pixels to delete, the quadmask utilizes four distinct semantic values.[1] These values represent the primary object for removal, the overlapping regions, the background to be preserved, and, crucially, the affected regions.[1] This fourth category allows the model to understand the causal chain of events in a video.[1] For example, if a person is holding a guitar and the person is removed, the framework identifies the guitar as an "affected" object.[1] The AI then calculates a counterfactual trajectory for the guitar, causing it to fall naturally to the ground under the influence of gravity rather than leaving it floating in mid-air or removing it entirely.[1] This reasoning is facilitated by a vision-language model pipeline that uses high-level causal logic to predict how the remaining elements of a scene should behave in the absence of the excised object.[1]
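To make the four-valued mask concrete, here is a minimal sketch in plain Python that merges two binary masks, one for the object to remove and one for the region it affects, into a single quadmask. The integer codes, the `QuadLabel` and `build_quadmask` names, and the reading of "overlapping" as pixels where the two masks intersect are assumptions made for illustration; the article does not describe VOID's actual encoding.

```python
from enum import IntEnum


class QuadLabel(IntEnum):
    # Hypothetical integer codes; VOID's real encoding is not given in the article.
    PRIMARY = 0     # pixels of the object to remove
    OVERLAP = 1     # pixels where the object and an affected region intersect
    AFFECTED = 2    # pixels whose motion changes once the object is gone
    BACKGROUND = 3  # pixels to preserve as they are


def build_quadmask(primary, affected):
    """Merge two same-shaped binary masks (lists of rows of 0/1) into one four-valued mask."""
    same_rows = len(primary) == len(affected)
    if not same_rows or any(len(p) != len(a) for p, a in zip(primary, affected)):
        raise ValueError("masks must have the same shape")
    quad = []
    for p_row, a_row in zip(primary, affected):
        row = []
        for p, a in zip(p_row, a_row):
            if p and a:
                row.append(QuadLabel.OVERLAP)
            elif p:
                row.append(QuadLabel.PRIMARY)
            elif a:
                row.append(QuadLabel.AFFECTED)
            else:
                row.append(QuadLabel.BACKGROUND)
        quad.append(row)
    return quad


# Toy 2x4 frame: a "person" on the left and a "guitar" that overlaps it by one pixel.
person = [[1, 1, 0, 0],
          [1, 1, 0, 0]]
guitar = [[0, 1, 1, 0],
          [0, 0, 0, 0]]
quadmask = build_quadmask(person, guitar)
```

A binary mask would collapse the guitar pixels into either "delete" or "keep"; the extra labels are what let a model treat the guitar as something to re-simulate rather than erase or freeze.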

To achieve this level of physical plausibility, researchers at Netflix and Sofia University trained the model on large synthetic datasets designed to teach the AI the laws of motion and interaction. These datasets, known as HUMOTO and Kubric, consist of paired counterfactual videos.[4][5][1] One video shows a physical interaction, such as a ball striking a stack of blocks or a human lifting a heavy box, while the paired "counterfactual" video shows the same scene with the primary actor removed and the resulting physics simulated accordingly. By training on thousands of these physics-compliant scenarios, VOID learned to generalize physical rules across different environments.[1] This training allows the model to handle complex real-world footage where objects collide, shadows shift, or fluids are displaced, so that the final output maintains a degree of temporal and physical consistency that previously required manual, frame-by-frame visual effects work.[1]

The implications for the global film and television industry are significant, particularly in post-production. Traditionally, removing an unwanted object or person from a shot, which typically combines rotoscoping and inpainting, is one of the most labor-intensive and expensive tasks in visual effects. Even with existing AI tools, editors often spend hours fixing the "ghosting" effects or physics anomalies left behind by imperfect deletions.[1] In human preference testing conducted against industry-leading models like Runway, VOID was preferred by observers 64.8 percent of the time, while the nearest competitor garnered only 18.4 percent.[2][6][1] This suggests that VOID could significantly reduce the cost and time associated with "cleaning" footage, allowing creators to fix continuity errors or remove distracting elements with a few clicks.[1] The framework also includes a second refinement pass that uses warped-noise stabilization to keep long video clips stable and free of the flickering "morphing" artifacts that often plague AI-generated video.[1]
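For a sense of scale, the two reported preference shares can be compared directly. The short calculation below uses only the 64.8 and 18.4 percent figures quoted above; treating the remainder as the share that went to all other options in the study is an assumption, since the article does not break it down.

```python
void_share = 64.8    # percent of comparisons in which VOID was preferred
runner_up = 18.4     # percent for the nearest competitor

# How many times more often VOID was preferred than the runner-up.
preference_ratio = round(void_share / runner_up, 2)

# Share left over for every other option (assumed: other models or no preference).
remaining_share = round(100 - void_share - runner_up, 1)

print(f"VOID preferred about {preference_ratio}x as often as the nearest competitor")
print(f"Share not accounted for by the top two: {remaining_share}%")
```

On these figures VOID was chosen roughly three and a half times as often as the next-best model, with about a sixth of responses going elsewhere.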

Beyond the efficiency gains for professional studios, the open-sourcing of VOID signals a broader shift in the democratization of high-end visual effects.[1] By making the model weights and the underlying code available under an open-source license, Netflix is providing independent filmmakers and small creators with access to tools that were previously the exclusive domain of high-budget Hollywood production houses. The move continues Netflix’s long-standing strategy of contributing to the open-source community, building on the success of earlier infrastructure tools like Metaflow.[1] By positioning itself as a leader in open-source AI research, the company is not only fostering a wider ecosystem for talent but is also helping to set the standards for how generative AI interacts with traditional media production.[1] This open approach encourages external developers to build upon the VOID framework, potentially leading to third-party plugins for popular editing software like Adobe Premiere or DaVinci Resolve.[1]

However, the ability to erase objects and rewrite the physical reality of a video so convincingly also raises significant ethical questions about the authenticity of digital media.[1] As the "uncanny valley" of video editing vanishes, the difference between an original recording and a manipulated one becomes increasingly difficult to detect.[1] If an AI can remove a person from a scene and convincingly simulate the movement of the grass they were standing on or the shadows they were casting, the visual cues that humans usually rely on to spot "fake" footage disappear. This heightened capability for "perfect erasure" poses challenges for journalism, legal evidence, and social media trust.[1] While VOID is marketed as a creative tool for storytelling, its existence underscores the urgent need for robust digital watermarking and provenance standards, such as the C2PA specification, so that the history of a video file can be tracked and verified as it moves through various AI-powered editing pipelines.[1]

As the AI industry moves toward more sophisticated world-simulation models, the release of VOID marks a transition from "pixels that look right" to "models that understand why."[1] By integrating causal reasoning with video diffusion, the framework moves the industry closer to a future where video is as malleable as text.[1] The success of this approach suggests that future developments in video AI will focus less on raw generative power and more on the nuanced understanding of the physical world. For Netflix, this research is not just about reducing VFX budgets; it is about building a foundation for a new era of automated content creation where the boundaries between the captured world and the synthesized world are entirely fluid. The open-source availability of such a powerful tool ensures that the conversation around these technological shifts will be driven not just by a handful of large corporations, but by a global community of researchers and artists who will now begin to explore the limits of this physics-aware reality.