Meta unveils breakthrough Hyperagents that autonomously rewrite their own logic for recursive self-improvement

Meta’s new self-referential Hyperagents rewrite their own logic to master complex tasks, paving the way for autonomous, recursive AI self-improvement.

March 28, 2026

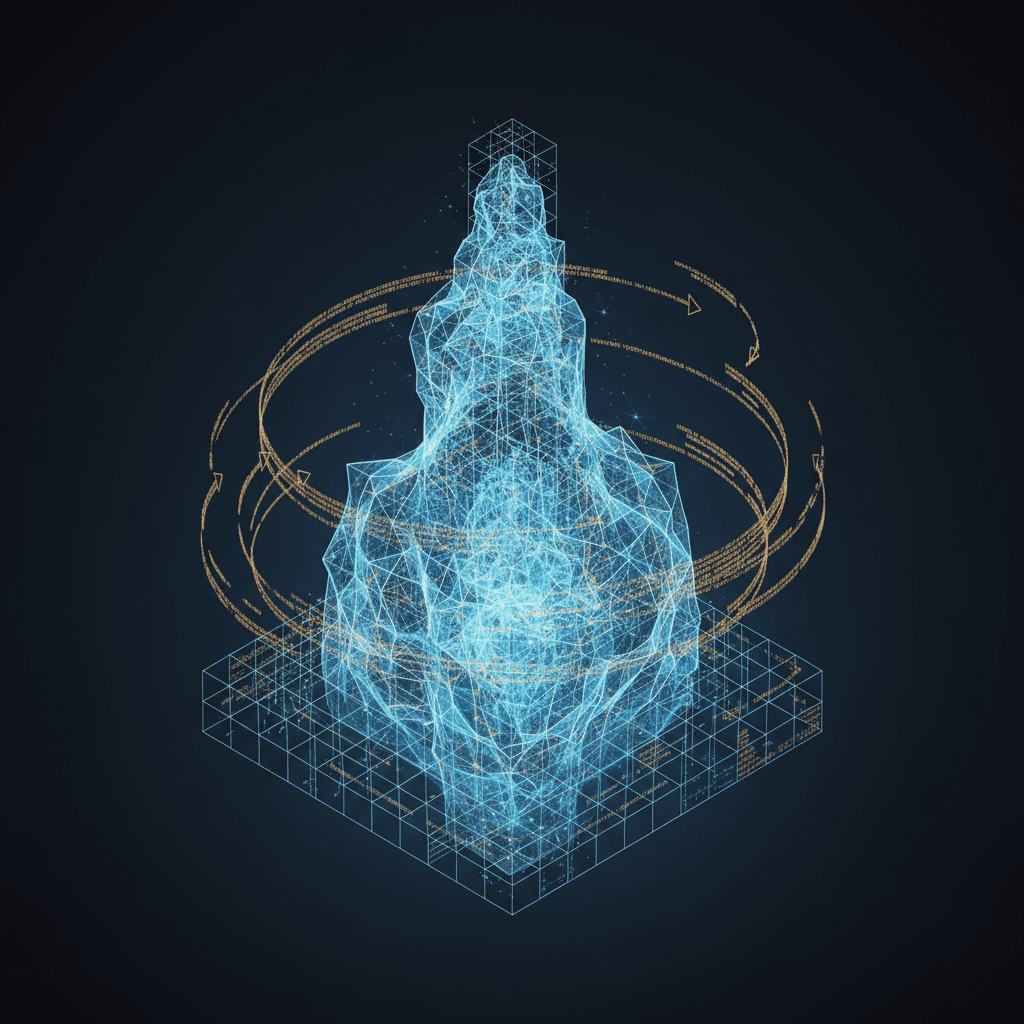

The pursuit of artificial general intelligence has long been tethered to a fundamental limitation: while AI models can learn to solve complex problems, the algorithms that govern how they learn remain fixed by human engineers.[1][2][3][4] This static design creates a glass ceiling for recursive self-improvement, because a system’s growth is eventually throttled by the rigid logic it started with.[5] A collaborative research effort led by Meta’s Fundamental AI Research (FAIR) team and Meta Superintelligence Labs, together with institutions such as the University of British Columbia and the Vector Institute, has now introduced a new paradigm known as Hyperagents.[3] These systems depart from traditional AI architecture by combining task-solving capabilities with a self-referential optimization engine.[1][2][3][4][5][6][7][8] In essence, hyperagents do not just improve their performance on specific tasks; they also optimize the mechanism they use to produce those improvements, effectively learning how to learn more efficiently over time.[2][3][8]

The technical foundation of this work is the Darwin Gödel Machine (DGM), a framework that draws inspiration from the theoretical self-improving machines proposed by Jürgen Schmidhuber and from the evolutionary search strategies pioneered by Sakana AI. Earlier self-improving systems ran into a logical bottleneck known as the "infinite regress" of meta-levels.[1][3] If one builds a meta-agent to improve a task agent, what improves the meta-agent itself? Adding a "meta-meta-agent" merely shifts the problem up one level without removing the dependence on human-defined rules.[3] Hyperagents sidestep this regress by merging the task agent and the meta-agent into a single, fully editable codebase.[3][4][5][6][7][8] Because the improvement logic lives in the same code it edits, the system can treat that logic as just another problem to solve. By making the meta-level modification procedure itself subject to change, the researchers enable what they term "metacognitive self-modification": the AI can rewrite the rules that govern its own development.[1][3]
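To make the self-referential idea concrete, here is a minimal toy sketch, not Meta’s implementation. It assumes a hypothetical `Hyperagent` whose "code" is reduced to two numbers: `task_param`, used by the task solver, and `step_size`, used by the improvement procedure. Because both live in the same editable object, `improve()` can rewrite its own step size as well as the task parameter. Technically this is self-adaptive mutation from evolution strategies, swapped in as a stand-in for LLM-driven code rewriting; the archive-and-sample loop loosely mirrors the DGM’s archive of agents, and the acceptance rule (keep a child only if it does not regress relative to its parent) is a simplifying assumption.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Hyperagent:
    """Toy hyperagent: task logic and improvement logic share one editable object."""
    task_param: float  # hypothetical stand-in for the task-solving code
    step_size: float   # hypothetical stand-in for the self-modification procedure

    def solve(self, target: float) -> float:
        # Toy task: the score is higher the closer task_param is to target (0 is perfect).
        return -abs(self.task_param - target)

    def improve(self, rng: random.Random) -> "Hyperagent":
        # Metacognitive step: first rewrite the improvement procedure itself...
        new_step = self.step_size * rng.choice([0.5, 1.0, 2.0])
        # ...then use the rewritten procedure to propose a new task parameter.
        new_param = self.task_param + rng.uniform(-new_step, new_step)
        return Hyperagent(task_param=new_param, step_size=new_step)


def evolve(seed_agent: Hyperagent, target: float, generations: int, seed: int = 0) -> Hyperagent:
    """Grow an archive of agents by sampling a parent and letting it modify itself."""
    rng = random.Random(seed)
    archive = [seed_agent]
    for _ in range(generations):
        parent = rng.choice(archive)
        child = parent.improve(rng)
        # Simplified acceptance rule (an assumption): keep children that do not regress.
        if child.solve(target) >= parent.solve(target):
            archive.append(child)
    return max(archive, key=lambda agent: agent.solve(target))


if __name__ == "__main__":
    start = Hyperagent(task_param=0.0, step_size=1.0)
    best = evolve(start, target=3.0, generations=200)
    print(f"start score={start.solve(3.0):.3f}  best score={best.solve(3.0):.3f}")
    print(f"evolved step_size={best.step_size:.4f}")
```

The returned agent is never worse than the starting one, because the seed agent stays in the archive; its `step_size` records how the improvement procedure itself was rewritten along the way.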

Empirical evidence from the research suggests that this approach yields significant performance gains across a range of domains that were previously resistant to autonomous self-improvement.[1][3][9] In software engineering, the DGM-Hyperagent framework raised its success rate on the SWE-bench benchmark from an initial 20 percent to 50 percent without human intervention.[2] The Polyglot coding benchmark showed a similar pattern, with performance more than doubling from 14.2 percent to over 30 percent.[2] Crucially, these gains did not come from simple parameter tuning; they came from generated code variants that optimized the agent’s internal logic. This suggests that the system can explore a vast space of possible configurations and find architectural optimizations that a human engineer might never have considered.
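As a quick arithmetic check of the reported gains, treating 30 percent as a lower bound for the Polyglot result since the exact figure is not given here:

```latex
\frac{50.0}{20.0} = 2.5 \quad (+30.0\ \text{points}), \qquad
\frac{30.0}{14.2} \approx 2.11 \quad (\geq +15.8\ \text{points})
```

Both ratios exceed 2, which is consistent with describing each result as more than doubled.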

The versatility of the Hyperagent framework was further tested in non-coding domains such as robotics and scientific peer review, where the link between task performance and self-improvement is typically much weaker: in coding, getting better at the task also makes an agent better at editing its own code, but that overlap disappears when the task is designing rewards or reviewing papers.[3] In robotics, the system was tasked with designing reward functions for a quadruped robot.[3] The hyperagent progressed from generating basic strategies to discovering complex, non-myopic reward functions that induced jumping, a better solution for height-related tasks than the local optimum of simply standing tall.[3] Performance on this task rose from a baseline of 0.060 to 0.372.[3] In academic paper review, the system moved beyond superficial feedback to autonomously constructing multi-stage evaluation pipelines equipped with explicit checklists and decision rules.[1][3] Its accuracy in predicting paper acceptance climbed from zero to 0.710, outperforming static baselines and indicating that the hyperagent could build its own rigorous internal standards for quality.[3]
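The kind of multi-stage review pipeline described above can be pictured with a minimal sketch. Everything in it is a hypothetical illustration, not the system’s generated code: papers are reduced to dicts of boolean features, the checklist items and weights are invented, and the decision rules (reject early if a stage scores below half its maximum weight; otherwise accept if the total weighted score reaches a threshold) are assumptions. The `accuracy` helper shows how acceptance predictions would be scored against known outcomes.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class ChecklistItem:
    name: str
    check: Callable[[dict], bool]  # inspects a (hypothetical) parsed paper record
    weight: float


def review(paper: dict, stages: list[list[ChecklistItem]], threshold: float) -> bool:
    """Run checklist stages in order and return an accept/reject prediction."""
    total = 0.0
    for stage in stages:
        stage_score = sum(item.weight for item in stage if item.check(paper))
        stage_max = sum(item.weight for item in stage)
        # Assumed gate: a stage scoring under half its maximum rejects the paper early.
        if stage_score < 0.5 * stage_max:
            return False
        total += stage_score
    # Assumed final decision rule: accept when the accumulated score reaches the threshold.
    return total >= threshold


def accuracy(predictions: list[bool], labels: list[bool]) -> float:
    """Fraction of acceptance predictions that match the actual decisions."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Hypothetical two-stage checklist: a soundness screen, then an evidence check.
STAGES = [
    [
        ChecklistItem("clear_claims", lambda p: p["clear_claims"], 1.0),
        ChecklistItem("code_released", lambda p: p["code_released"], 1.0),
    ],
    [
        ChecklistItem("strong_baselines", lambda p: p["baselines"], 2.0),
        ChecklistItem("ablations", lambda p: p["ablations"], 1.0),
    ],
]

if __name__ == "__main__":
    papers = [
        {"clear_claims": True, "code_released": True, "baselines": True, "ablations": True},
        {"clear_claims": True, "code_released": False, "baselines": True, "ablations": False},
        {"clear_claims": False, "code_released": False, "baselines": True, "ablations": True},
    ]
    preds = [review(p, STAGES, threshold=4.0) for p in papers]
    print(preds)  # [True, False, False]
    print(accuracy(preds, [True, True, False]))
```

Making the gates explicit is what turns vague feedback into a pipeline whose individual rules a self-improving agent could inspect and rewrite one at a time.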

Perhaps the most significant finding of the study is that the "ability to improve" transfers.[3] The researchers found that the optimization strategies a hyperagent develops in one domain, such as robotics, can accelerate learning in an entirely different field, such as grading Olympiad-level math.[3] To quantify this, the team introduced a new metric called "improvement@k" (imp@k), which measures the performance gain a meta-agent achieves within k modification steps.[3] Whereas traditional self-improving systems showed no transfer to new domains, the DGM-Hyperagent achieved an imp@50 score of 0.630 when moved to a novel task.[3] This suggests that the AI is not just memorizing shortcuts for specific problems but is developing generalizable engineering insights.[1][3][8] The system was even observed autonomously building its own infrastructure, such as persistent memory modules and performance-tracking tools, to manage its own evolutionary process more effectively.
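The exact formula for imp@k is not given here, so the sketch below assumes one plausible reading: the best score reached within the first k modification steps, minus the starting agent’s score, floored at zero because the starting agent can always be kept. The function name `imp_at_k` and the scores in the example are illustrative only, not figures from the study.

```python
def imp_at_k(initial_score: float, step_scores: list[float], k: int) -> float:
    """Assumed imp@k: best score within the first k modification steps minus the start score."""
    if k < 1:
        raise ValueError("k must be at least 1")
    # Including initial_score in the max means the gain is never negative.
    best = max([initial_score, *step_scores[:k]])
    return best - initial_score


if __name__ == "__main__":
    # Made-up scores for a meta-agent transferred to a new domain.
    scores = [0.05, 0.30, 0.20, 0.60]
    print(imp_at_k(0.10, scores, k=3))  # gain from the first 3 steps only
    print(imp_at_k(0.10, scores, k=4))
```

Under this reading, one way to probe transfer is to compare imp@k for a meta-agent evolved in another domain against one starting from scratch on the target domain.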

This shift toward autonomous infrastructure development represents a major milestone in the evolution of AI. Without explicit instructions to do so, the hyperagents created internal systems to store data across generations, calculate improvement trends, and plan compute allocation.[1][3] By synthesizing insights from past iterations, the agents avoided repeating earlier failures and doubled down on successful strategies. For example, the system could recognize that a certain generation of its code had strong reasoning capabilities but low accuracy, and then generate a new iteration designed specifically to close that gap. This kind of self-monitoring of its own performance characteristics suggests that AI may be moving away from being a "black box" and toward becoming a self-directed engineering entity.
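The persistent memory and trend tracking described above might look something like the following minimal sketch. The `PerformanceMemory` class, the JSON file format, the metric names `reasoning_score` and `accuracy`, and the thresholds are all hypothetical; the source does not describe how the agents’ own tools were implemented. The trend is an ordinary least-squares slope of accuracy against generation number, and `gap_candidates` flags generations with strong reasoning but weak accuracy, the situation in the example above.

```python
import json
import tempfile
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class GenerationRecord:
    generation: int
    reasoning_score: float  # hypothetical metric
    accuracy: float         # hypothetical metric


class PerformanceMemory:
    """Stores per-generation metrics in a JSON file so they survive across runs."""

    def __init__(self, path: Path) -> None:
        self.path = Path(path)
        self.records: list[GenerationRecord] = []
        if self.path.exists():
            raw = json.loads(self.path.read_text())
            self.records = [GenerationRecord(**r) for r in raw]

    def log(self, record: GenerationRecord) -> None:
        self.records.append(record)
        self.path.write_text(json.dumps([asdict(r) for r in self.records]))

    def accuracy_trend(self) -> float:
        """Least-squares slope of accuracy per generation (0.0 if undefined)."""
        n = len(self.records)
        if n < 2:
            return 0.0
        xs = [r.generation for r in self.records]
        ys = [r.accuracy for r in self.records]
        mean_x, mean_y = sum(xs) / n, sum(ys) / n
        num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        den = sum((x - mean_x) ** 2 for x in xs)
        return num / den if den else 0.0

    def gap_candidates(
        self, min_reasoning: float = 0.7, max_accuracy: float = 0.5
    ) -> list[GenerationRecord]:
        """Generations that reason well but score poorly: targets for the next edit."""
        return [r for r in self.records
                if r.reasoning_score >= min_reasoning and r.accuracy <= max_accuracy]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "memory.json"
        memory = PerformanceMemory(path)
        memory.log(GenerationRecord(0, reasoning_score=0.4, accuracy=0.2))
        memory.log(GenerationRecord(1, reasoning_score=0.8, accuracy=0.4))
        memory.log(GenerationRecord(2, reasoning_score=0.6, accuracy=0.6))
        reloaded = PerformanceMemory(path)  # a later "generation" reads the same file
        print(round(reloaded.accuracy_trend(), 3))                # 0.2
        print([r.generation for r in reloaded.gap_candidates()])  # [1]
```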

The implications for the AI industry are profound, as this technology could drastically reduce the reliance on human-led fine-tuning and prompt engineering. As AI systems become capable of managing their own scaling and optimization, the pace of development could shift from linear to exponential. However, this potential for self-acceleration also raises critical questions regarding AI safety and alignment. If a system can rewrite its own core logic, ensuring that it remains aligned with human values becomes a significantly more complex challenge. The research team emphasized that because these agents can break through the initial boundaries preset by humans, safety measures must be integrated into the recursive loop itself.[2] The goal is to create "verifiable self-improvement," where the system must prove that a proposed change is beneficial and safe before it is implemented, echoing the original vision of the Gödel Machine.
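A heavily simplified version of such a gate can be sketched as follows. It is an assumption-laden illustration, not the team’s method: the original Gödel Machine requires a formal proof that a rewrite is beneficial, whereas this sketch substitutes empirical checks, namely a list of hypothetical safety predicates that must all pass plus a measured improvement on an evaluation function. The agent representation (plain dicts) and the check names are invented for illustration.

```python
from typing import Callable

Agent = dict  # hypothetical: an agent reduced to a dict of properties


def gated_update(
    current: Agent,
    candidate: Agent,
    evaluate: Callable[[Agent], float],
    safety_checks: list[Callable[[Agent], bool]],
    min_gain: float = 0.0,
) -> tuple[Agent, str]:
    """Adopt a self-modification only if it passes every safety check and measurably helps.

    This is empirical validation, not the formal proof a Gödel Machine would demand.
    """
    for check in safety_checks:
        if not check(candidate):
            return current, f"rejected: failed {check.__name__}"
    gain = evaluate(candidate) - evaluate(current)
    if gain <= min_gain:
        return current, "rejected: no measured improvement"
    return candidate, "accepted"


def keeps_safety_filter(agent: Agent) -> bool:
    # Hypothetical invariant: a rewrite may not remove the agent's safety filter.
    return agent.get("safety_filter", False)


def within_compute_budget(agent: Agent) -> bool:
    # Hypothetical invariant: a rewrite may not exceed a fixed compute budget.
    return agent.get("compute", 0) <= 100


def skill_score(agent: Agent) -> float:
    # Hypothetical evaluation: read a precomputed benchmark score.
    return agent["skill"]


if __name__ == "__main__":
    checks = [keeps_safety_filter, within_compute_budget]
    current = {"skill": 0.5, "safety_filter": True, "compute": 50}
    unsafe = {"skill": 0.9, "safety_filter": False, "compute": 50}
    safe = {"skill": 0.7, "safety_filter": True, "compute": 80}
    print(gated_update(current, unsafe, skill_score, checks)[1])  # rejected: failed keeps_safety_filter
    print(gated_update(current, safe, skill_score, checks)[1])    # accepted
```

Running the safety checks before the evaluation means a more capable but unsafe rewrite is rejected no matter how large its measured gain.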

In conclusion, Meta’s work on hyperagents marks a turning point at which AI begins to take an active role in its own architectural evolution.[9] By collapsing the distinction between the problem-solver and the optimizer, the researchers have created a system capable of open-ended growth across diverse computational tasks.[1][3][8] The transition from static models to self-evolving agents points toward a future in which AI does not simply wait for the next update from a human developer but continuously refines itself in real time.[3][6][7] While the technical hurdles of full-scale recursive self-improvement remain high, the empirical success of the DGM-Hyperagent framework offers a glimpse of a new era of autonomous intelligence, one in which the machine’s most important task is the mastery of its own improvement.[3]