Meta Oversight Board warns crowdsourced moderation fails to stop rapid and sophisticated AI disinformation

Meta’s Oversight Board warns that crowdsourced moderation is too slow and vulnerable to counter sophisticated AI-generated disinformation.

March 27, 2026

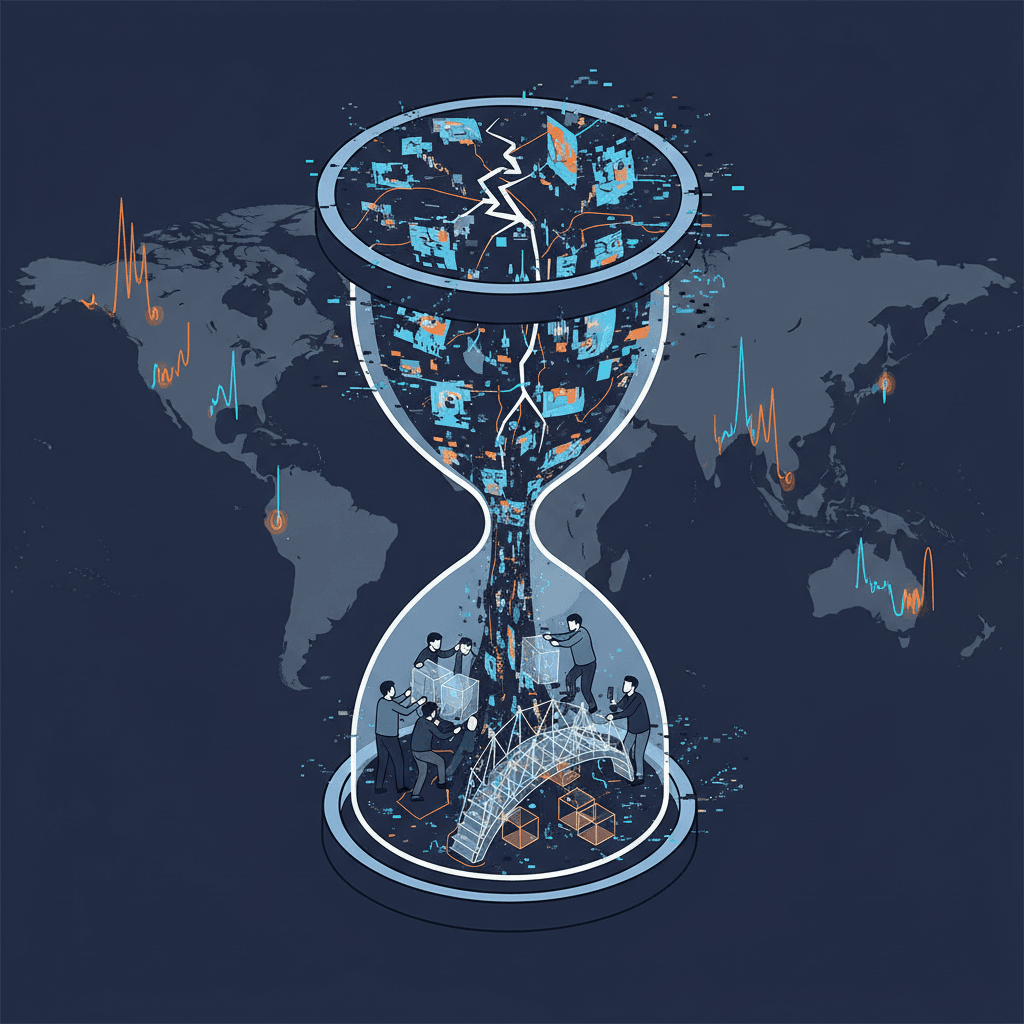

Meta’s pursuit of a decentralized, crowdsourced solution to content moderation is facing a severe reality check from its own semi-independent oversight body.[1][2] The Oversight Board, tasked with reviewing the tech giant’s policy decisions, has issued a comprehensive warning regarding the planned global expansion of Community Notes.[3][2][4][1][5][6] The board’s findings suggest that while the program is designed to empower users and reduce the company’s direct role in policing speech, it remains fundamentally overmatched by the speed, scale, and sophistication of artificial intelligence-generated disinformation. According to the advisory opinion, the system is currently too slow and too susceptible to organized manipulation to serve as a reliable defense against disinformation in the modern information landscape.[3] This critique arrives as Meta attempts to pivot away from traditional, third-party fact-checking partnerships in favor of a model that prioritizes community consensus, a shift that the Oversight Board argues could have dangerous real-world consequences if implemented without significant safeguards.[4][2]

The core of the problem lies in the structural mismatch between the rapid-fire nature of AI content generation and the sluggish consensus-building required for crowdsourced corrections. In its analysis of the pilot program, the board highlighted that the process of community labeling is often paralyzed by its own design.[2] For a note to appear publicly on a post, it must achieve a high level of agreement among contributors who have historically held differing viewpoints.[4] While this is intended to ensure neutrality and prevent partisan dogpiling, it creates a massive latency gap. Research into similar systems on other platforms suggests that the average delay before a correction becomes visible can range from twenty-six to more than sixty-five hours.[3] In the context of viral disinformation or deepfakes during a breaking news event, this delay renders the correction effectively useless, as the misleading content usually reaches its peak audience long before the community can agree on a label. The board noted that AI-powered tools now allow bad actors to create and manage vast networks of accounts at a scale that can easily overwhelm these community-driven efforts, creating a flood of disinformation that the current system simply cannot process.

The data gathered during the initial phases of the program in the United States paints a stark picture of its current limitations compared to professional moderation.[3] According to the oversight body’s report, only about six percent of all proposed community notes ever reach the threshold required for public display.[3] This low success rate results in a massive coverage gap. In one instance cited by researchers, professional fact-checkers were able to apply labels to tens of millions of posts across a specific region in a six-month period, whereas the community-driven system produced fewer than one thousand visible notes during a similar timeframe in the American market. This disparity suggests that relying on a small pool of volunteer contributors is a vastly inadequate substitute for a professionalized, scaled operation. Furthermore, because Meta has confirmed there are currently no punitive consequences for users whose posts receive a community note—such as distribution strikes or monetization penalties—the system acts more as a footnote than a deterrent, failing to provide the enforcement teeth necessary to discourage the spread of harmful falsehoods.

The Oversight Board’s most urgent warnings focus on the risks of expanding this program into volatile geopolitical environments. Meta was advised to exercise extreme caution in deploying the system, or to avoid deploying it outright, in countries with repressive human rights records, ongoing armed conflicts, or high-stakes electoral cycles.[1][4][2][6] In these contexts, the "community" is rarely a neutral group of truth-seekers; instead, it is often composed of polarized factions that can be easily mobilized for coordinated harassment or state-sponsored censorship. The board expressed concern that bad actors could use the consensus mechanism to suppress legitimate information by flooding the system with negative ratings, effectively silencing dissent under the guise of community moderation. There is also a significant risk to the contributors themselves. In countries where press freedom is restricted, participating in a public-facing fact-checking initiative could expose individuals to state retaliation, and the board found that Meta’s current privacy protections for these volunteers are insufficient for high-risk regions.

Beyond the immediate tactical failures, the report identifies a broader strategic vulnerability in the way Meta manages its information environment.[3][2][7] By reducing its reliance on traditional fact-checkers, the company is increasingly dependent on the reliability of the broader information ecosystem, a dependency that the board calls fragile.[2] In regions where the local media is weak or state-controlled, there is no objective baseline of truth for community members to reference when writing or rating notes. This creates a feedback loop where the community notes system simply mirrors existing societal divisions rather than clarifying the facts. The board pointed out that AI-generated "pink slime" news outlets (automated websites that churn out low-quality, biased content designed to look like local news) further complicate matters by providing a veneer of legitimacy that community contributors might inadvertently rely upon. Without a robust layer of professional verification, the crowdsourced model risks becoming a tool that amplifies confusion rather than resolving it.

The implications for the wider AI and social media industry are profound, signaling that the "hands-off" approach to moderation may be reaching its limit. As generative AI makes it nearly costless to produce high-fidelity deceptive media, the burden of proof is shifting onto platforms in a way that volunteer-led systems are not equipped to handle. The Oversight Board recommended that Meta not view community-based moderation and professional fact-checking as mutually exclusive, but rather as complementary tools that must be part of a multi-layered defense. The board issued seventeen specific recommendations, including the development of a more sophisticated risk matrix to guide global expansion and the implementation of stricter automated detection for AI-generated manipulation. For an industry that has spent years attempting to automate or decentralize the difficult work of content moderation, the message from the supervisory body is clear: technology and crowdsourcing are not a panacea for the complex human and political challenges of disinformation.

In its conclusion, the oversight body emphasized that Meta’s responsibility to protect its users cannot be outsourced to the community without significant institutional support and human oversight. The warning serves as a pivotal moment for the company as it navigates the tension between protecting freedom of expression and preventing tangible real-world harm. If Meta proceeds with a global rollout of the program in its current state, it risks creating a system that is easily gamed by the very actors it seeks to restrain. The board’s analysis suggests that for a platform with billions of users, a moderation strategy that relies on the speed of a consensus-seeking crowd to fight the speed of an algorithm is fundamentally flawed. To truly address the threat of AI-driven disinformation, the industry may need to return to more resource-intensive, professionalized methods of verification, rather than hoping that a decentralized network of volunteers can hold the line against an automated tide of falsehoods.