Know3D leverages multimodal AI to eliminate blind spots in 3D generation from single photos

New research leverages multimodal AI to eliminate 3D blind spots by giving creators precise, text-driven control over hidden geometry

April 4, 2026

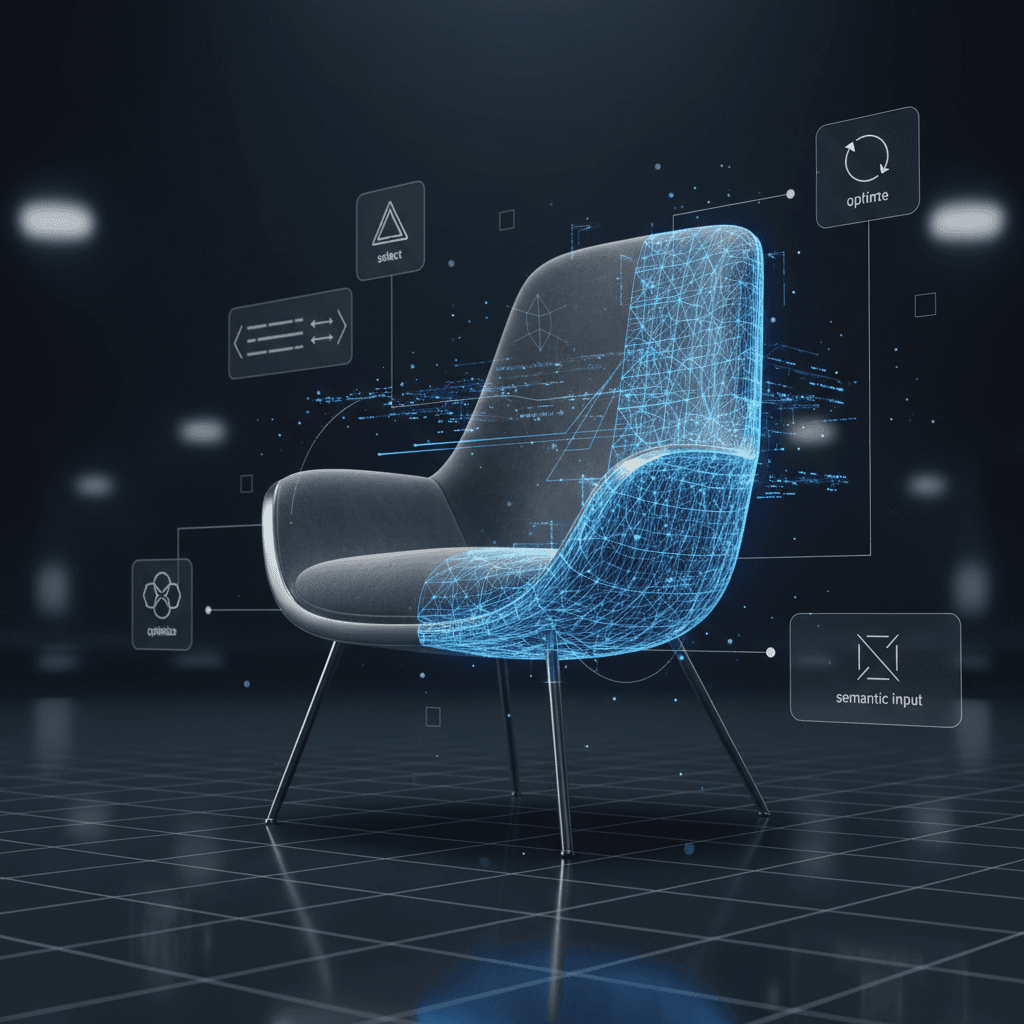

Generating a complete three-dimensional object from a single two-dimensional photograph has long been one of the hardest problems in computer vision. While AI models can now recreate the visible portions of an object with striking accuracy, the hidden regions, especially the back side, remain a persistent "blind spot." Most current single-view 3D reconstruction models are forced to guess, hallucinating the unseen geometry from statistical regularities in their training data. The result is often a "Janus" artifact, in which an object appears to have two front faces, or generic, blurry textures that lack meaningful detail or structural logic. A new research framework called Know3D aims to change this by tapping the world knowledge embedded in multimodal large language models, giving users precise semantic control over these previously invisible regions.[1][2][3][4][5][6][7]

The core innovation of Know3D is turning what was a stochastic, uncontrollable process into a deliberate, user-guided workflow.[4][3][1] In conventional 3D generation pipelines, the model relies on a limited set of 3D training data to guess what lies behind a chair, a teapot, or a building. Because 3D datasets are far smaller and less diverse than their 2D counterparts, these models often produce "hallucinated" back sides that are physically implausible or mismatched with the front.[2] Know3D addresses this by bringing the commonsense reasoning of vision-language models (VLMs) into the generation process.[1][2][6][8] With a simple text prompt, a user can specify what should appear on the hidden side of an asset, such as a hidden drawer on a wooden chair or a specific logo on the back of a coffee cup.

Technically, Know3D is a multi-stage architecture that uses a diffusion model as an intermediate "bridge" between high-level semantic understanding and low-level geometric reconstruction.[9][2][5][1] At its core is a multimodal foundation model, specifically a fine-tuned version of Qwen-Image-Edit, which combines the reasoning capabilities of Qwen2.5-VL with a Diffusion Transformer (DiT).[9] Rather than generating a separate image of the back side and stitching it onto the front, Know3D uses a technique known as latent hidden-state injection.[1][4][7] During the diffusion model's denoising process, the researchers extract intermediate hidden states from its Multimodal Diffusion Transformer (MMDiT) layers.[1][9] These latent states carry spatial and structural priors about the object's three-dimensional layout in a form that a decoded 2D image does not preserve directly.
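The extraction step can be pictured with a toy example. The sketch below is not Know3D's code: `ToyMMDiT` and `denoise_and_capture` are hypothetical names, the "blocks" are random residual layers standing in for real joint-attention MMDiT layers, the update rule is a placeholder rather than a real sampler, and the layer and step indices chosen for capture are illustrative assumptions. It only shows the mechanics of running a denoising loop and keeping intermediate hidden states instead of decoding the final latent into pixels.

```python
import numpy as np


class ToyMMDiT:
    """Hypothetical stand-in for a multimodal diffusion transformer.

    Each block is a residual random linear map + tanh; a real MMDiT block
    applies joint attention over image and text tokens instead.
    """

    def __init__(self, num_layers: int, dim: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.weights = [rng.normal(scale=dim ** -0.5, size=(dim, dim))
                        for _ in range(num_layers)]

    def forward(self, x, capture_layers):
        """Return the final output plus copies of selected layers' hidden states."""
        captured = {}
        h = x
        for i, w in enumerate(self.weights):
            h = h + np.tanh(h @ w)  # toy residual block
            if i in capture_layers:
                captured[i] = h.copy()
        return h, captured


def denoise_and_capture(model, x_t, num_steps, capture_layers, capture_steps):
    """Toy denoising loop that records hidden states at chosen steps."""
    features = {}
    for step in range(num_steps):
        pred, captured = model.forward(x_t, capture_layers)
        if step in capture_steps:
            features[step] = captured  # in the described design, these feed the 3D generator
        x_t = x_t - pred / num_steps  # placeholder update, not a real noise schedule
    return x_t, features


# Usage: 16 latent tokens of width 32, 8 layers, 10 denoising steps.
tokens = np.random.default_rng(1).normal(size=(16, 32))
model = ToyMMDiT(num_layers=8, dim=32)
final_latent, feats = denoise_and_capture(
    model, tokens, num_steps=10, capture_layers={3, 5}, capture_steps={6})
print(sorted(feats[6]), feats[6][3].shape)  # [3, 5] (16, 32)
```

In a real PyTorch model the same capture would more likely be done with forward hooks on the chosen transformer blocks rather than by rewriting the forward pass.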

This injection process lets the system avoid the errors that a "decode-then-re-encode" loop typically introduces.[1] Many earlier approaches to the back-side problem generated a 2D image of the back and then fed that image in as a secondary input for 3D reconstruction. If the generated image contained even minor perspective or lighting errors, those flaws were amplified during the 3D conversion. Know3D sidesteps this by feeding the intermediate hidden states directly into the 3D generator through a parallel cross-attention branch. This design is intended to keep the generated back side geometrically consistent with the front view while following the user's text instructions.[1][2]
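A parallel cross-attention branch can be sketched as a second attention path running alongside an existing one, with both outputs added to the residual stream. The NumPy sketch below is an illustration under assumptions, not Know3D's published implementation: the class name `ParallelCrossAttentionBlock`, the dimensions, the single-head attention, and the scalar `gate` initialized to zero (a common trick for adding new conditioning to a pretrained model without disturbing it at the start of training) are all hypothetical choices.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(queries, context, w_q, w_k, w_v):
    """Single-head scaled dot-product attention from queries to context tokens."""
    q, k, v = queries @ w_q, context @ w_k, context @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return softmax(scores) @ v


class ParallelCrossAttentionBlock:
    """Hypothetical block: an existing branch plus a parallel injected branch."""

    def __init__(self, dim, image_dim, injected_dim, seed=0):
        rng = np.random.default_rng(seed)

        def proj(d_in, d_out):
            return rng.normal(scale=d_in ** -0.5, size=(d_in, d_out))

        # Existing branch: 3D latent tokens attend to image-conditioning tokens.
        self.base = (proj(dim, dim), proj(image_dim, dim), proj(image_dim, dim))
        # Added branch: the same queries attend to injected diffusion hidden states.
        self.extra = (proj(dim, dim), proj(injected_dim, dim), proj(injected_dim, dim))
        self.gate = 0.0  # assumption: zero-init so the new branch starts as a no-op

    def __call__(self, h, image_tokens, injected_states):
        out = h + cross_attention(h, image_tokens, *self.base)
        return out + self.gate * cross_attention(h, injected_states, *self.extra)


# Usage with random stand-in tensors.
rng = np.random.default_rng(42)
h = rng.normal(size=(64, 32))             # 64 latent 3D tokens, width 32
image_tokens = rng.normal(size=(10, 48))  # front-view image features
injected = rng.normal(size=(16, 24))      # hidden states from the diffusion model
block = ParallelCrossAttentionBlock(dim=32, image_dim=48, injected_dim=24)
out_off = block(h, image_tokens, injected)  # gate = 0: same as the base branch alone
block.gate = 1.0
out_on = block(h, image_tokens, injected)   # gate = 1: injected states contribute
```

In this sketch the two branches share the same queries but read from different context tokens, so the injected states can steer the hidden-side geometry without replacing the image conditioning the base model already relies on.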

The practical implications are greatest in fields where rapid asset prototyping matters. In games and film, artists often spend hours manually modeling the occluded parts of assets designed from concept art or single-perspective sketches. With Know3D, an initial 3D mesh can be generated with a semantically plausible back side automatically, potentially saving much of that manual work. For example, a designer with an image of a vintage dresser could prompt the model to give the back side realistic structural bracing, or even a "hidden compartment" that matches the period style of the front. This kind of control turns a "black box" generation step into something closer to a professional creative tool.

Experiments reported by the research team indicate that Know3D outperforms existing state-of-the-art models in both geometric fidelity and semantic alignment. On the HY3D-Bench dataset, Know3D achieved higher PSNR and SSIM, which measure how closely rendered views match reference images, and lower Chamfer Distance, which measures how far the generated surface deviates from the ground-truth shape.[9] It also scored higher on semantic-consistency metrics based on ULIP and Uni3D.[9] These metrics compare generated shapes with the input image and text in a learned multimodal embedding space, so higher scores suggest the objects are not just visually appealing but more faithful interpretations of the original input. The model also synthesized diverse, structurally sound components, such as changing the material of a chair's backrest or adding complex appendages to biological models, without breaking the overall identity of the object.
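For readers unfamiliar with these metrics, the sketch below computes a symmetric Chamfer Distance between two point clouds and PSNR between two images. Exact conventions vary between benchmarks (squared vs. unsquared distances, sums vs. means, point-sampling density), so these definitions are assumptions for illustration, not HY3D-Bench's official evaluation code. SSIM and the ULIP/Uni3D scores need considerably more machinery and are omitted.

```python
import numpy as np


def chamfer_distance(p, q):
    """Symmetric Chamfer distance between point sets p (N, 3) and q (M, 3).

    Convention assumed here: mean squared nearest-neighbour distance,
    summed over both directions. Lower is better.
    """
    d = ((p[:, None, :] - q[None, :, :]) ** 2).sum(axis=-1)  # (N, M) squared distances
    return d.min(axis=1).mean() + d.min(axis=0).mean()


def psnr(img_a, img_b, max_val=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, max_val]. Higher is better."""
    mse = np.mean((img_a - img_b) ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(max_val ** 2 / mse)


# Usage: corners of a unit cube vs. the same corners shifted by 0.1 on every axis.
cube = np.array([[x, y, z] for x in (0, 1) for y in (0, 1) for z in (0, 1)], dtype=float)
print(chamfer_distance(cube, cube))        # 0.0
print(chamfer_distance(cube, cube + 0.1))  # ≈ 0.06 (0.03 in each direction)

ref = np.zeros((4, 4))
print(psnr(ref, ref + 0.1))                # ≈ 20.0 dB (MSE = 0.01)
```

The pairwise-distance matrix is fine for small examples; evaluation on dense point clouds would normally use a k-d tree or GPU nearest-neighbour search instead.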

The research also reflects a shift in how AI developers approach the scarcity of 3D training data. By drawing on the world knowledge of multimodal foundation models, which are trained on vast image and text corpora, Know3D effectively uses language as a proxy for 3D intuition. If the model knows what a "backpack" is and how one typically hangs on a "chair," it can apply that linguistic and visual common sense to reconstruct a plausible 3D arrangement even if it has never seen a 3D model of that specific combination. This suggests that progress in 3D AI may depend less on collecting ever more 3D scans and more on integrating the reasoning capabilities of existing multimodal models.

The researchers also acknowledge current limitations: the structural robustness of the generated assets still depends heavily on the quality of the underlying multimodal foundation model. If the VLM fails to understand a particularly complex or abstract instruction, the resulting 3D shape may still be distorted.[2] These failure cases may become rarer as foundation models improve. Even so, Know3D marks a notable step away from "lucky guesses" and toward a future in which every side of a digital object is as controllable as the pixels on a screen.

More broadly, Know3D is part of a move toward multimodal workflows in which text, images, and 3D geometry are no longer treated as separate silos. Being able to "talk" to a 3D model while it is being created could make 3D content creation more accessible to non-experts while still offering the precision professional developers need. As such techniques reach standard design software, the gap between a 2D concept and a usable 3D asset should keep shrinking, making the creative process more fluid across digital media. In short, the work connects abstract human intent more directly to concrete geometry in how the virtual worlds of tomorrow are built.