Hackers Infiltrate LiteLLM Supply Chain to Steal Sensitive Credentials from Global AI Organizations

Malicious code in the LiteLLM proxy exposes critical cloud credentials, highlighting new security risks for autonomous AI agents

March 24, 2026

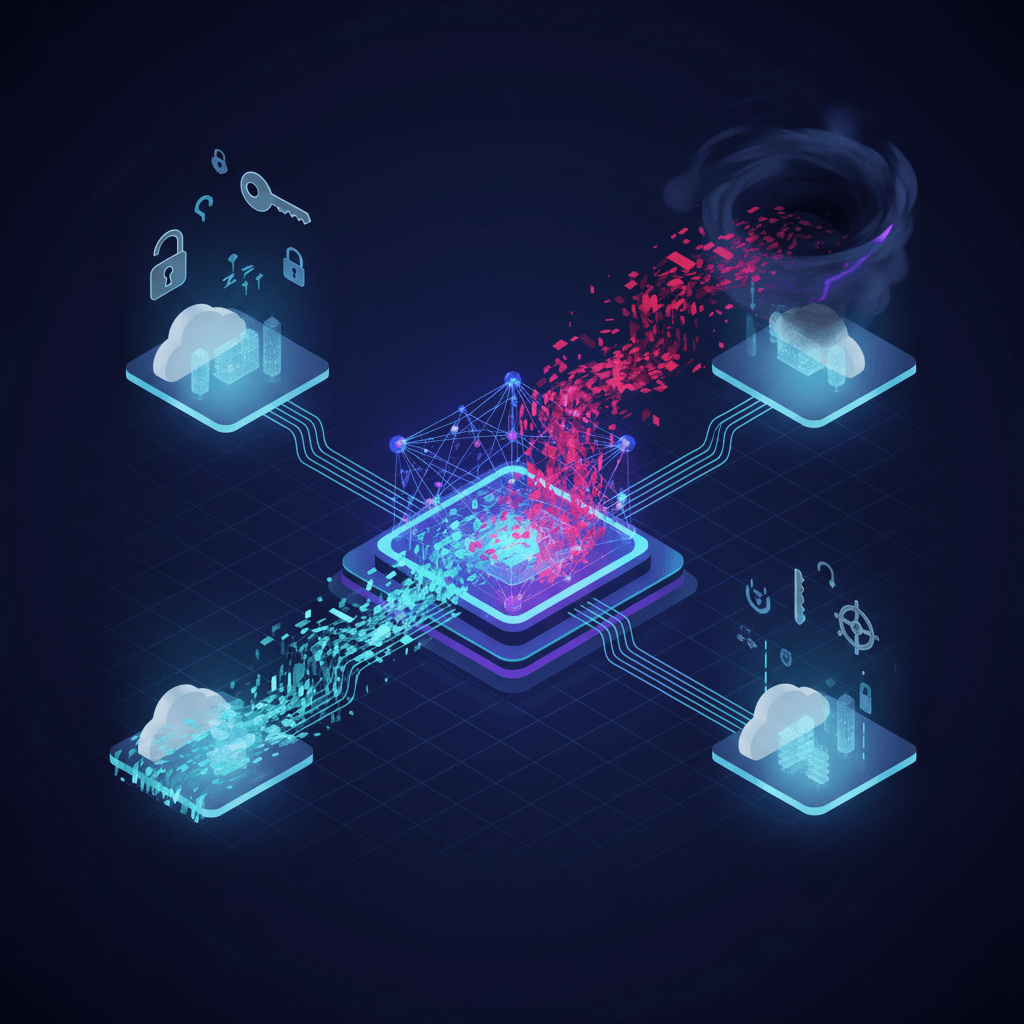

The security landscape for artificial intelligence infrastructure has shifted dramatically following a sophisticated supply chain attack on LiteLLM, a critical open-source proxy used by thousands of organizations to manage and route API calls across more than one hundred different large language models. The compromise, which involved the injection of credential-stealing malware into official distribution channels, has sent shockwaves through the AI development community. As a tool that effectively serves as the central nervous system for AI-integrated applications, the breach of LiteLLM represents one of the most significant security failures in the generative AI era. Security researchers and industry leaders, including NVIDIA AI Director Jim Fan, have characterized the event as a harbinger of a new class of cyberattacks specifically designed to target the unique vulnerabilities of AI agents and the cloud environments that host them.

The attack was a classic supply chain maneuver targeting the Python Package Index, where the malware was bundled into two versions of the library, 1.82.7 and 1.82.8.[1][2] Investigators found that the threat actors, a group identified as TeamPCP, gained unauthorized access to the publisher's credentials, likely through an account takeover or a compromise of the maintainer's continuous integration and delivery pipeline.[1] Once in control, the attackers uploaded the malicious versions, which included a hidden file named litellm_init.pth.[2] Files with the .pth extension are particularly dangerous in the Python ecosystem: once one lands in site-packages, the interpreter processes it at every startup and executes any line that begins with an import statement. The malware would therefore run as soon as any Python process started in the affected environment, even if the developer never imported or used the library.
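To make the .pth mechanism concrete, the following is a minimal sketch of a scanner that lists the lines in .pth files that Python's site module would execute at startup. The function names are hypothetical, and the check only mirrors the documented rule that lines starting with `import` followed by a space or tab are executed; it says nothing about whether a given line is malicious.

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(directory):
    """Return (file name, line) pairs for .pth lines the site module would execute."""
    findings = []
    for pth in sorted(Path(directory).glob("*.pth")):
        for line in pth.read_text(errors="replace").splitlines():
            # site.py executes lines starting with "import" plus a space or tab;
            # other non-comment lines are treated as paths to add to sys.path.
            if line.startswith(("import ", "import\t")):
                findings.append((pth.name, line.strip()))
    return findings


def site_directories():
    """Collect the existing site-packages directories of the running interpreter."""
    paths = sysconfig.get_paths()
    candidates = {paths["purelib"], paths["platlib"], site.getusersitepackages()}
    return sorted(d for d in candidates if Path(d).is_dir())


if __name__ == "__main__":
    for directory in site_directories():
        for name, line in executable_pth_lines(directory):
            # Truncate long lines so an obfuscated payload does not flood the terminal.
            print(f"{directory}/{name}: {line[:100]}")
```

Legitimate packages also ship executable .pth lines (editable installs are a common case), so any output is a list of candidates for manual review rather than a verdict.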

The payload contained within these compromised versions was designed for comprehensive data exfiltration. Upon activation, the malware conducted a deep sweep of the host system to harvest a wide array of sensitive information. This included SSH keys, cloud provider credentials, cryptocurrency wallet files, and the various API keys that LiteLLM is intended to manage.[1] Of particular concern was the malware’s ability to locate and extract Kubernetes configuration files, which are frequently stored in default directories on developer machines and in production environments. By obtaining these configurations, the attackers were able to move laterally across cloud systems, pivoting from a single compromised workstation into entire Kubernetes clusters where high-value data and computing resources are concentrated.
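For a sense of how much sits in default locations, here is a rough sketch that reports which well-known credential paths exist under a home directory. The path list is an assumption chosen for illustration (common defaults for SSH, Kubernetes, and major cloud CLIs); it is not the malware's actual target list, which the reporting does not enumerate.

```python
from pathlib import Path

# Assumed, illustrative defaults; NOT the malware's actual target list.
DEFAULT_SECRET_PATHS = {
    "SSH keys": ".ssh",
    "Kubernetes config": ".kube/config",
    "AWS credentials": ".aws/credentials",
    "Google Cloud config": ".config/gcloud",
    "Azure config": ".azure",
}


def secrets_present(home):
    """Return the categories whose default path exists under the given home directory."""
    home = Path(home)
    return sorted(
        label for label, rel in DEFAULT_SECRET_PATHS.items() if (home / rel).exists()
    )


if __name__ == "__main__":
    for label in secrets_present(Path.home()):
        print(f"present on this host: {label}")
```

The sketch only tests for existence and never reads file contents, so it is safe to run as an inventory step on a machine under investigation.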

The sophistication of the exfiltration process further highlights the professional nature of the attack. The harvested data was encrypted with a 4096-bit RSA key so that network-level security tools scanning for plain-text secrets would not detect it leaving the network. The encrypted packages were then sent to a lookalike domain controlled by TeamPCP, designed to mimic legitimate service endpoints and blend in with normal traffic. This method allowed the breach to remain undetected for an extended period, as many security systems are not configured to flag outgoing traffic to domains that appear structurally similar to trusted AI service providers.

The attack fits a broader and more concerning trend.[3] The compromise of LiteLLM appears to be part of a coordinated campaign by TeamPCP targeting the software supply chain's most trusted components. The same group was recently linked to security breaches involving other foundational tools such as the vulnerability scanner Trivy and the application security platform Checkmarx.[1] In the case of LiteLLM, the attackers reportedly exploited a misconfiguration in the Trivy GitHub Actions environment to steal a privileged access token.[2] This token gave them the permissions needed to manipulate the CI/CD pipeline and publish malicious releases. The irony of a security tool serving as the primary vector for infecting an AI gateway has not been lost on industry analysts, who point out that the very tools meant to protect the ecosystem are now being weaponized against it.

The implications for the AI industry are profound, particularly concerning the rise of autonomous AI agents.[3] Modern AI applications increasingly rely on agents that have the authority to execute code, browse the web, and interact with internal databases. When an AI proxy like LiteLLM is compromised, it provides threat actors with a direct line into the "brain" of these agents. Jim Fan’s warning emphasizes that we are entering an era where AI agents represent a massive, expanded attack surface. Because these agents are designed to be helpful and follow instructions, a hijacked proxy can feed them malicious prompts or redirect their outputs to unauthorized destinations. The LiteLLM hack demonstrates that the security of an AI agent is only as strong as the proxy it uses to communicate with the world.

Furthermore, the scale of the LiteLLM ecosystem makes the potential impact difficult to quantify. With over 97 million monthly downloads and thousands of dependent projects, including major frameworks from Google and Microsoft, the "blast radius" of the malware could extend to almost every major tech firm and research institution currently building in the generative AI space. The fact that the attackers also defaced the BerriAI GitHub repository and initially closed security reports using compromised accounts illustrates the total control they exerted over the project’s infrastructure. This level of compromise undermines the fundamental trust that the open-source community relies on to build and share complex software.

In the wake of the incident, security experts are urging a total shift in how AI-centric organizations manage their dependencies. The standard practice of pulling the "latest" version of a package is now being viewed as an unacceptable risk for critical infrastructure. Recommendations now focus on pinning specific, verified versions of libraries and using hash verification to ensure that the code on a local machine matches the known-good source. Additionally, the practice of granting broad, cluster-wide permissions to AI service accounts is being re-evaluated in favor of zero-trust architectures where every action taken by an AI agent is strictly monitored and limited to the minimum necessary scope.
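The hash verification recommended above can be sketched as follows: compute the SHA-256 digest of a downloaded artifact and compare it with a digest recorded when the version was vetted. The function names are hypothetical, and where the pinned digest comes from (a lock file, an internal registry) is left as an assumption.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Stream the file in chunks so large wheels do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pinned_hash(path, expected_hex):
    """Return True only if the file's SHA-256 equals the pinned hex digest."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.strip().lower())
```

In practice this check is usually delegated to the installer. pip, for example, offers a hash-checking mode (`--require-hashes`) that refuses any package whose digest is not listed alongside its pinned version in the requirements file.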

Current remediation efforts involve the total quarantine of the affected LiteLLM versions on the Python Package Index, effectively blocking further downloads. However, for organizations that have already installed the malicious versions, the advice is clear: rotating every credential that was present on the compromised system is the only way to ensure safety. This includes not just the AI API keys, but also cloud infrastructure secrets, database passwords, and SSH keys. As the industry moves forward, the LiteLLM hack serves as a stark reminder that the rapid adoption of AI must be matched by equally rapid advancements in supply chain security and cloud-native defense strategies. The transition from simple chatbots to complex, agentic systems requires a new security paradigm that assumes every link in the chain—from the model to the proxy to the cloud—is a potential target for sophisticated global actors.
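As a first triage step, an environment can be checked for the two affected releases. This is a minimal sketch using the standard library; the version set comes from the reporting above, while the function names are hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version

# Affected releases as reported in this article.
AFFECTED_VERSIONS = {"1.82.7", "1.82.8"}


def is_affected(installed_version):
    """Return True if the given version string is one of the reported malicious releases."""
    return installed_version is not None and installed_version.strip() in AFFECTED_VERSIONS


def installed_litellm_version():
    """Return the installed litellm version, or None if it is not installed here."""
    try:
        return version("litellm")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_litellm_version()
    if is_affected(found):
        print(f"litellm {found} is an affected release: rotate all credentials on this host")
    else:
        print(f"litellm version in this environment: {found}")
```

A clean result covers only the current environment at the current moment. A host that installed an affected release earlier and has since upgraded would pass this check, so installation logs and lock-file history are still worth reviewing.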