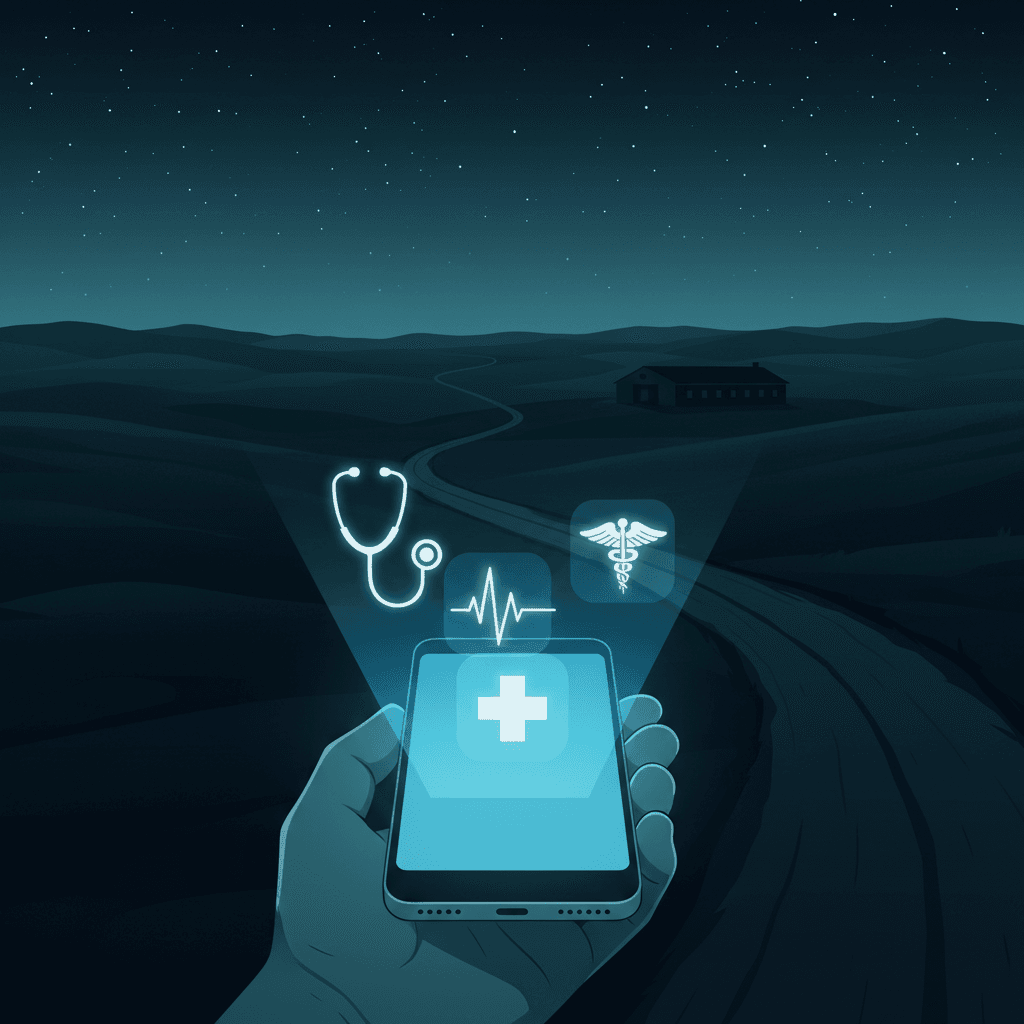

ChatGPT becomes a primary medical entry point for millions living in hospital deserts

New data reveals millions in hospital deserts are turning to AI for after-hours triage and complex insurance navigation.

April 6, 2026

The integration of artificial intelligence into everyday life has reached a notable inflection point in healthcare, according to a data release from OpenAI.[1][2][3][4] The report, which analyzed anonymized usage patterns among its 800 million weekly active users, points to a significant shift in how people handle medical uncertainty.[5] According to the findings, ChatGPT now processes millions of health-related queries every week in the United States alone, with a disproportionate share originating in so-called hospital deserts.[5][2][6] Users in these underserved areas, defined as places more than a thirty-minute drive from a general medical or children's hospital, send an average of 600,000 healthcare-related messages every week.[2] This shift toward AI-driven assistance suggests that, for a significant portion of the population, digital tools are no longer merely supplemental resources but have become a primary point of entry for medical information.

The trend is driven by more than distance from physical clinics; it is also tied to the limits of traditional clinical operating hours.[6] OpenAI's analysis indicates that seven in ten healthcare-related conversations on its platform take place outside standard business hours, typically 8 a.m. to 5 p.m.[2][6] When urgent health questions arise at night or on weekends, when primary care offices are closed and emergency rooms may be prohibitively expensive or far away, patients are increasingly turning to large language models for immediate guidance. The result resembles an accidental triage system in which AI acts as a round-the-clock consultant for millions who feel excluded from, or poorly served by, traditional medical infrastructure. While the company maintains that its technology is not a replacement for professional medical advice, the volume of after-hours traffic indicates that people are using the tool to fill a real gap in available care.[4]

The geographic distribution of these queries further underscores systemic gaps in the American healthcare system. States with large rural populations and fewer medical facilities per capita show the highest reliance on AI. Wyoming currently leads the nation in the share of healthcare-related messages originating from hospital deserts, followed closely by Oregon, Montana, South Dakota, and Vermont.[2][6] In these states, where a visit to a specialist can require hours of travel, the ability to decode medical jargon instantly or explore possible explanations for symptoms represents a meaningful gain in patient autonomy. For people in these regions, the gap in access to care is being partly bridged by a tool that offers instant, low-cost information, even if it lacks the clinical certainty of an in-person examination. The trend reflects a growing reality for the AI industry: as models become more capable, they are being drafted into roles their creators never intended, serving as a frontline resource in regions where physical healthcare infrastructure is in retreat.

Beyond the immediate search for answers about symptoms, the data shows that users are also relying on AI to navigate the bureaucratic complexity of the medical industry.[5][7] Approximately 1.5 million to 2 million messages per week focus specifically on health insurance, ranging from requests to compare coverage plans to help decoding medical bills and navigating the claims process.[8][3][5][6] This administrative use case points to the secondary burden of the healthcare system: not just the illness itself, but the financial and logistical hurdles that follow. Users are employing AI to spot billing errors, understand eligibility requirements, and even draft appeals of denied insurance claims.[4] The value of AI in this space, in other words, lies as much in navigating the business of health as in understanding its science. Modern insurance has become complex enough that a significant portion of the public feels it needs an AI assistant to make sure it receives the coverage it pays for.

The implications for the AI industry and the medical community are significant, particularly around accuracy and the risk of hallucinations, in which a model produces confident but false information. OpenAI and other tech companies continue to issue firm disclaimers that their models are not medical devices and should not be used for diagnosis. However, a survey included in the report found that three in five U.S. adults have used AI tools for health-related questions in the past three months, and 55 percent of those users did so specifically to check or explore symptoms, which works out to roughly a third of all U.S. adults.[3][2][8] This gap between official corporate policy and actual consumer behavior poses a major challenge for regulators and developers alike. If millions of people already use AI as a de facto diagnostic tool, the pressure on companies to deliver "clinical-grade" accuracy becomes acute. A misleading or flatly incorrect answer carries far greater risk in a healthcare context, where the stakes are measured in patients' health and even lives rather than in simple text errors.

To address these concerns, the AI industry is going through a period of rapid professionalization and specialization. OpenAI has recently hired a series of prominent healthcare veterans, including co-founders of major medical networks and digital health experts, to lead its health products division. The hires suggest a long-term strategy of moving beyond general-purpose chatbots toward refined, medically vetted tools that can operate within a more formal regulatory framework. Physicians are shifting as well: data from the American Medical Association indicates that physician use of AI for administrative and clinical support has nearly doubled in recent years. Doctors increasingly use these tools for real-time monitoring, triage, and summarizing patient histories, which suggests that the future of medicine will be less a choice between human and machine than a collaboration between the two.

The regulatory landscape is also shifting to meet this new reality.[5] Agencies such as the FDA are re-evaluating how to govern AI-powered consumer tools that provide medical information.[5] Regulators must balance fostering innovation that could save lives in underserved areas against protecting the public from automated misinformation. Proposals now circulating would clarify the regulatory pathway for AI-powered medical devices and define the scope of regulation for services that support, rather than replace, physician judgment.[1] The goal is a system in which AI can act as a "healthcare ally," a term OpenAI uses in its report, helping users interpret data and navigate access gaps without straying into unauthorized medical practice.

Ultimately, the data from hospital deserts shows that the public's need for accessible information is outpacing traditional healthcare reform. As clinics close and costs rise, the pull toward free, instant, round-the-clock digital assistance is likely to grow stronger. The 600,000 weekly messages from hospital deserts are more than data points; they signal where the current system is failing and where technology is being called upon to act as a safety net. Whether the AI industry can manage this responsibility remains to be seen, but the chatbot has clearly arrived as a primary health resource for many people. The future of healthcare accessibility may be defined not only by the number of hospital beds in a county but also by the quality and safety of the AI models on its residents' phones.