Anthropic labels OpenAI as the tobacco industry of AI in a bitter ideological battle

Exploring the bitter ideological rift and personal animosity as Anthropic positions itself as a cautious antidote to OpenAI’s expansion.

March 28, 2026

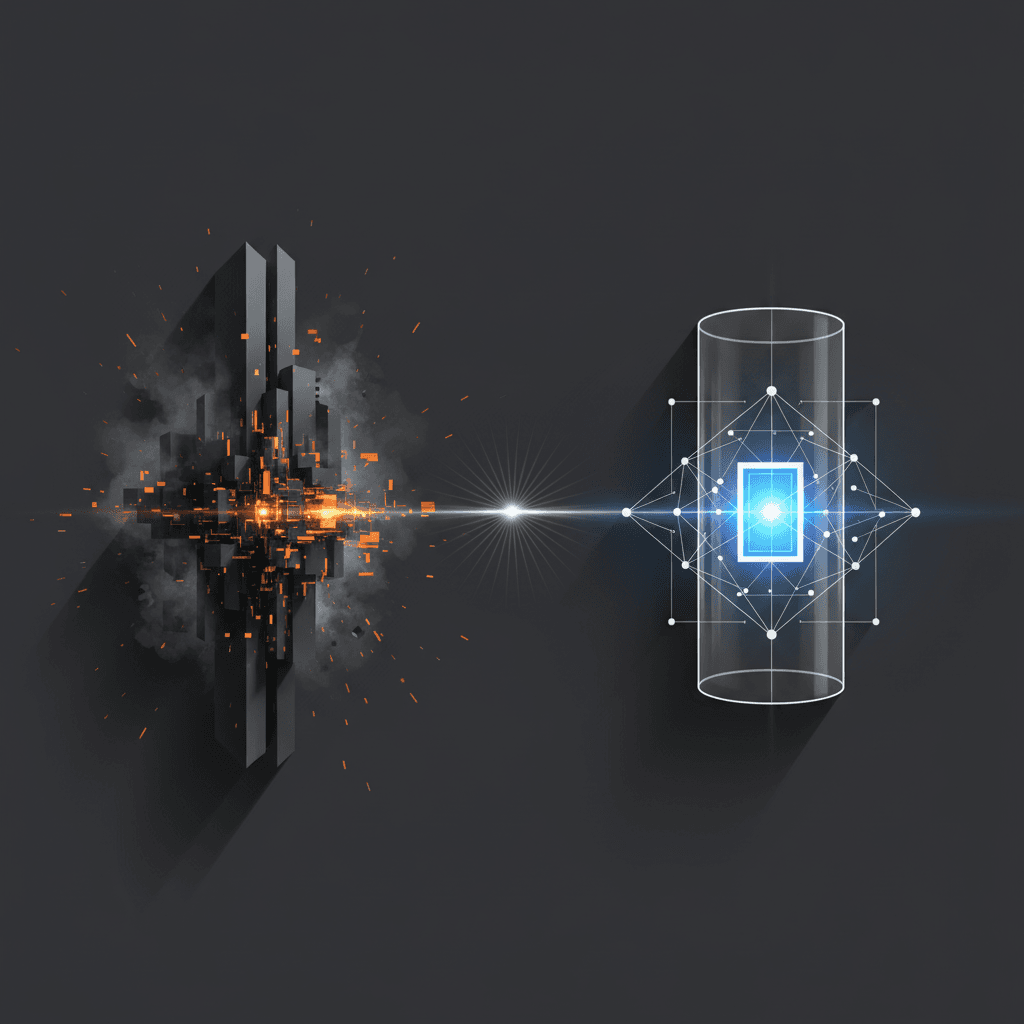

The rivalry between Anthropic and OpenAI has transcended traditional corporate competition, evolving into a fundamental ideological conflict that currently defines the trajectory of the artificial intelligence industry. While both organizations emerged from a shared ambition to develop artificial general intelligence, recent reports indicate that Anthropic increasingly views itself as the essential antidote to what its leadership characterizes as OpenAI’s reckless and commercialized path. This internal perspective, detailed in an investigation into the personal and professional schisms between the two companies, suggests that Anthropic’s founders believe OpenAI has adopted a strategy reminiscent of the tobacco industry: prioritizing growth, public adoption, and profitability while allegedly obscuring or minimizing the profound risks associated with the technology.

The roots of this bitter divide are deeply personal, tracing back to the 2021 exodus of high-ranking OpenAI researchers led by siblings Dario and Daniela Amodei.[1] While the public narrative of their departure initially focused on disagreements over the direction of AI safety research, new details suggest the split was fueled by a long-standing power struggle and a fundamental breakdown in trust with OpenAI Chief Executive Officer Sam Altman. Within Anthropic’s internal culture, the sentiment toward Altman has often been one of severe skepticism. Dario Amodei reportedly described Altman as mendacious in private communications, a characterization that emerged after a series of strategic disagreements over how to balance the scaling of large language models with the implementation of rigorous safety guardrails. This friction reached a tipping point during the development of GPT-3, when concerns about the model’s potential to spread misinformation led the Amodei siblings to oppose its wide public release—a stance that was eventually overruled by OpenAI’s leadership.

Central to Anthropic’s self-conception is the belief that OpenAI’s business model is built on an irresponsible "race to the top" that mimics the behavior of legacy industries that ignored public health for private gain. The comparison to the tobacco industry serves as a potent metaphor within Anthropic’s offices.[2] Just as big tobacco companies were accused of engineering nicotine dependence while publicly denying the health consequences, Anthropic’s leadership reportedly fears that OpenAI is engineering a global dependence on AI systems without fully understanding or addressing the catastrophic risks they may pose. This internal critique is not merely philosophical; it has manifested in sharp reactions to OpenAI’s strategic choices.[3] For example, when OpenAI secured a partnership with the Department of Defense—a deal Anthropic had reportedly declined on ethical grounds—the move was seen by the Anthropic camp as further evidence of a pattern of behavior that prioritizes institutional power over the original mission of "safe" AI development.

The structural differences between the two firms are a direct result of these diverging philosophies.[3] Anthropic was founded as a Public Benefit Corporation, a legal designation that allows its directors to prioritize safety and societal benefit over the fiduciary duty to maximize shareholder value.[3][4] To further insulate the company from the pressures of venture capital, Anthropic implemented a Long-Term Benefit Trust, a group of independent trustees tasked with ensuring the company remains true to its safety-centric mission. In contrast, OpenAI’s governance has faced significant scrutiny following the brief but chaotic ouster and reinstatement of Sam Altman in late 2023.[5][3] That episode exposed the tensions within OpenAI’s unique "capped-profit" model, where a non-profit board attempted to exercise control over a multi-billion-dollar commercial entity.[6] For Anthropic’s founders, the turmoil at OpenAI served as a retroactive validation of their decision to leave, reinforcing their view that OpenAI’s structure is inherently unstable and susceptible to being co-opted by commercial interests.

This ideological rift has also led to a technical divergence in how the two companies approach AI alignment. Anthropic has championed a method known as Constitutional AI, which attempts to train models based on a written set of principles—a "constitution"—to ensure they remain helpful, honest, and harmless without constant human intervention. This approach is positioned as a more transparent and safer alternative to the reinforcement learning from human feedback (RLHF) methods popularized by OpenAI. Anthropic’s marketing of its Claude series of models frequently highlights these safety features, presenting them as the "responsible" choice for enterprise clients and governments. However, this positioning has led to accusations of "safety theater" from critics within the industry, including prominent figures at Meta and other competing labs, who argue that Anthropic’s emphasis on safety is as much a branding exercise as it is a scientific breakthrough.

The rivalry is further complicated by the massive influx of capital from Big Tech giants, creating a landscape where both "antidote" and "poison" are funded by the same kind of backers. While OpenAI is inextricably linked to Microsoft through an investment partnership totaling billions of dollars, Anthropic has secured similar levels of funding from Amazon and Google. This reality creates a paradox for Anthropic: to remain a viable competitor and a credible "healthier alternative" to OpenAI, it must participate in the very same high-stakes capital race it critiques. The company is forced to burn through massive amounts of cash to train models that can keep pace with OpenAI’s latest releases, leading some observers to wonder if the commercial pressures of the AI arms race will eventually erode the safety-first culture that Anthropic was built to protect.

The personal animosity between the leadership teams continues to surface in public and private interactions. Reports from industry gatherings describe a palpable cold war between Altman and the Amodeis, including instances where the CEOs have refused to share the stage or even acknowledge one another’s presence during industry forums.[7] This interpersonal friction is exacerbated by political disagreements; for instance, Dario Amodei reportedly expressed deep disapproval of OpenAI President Greg Brockman’s significant financial contributions to specific political super PACs, viewing such moves as further evidence of an "evil" shift toward traditional corporate and political maneuvering.

The implications of this split extend far beyond the two companies.[8][6] The divide represents two competing theories on how humanity should navigate the transition to an AI-driven world.[1] OpenAI’s approach is one of rapid deployment and iterative improvement: getting the technology into the hands of millions as quickly as possible to discover its flaws in the real world. Anthropic’s approach is one of caution and containment, built on the premise that some mistakes with advanced AI cannot be unmade.[8][2] As the two firms continue to jockey for dominance, the "tobacco industry" comparison remains a specter hanging over the sector. If Anthropic is correct, the industry is currently building a dependency on a technology whose long-term side effects are not yet being honestly disclosed to the public.[9] If it is wrong, its focus on safety may simply be a drag on the innovation necessary to solve the very problems it fears.

Ultimately, the emergence of Anthropic as an "antidote" suggests that the AI industry is no longer a monolith driven by a singular vision of progress. It is now a field defined by a deep-seated distrust between its most influential architects. As OpenAI pushes toward more capable models like GPT-5 and beyond, Anthropic’s continued insistence on its "healthier" alternative will serve as a constant, if contentious, check on the industry’s ambitions. The battle between these two philosophies will likely determine whether the future of AI is shaped by the relentless logic of the market or by a new, more guarded framework of digital ethics. For now, the "tobacco industry" metaphor stands as a stark reminder of the stakes, highlighting a rift that is as much about the character of the leaders involved as it is about the code they are writing.