YouTube expands AI likeness detection to all creators to combat unauthorized deepfakes

YouTube expands its advanced facial recognition tools to help all creators combat unauthorized deepfakes and secure their digital identities.

May 16, 2026

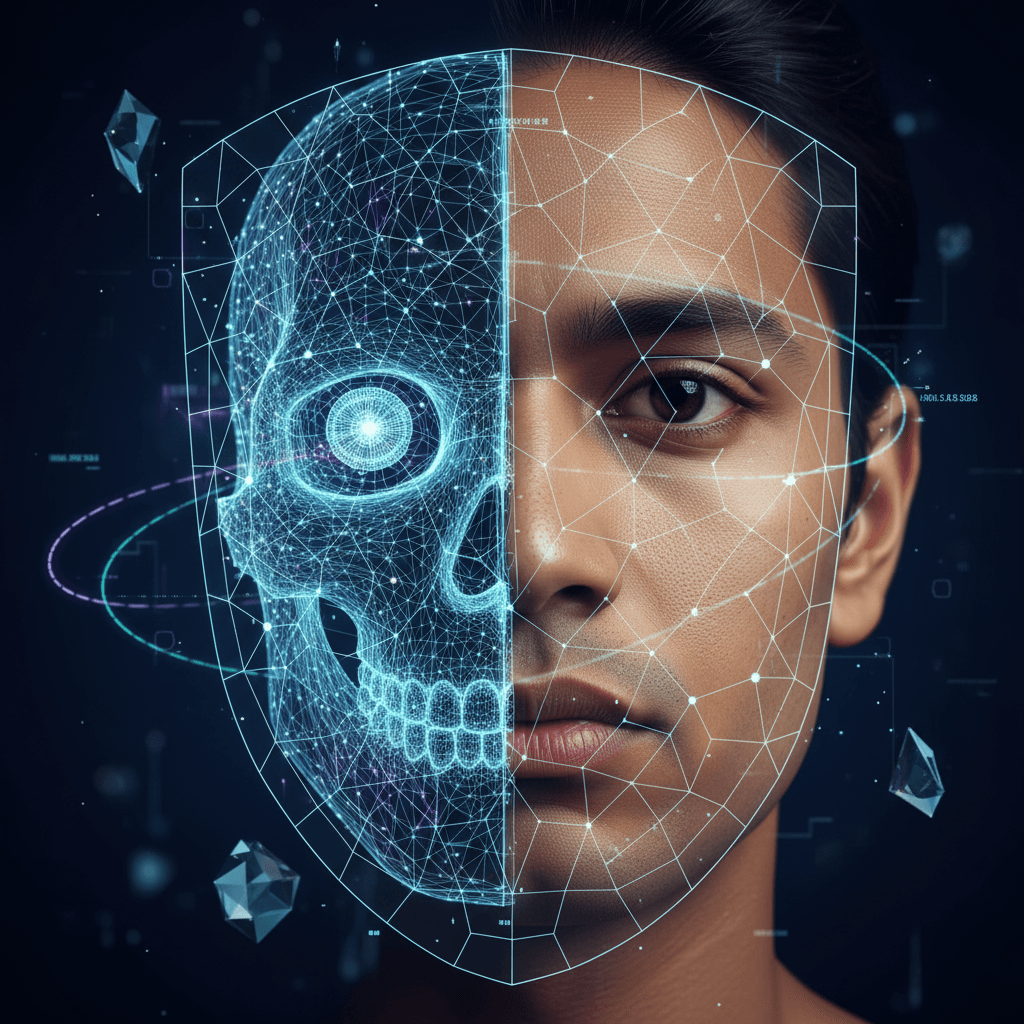

YouTube’s decision to give all creators aged eighteen or older access to its proprietary likeness detection technology represents a pivotal shift in the governance of synthetic media.[1] The expansion takes the tool beyond the YouTube Partner Program and signals a recognition that deepfakes threaten individual digital identities regardless of a channel’s size or commercial success. By integrating likeness detection directly into the YouTube Studio interface, the platform is equipping millions of creators to monitor and respond to unauthorized AI-generated versions of themselves. The move is less a routine technical update than a strategic response to an escalating arms race in generative AI, in which the ease of creating realistic impersonations has outpaced the legal and regulatory frameworks meant to prevent identity theft and misinformation.

The technical backbone of this initiative is an advanced facial recognition system that works on a principle similar to YouTube’s established Content ID system.[1] Rather than scanning for copyrighted audio or video sequences, the likeness detection tool looks for AI-generated or altered faces that resemble a registered creator.[2][1] To use the system, creators must first complete a rigorous enrollment process that includes submitting a government-issued photo ID and recording a randomized selfie video.[3][4] During this video verification, users are typically asked to perform specific head movements, which helps confirm that the biometric data is current and authentic. Once a creator is verified, YouTube builds a unique facial reference profile and uses it to scan newly uploaded content across the platform.[3] When a match is found, the system flags the video for the creator’s review in a dedicated Likeness Management tab in YouTube Studio. From this dashboard, creators can examine the context in which their likeness appears and then file a privacy removal request, request a synthetic-media label, or ignore the match if they consider the use transformative or authorized.
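YouTube has not published how its matcher works internally. As a rough illustration of the enroll, scan, and review loop described above, the Python sketch below assumes that each detected face is reduced to a numeric embedding and compared against enrolled reference profiles by cosine similarity. Every name here (`ReferenceProfile`, `scan_upload`, `FlaggedMatch`, `review`), the toy three-dimensional vectors, and the 0.85 threshold are hypothetical; only the three review choices come from the description of the Studio dashboard.

```python
import math
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Sequence

# HYPOTHETICAL: threshold chosen for illustration only, not YouTube's value.
MATCH_THRESHOLD = 0.85


class ReviewAction(Enum):
    """The three review choices described for the Likeness Management tab."""
    PRIVACY_REMOVAL_REQUEST = "privacy_removal_request"
    REQUEST_SYNTHETIC_LABEL = "request_synthetic_label"
    IGNORE = "ignore"


@dataclass
class ReferenceProfile:
    """Facial reference built at enrollment (hypothetical embedding format)."""
    creator_id: str
    embedding: Sequence[float]


@dataclass
class FlaggedMatch:
    """A match queued for the creator's review; nothing is removed automatically."""
    creator_id: str
    video_id: str
    similarity: float
    action: Optional[ReviewAction] = None


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def scan_upload(
    video_id: str,
    face_embeddings: Sequence[Sequence[float]],
    profiles: Sequence[ReferenceProfile],
    threshold: float = MATCH_THRESHOLD,
) -> list[FlaggedMatch]:
    """Compare every face found in a new upload against every enrolled profile."""
    flagged = []
    for profile in profiles:
        # Keep the closest face in the video for this creator.
        best = max(
            (cosine_similarity(face, profile.embedding) for face in face_embeddings),
            default=0.0,
        )
        if best >= threshold:
            flagged.append(FlaggedMatch(profile.creator_id, video_id, best))
    return flagged


def review(match: FlaggedMatch, action: ReviewAction) -> FlaggedMatch:
    """Record the creator's decision on a flagged match."""
    match.action = action
    return match


# Toy 3-dimensional embeddings; real face embeddings are far larger.
profiles = [
    ReferenceProfile("creator_a", [1.0, 0.0, 0.0]),
    ReferenceProfile("creator_b", [0.0, 1.0, 0.0]),
]
matches = scan_upload("video_123", [[0.9, 0.1, 0.0], [0.0, 0.0, 1.0]], profiles)
for m in matches:
    print(m.creator_id, round(m.similarity, 3))  # prints: creator_a 0.994
review(matches[0], ReviewAction.REQUEST_SYNTHETIC_LABEL)
```

Leaving the final decision with the creator, rather than deleting on a match, mirrors the review step described above and leaves room for transformative or authorized uses that a similarity score alone cannot judge.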

The democratization of these protection tools addresses a critical vulnerability in the modern digital creator economy.[4] For years, only high-profile public figures and creators within the formal Partner Program possessed the resources or platform leverage to combat sophisticated impersonation. However, smaller creators and those who do not monetize their content have increasingly become targets for AI-driven scams, including deepfake advertisements for fraudulent products or unauthorized pornographic material. By removing the monetization barrier, YouTube is acknowledging that digital identity is a fundamental right rather than a professional privilege. This shift is particularly significant because smaller channels often lack the legal teams or automated monitoring services available to major influencers. The automated nature of the scan provides these individuals with a "face shield" that works in the background, offering a level of proactive security that was previously impossible to achieve through manual reporting alone.

From an industry-wide perspective, YouTube’s stance places it at the forefront of a global debate over the ethics of generative artificial intelligence and digital personhood. The development aligns with emerging legislative efforts such as the proposed NO FAKES Act, which seeks to establish a federal right to one’s own voice and likeness in the United States. As technology giants face mounting pressure to police the proliferation of low-quality AI-generated content known as "slop," automated detection tools are becoming a competitive necessity. Compared with rivals such as Meta and TikTok, which have focused primarily on labeling AI content through metadata and user-led reporting, YouTube’s automated face-matching system offers a more aggressive, preventive approach. It points to a new standard for platform responsibility, in which the host of the content provides the infrastructure to detect unauthorized synthetic recreations rather than placing the entire burden of proof on the individual whose likeness has been exploited.

Despite the technological leap, the widespread rollout of likeness detection presents several complex challenges and limitations.[5] The system must navigate the blurred lines of fair use, particularly in the realms of political satire, parody, and news reporting. YouTube has indicated that its internal review process for removal requests will weigh the context of the synthetic content heavily. For instance, an AI-generated caricature used in a comedy sketch might be protected as creative expression, whereas a deepfake used to endorse a commercial product without consent would likely be flagged for immediate deletion. Furthermore, the tool is currently focused almost exclusively on visual matches.[5][1][6] Voice cloning, which is one of the fastest-growing impersonation risks, is not yet fully integrated into the automated scanning process, though the company has signaled plans to extend detection to audio later this year. This gap means that creators remain vulnerable to "voice-only" deepfakes, which are often used in audio-based scams and misinformation campaigns.

The privacy implications of this system also merit close scrutiny.[7][3] To protect a user’s face, the platform must first collect and store sensitive biometric data. YouTube has stated that the identity verification data and reference profiles are used exclusively for the safety feature and are not used to train Google’s broader generative AI models.[3][5] Even so, centralizing such data within a single corporate entity remains a concern for privacy advocates, who fear data breaches or policy shifts that could lead to broader biometric surveillance. To address these fears, the platform allows creators to withdraw from the program at any time and request deletion of their enrollment data. This opt-in model is designed to balance the urgent need for protection against the requirement for user consent in handling biometric identifiers.

In the long term, the expansion of likeness detection will likely reshape the relationship between creators and the platforms they inhabit. As AI tools continue to evolve, the distinction between authentic human performance and synthetic generation will become even harder to perceive.[4] Tools like these provide a foundational layer of trust that is essential for the continued viability of the creator economy. If users cannot trust that the person they are watching is who they claim to be, the social contract of digital influence begins to dissolve. By empowering all adult creators to take ownership of their digital selves, YouTube is not just combating a specific type of fraud; it is attempting to preserve the concept of authenticity in an increasingly artificial landscape. The success of this initiative will be measured not just by the number of videos removed, but by the degree to which it deters malicious actors from attempting to co-opt human identities for profit or deception.

Ultimately, this move by YouTube represents a maturing of the platform’s approach to the AI revolution. After a period focused heavily on the creative potential of generative tools, the industry is now confronting the necessity of safety and rights management. The transition of likeness detection from a pilot program for the elite to a standard feature for the many indicates that automated identity protection is becoming a basic utility of social media. As the technology matures and eventually incorporates voice and other behavioral biometrics, it may serve as a blueprint for a global digital rights infrastructure in which individuals, regardless of their status, have the technical means to defend their digital presence against the frictionless replication of the AI era. This democratization of safety is a necessary step toward ensuring that the benefits of technological innovation do not come at the expense of human agency and dignity.

Sources

[1]

[3]

[4]

[5]

[7]