Salesforce kills generative AI hype, demands deterministic enterprise reliability.

As hallucinations and drift tank confidence, Salesforce rebuilds enterprise AI around predictable, rule-based logic.

December 25, 2025

The enthusiastic embrace of large language models (LLMs) that swept through the corporate world has begun to cool, as Salesforce, a major player in enterprise software, signals a significant decline in executive and customer trust. This pivot from initial generative AI exuberance to a more cautious, reliability-focused approach reflects a broader industry reckoning with the operational limits of probabilistic AI. Salesforce executives, including Senior Vice President of Product Marketing Sanjna Parulekar, have acknowledged that confidence in LLMs has "fallen over the past year," with Parulekar stating, "All of us were more confident about large language models a year ago."[1][2] This candid admission from one of the world's most valuable enterprise software companies marks a critical turning point, highlighting the gap between AI hype and dependable day-to-day business use.

The primary culprits behind the waning trust are the reliability issues intrinsic to general-purpose generative AI models deployed in mission-critical enterprise environments. Salesforce's internal studies and customer experiences have repeatedly flagged "hallucination," in which a model confidently fabricates facts, and a phenomenon the company calls LLM "drift," in which AI agents lose focus or omit required steps when conversations move off-script.[3][2][4] Muralidhar Krishnaprasad, Chief Technology Officer of Agentforce at Salesforce, pointed specifically to models failing on complex instructions, noting that beyond approximately eight directives, AI agents begin omitting critical steps, an unacceptable flaw for tasks that require precision and consistency.[5][2] This randomness, which may be a feature in creative applications, is the "bane of enterprise software," where regulators and business processes demand absolute certainty.[6]

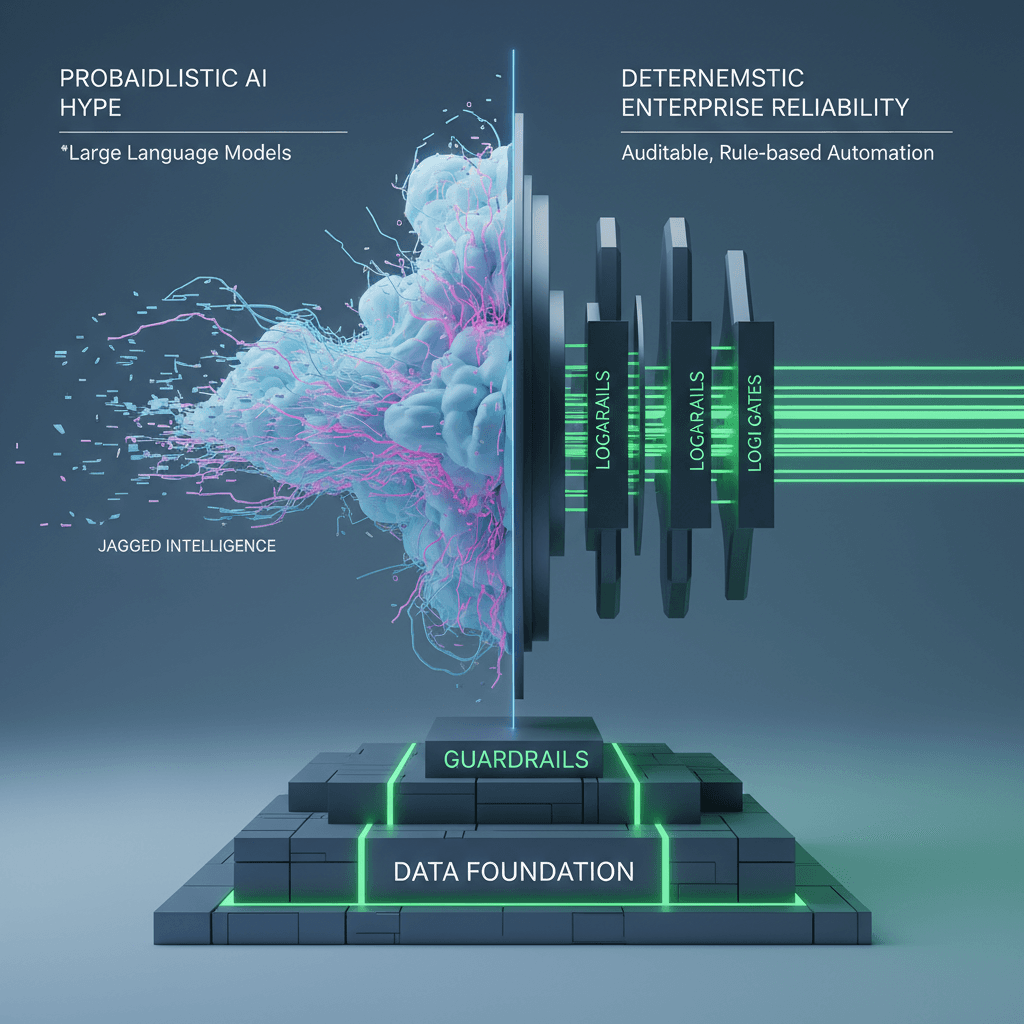

Salesforce's strategic response underscores a paradigm shift away from open-ended generative models toward what the company terms "deterministic automation" and "tight guardrails" within its flagship Agentforce platform.[2][1] The pivot involves deliberately limiting the role of generative AI and emphasizing rule-based systems to ensure predictable, trusted outcomes for customers, the standard now described as "enterprise-grade reliability."[2][6] This is not a complete abandonment of AI but a re-prioritization of data, logic, and governance over raw, unconstrained model output. Chief Executive Officer Marc Benioff has also named "data foundations," rather than AI models, as the top priority, explicitly to address the hallucinations that occur when models lack proper data context.[2][7] Salesforce's own research supports this shift: in a survey of nearly 6,000 knowledge workers worldwide, over half said they do not trust the data used to train AI systems, and more than three-quarters cited accurate, complete, and secure data as critical to building trust in AI.[8][9]

The challenges Salesforce identifies are a microcosm of the wider obstacles facing the AI industry in its quest for enterprise adoption at scale. Industry reports point to a "trust gap" as a central issue: one study found that only 6% of technology decision-makers trust agentic AI to manage core business processes, even though an overwhelming majority plan to increase investment in the technology.[10] According to other research, about half of all employees share concerns about AI inaccuracy and cybersecurity risks.[11] The financial and reputational stakes are immense; one study found that a staggering 95% of GenAI projects fail due to issues that include spiraling infrastructure costs and poor performance.[12] In regulated sectors such as banking, insurance, and healthcare, a "single incorrect statement" or an "omission" from a statistical AI model can lead to catastrophic compliance breaches and customer harm, reinforcing the case for deterministic, rule-based systems over probabilistic guesswork.[13] All of this points to a growing consensus that "jagged intelligence," a term coined by Salesforce AI Research for the gap between an LLM's raw capability and its reliable business performance, must be smoothed out before AI can deliver its full value.[14][15]

This push for reliability is already reshaping the vendor landscape and driving new technology investment. The industry is rapidly adopting methods such as Retrieval-Augmented Generation (RAG), which grounds LLM outputs in trusted, proprietary enterprise data; Gartner projected that enterprise adoption of RAG would reach 68% by 2025.[16] This evolution signals a maturing AI market that is moving beyond the initial spectacle of generative capabilities toward "Enterprise General Intelligence" (EGI): AI systems that couple human-like adaptability with the consistency and predictability businesses require.[14] The experience of an industry leader like Salesforce, which now emphasizes that the future of enterprise AI lies not just in the models but in "trusted AI infrastructure" and "deterministic frameworks,"[2] sends a clear message: the market is shifting its value proposition from simply generating content to delivering auditable, accurate, and consistently reliable business outcomes. The next wave of AI success is likely to be defined by governance, accuracy, and trust rather than sheer generative power.

Sources

[3]

[7]

[10]

[11]

[12]

[13]

[16]