Naver unveils Seoul World Model to stop AI hallucinations by grounding video in physical reality

How Naver’s Seoul World Model uses 1.2 million Street View images to eliminate AI hallucinations and ensure spatial consistency

March 29, 2026

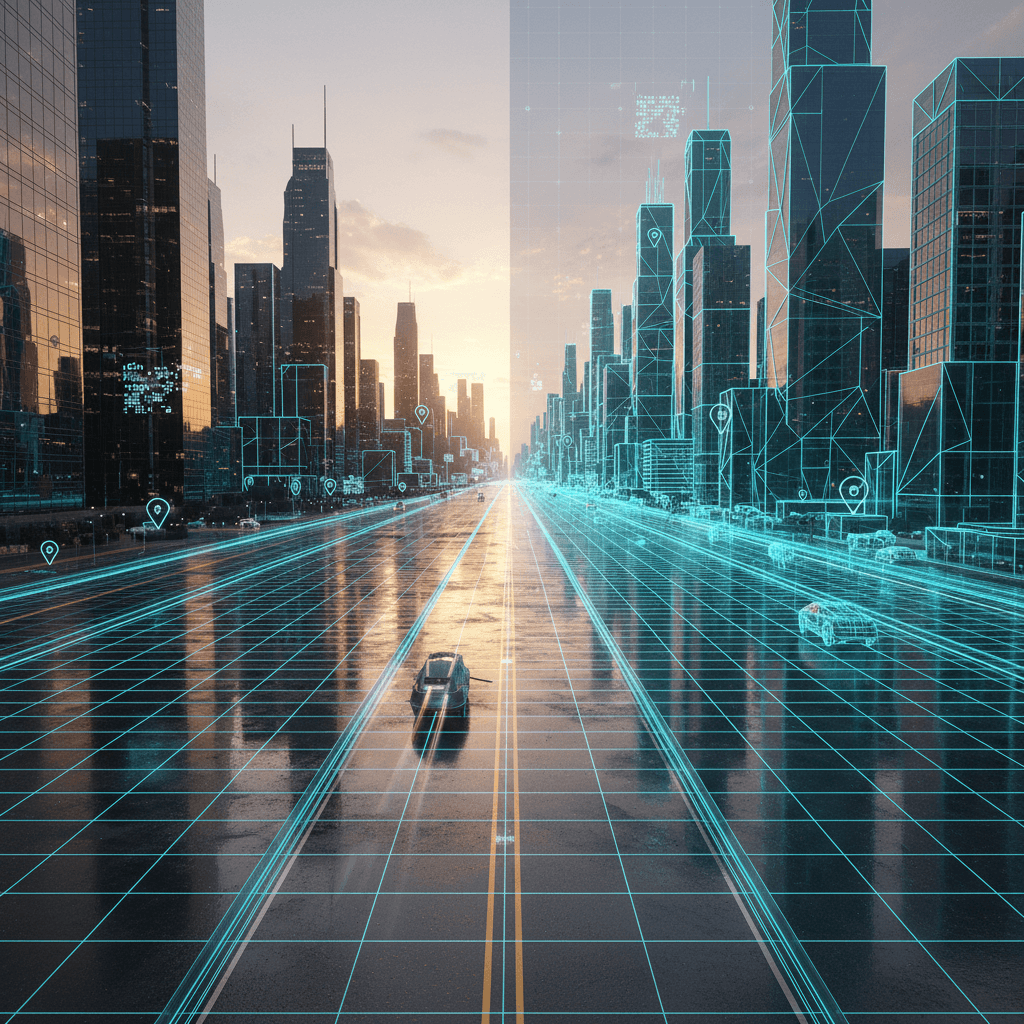

Generative artificial intelligence has reached a point where producing visually stunning imagery is no longer enough. For industries such as autonomous driving, robotics, and urban planning, the primary hurdle has shifted from aesthetic quality to physical and spatial reliability. Contemporary video generation models like OpenAI’s Sora have demonstrated an uncanny ability to "dream" realistic scenes, but they often fail the test of physical consistency: buildings morph as the camera turns, and roads lead into impossible geometries. South Korean internet giant Naver has unveiled its answer to this problem, the Seoul World Model (SWM), a video world model that uses 1.2 million real Street View images to ground AI generation in real-world geometry, so that the software does not hallucinate the structure of entire cities.

The fundamental innovation of the Seoul World Model lies in its move away from pure pixel-based prediction toward a framework anchored in geographic reality. Most existing video models are trained on massive datasets of ungrounded video-text pairs, meaning they learn what a city looks like but not how it is built. Consequently, when asked to simulate a long drive through a metropolitan area, these models eventually lose track of the spatial relationship between objects. Naver’s researchers addressed this by building SWM on a dataset of 1.2 million panoramic images captured across Seoul, integrated with a Retrieval-Augmented Generation (RAG) system.[1] This architecture allows the model to query specific geographic coordinates and camera trajectories, retrieving real-world appearance and geometric references to guide every frame it produces.[1]
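The retrieval step described above can be pictured with a small sketch. This is not Naver's implementation: the `Panorama` record, the function names, and the brute-force nearest-neighbour search are all assumptions made for illustration. It only shows the general idea of looking up the closest stored panoramas for each point on a camera trajectory, so that a generator could be conditioned on them.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


@dataclass(frozen=True)
class Panorama:
    """Hypothetical index record: an image id plus its capture location."""
    pano_id: str
    lat: float
    lon: float


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def retrieve_references(index, lat, lon, k=2):
    """Return the k panoramas closest to (lat, lon), nearest first.

    Brute force for clarity; a real system would presumably use a spatial index.
    """
    return sorted(index, key=lambda p: haversine_m(lat, lon, p.lat, p.lon))[:k]


def references_for_trajectory(index, trajectory, k=2):
    """For each (lat, lon) point on a trajectory, list the ids of its k nearest panoramas."""
    return [
        [p.pano_id for p in retrieve_references(index, lat, lon, k)]
        for lat, lon in trajectory
    ]


# Illustrative data only: three made-up capture points on one street in central Seoul.
index = [
    Panorama("A", 37.5665, 126.9780),
    Panorama("B", 37.5670, 126.9780),
    Panorama("C", 37.5700, 126.9780),
]
trajectory = [(37.5666, 126.9780), (37.5699, 126.9780)]
references = references_for_trajectory(index, trajectory)
```

In this toy setup, each trajectory point is matched to the panoramas physically nearest to it, which is the sense in which generation is "anchored" to a real place rather than to whatever the model imagines.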

By using real Street View data as a foundation, SWM introduces a level of spatial consistency that has previously eluded the AI industry. When the model generates a video of a vehicle turning a corner, it does not have to guess what the building behind that corner looks like; it can reference the actual geometric layout of that specific intersection. This prevents the "shifting world" effect common in other generative models, where background architecture drifts or subtly changes shape as the perspective shifts. This grounding is achieved through a technique called cross-temporal pairing, which forces the model to ignore transient objects like moving cars or pedestrians and focus instead on the persistent spatial structures of the city. The result is a simulation that can maintain accuracy over multi-kilometer trajectories without the accumulation of errors that typically causes AI-generated worlds to dissolve into nonsense.
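The article does not spell out how cross-temporal pairing is implemented. One plausible reading of the name, offered here purely as an assumption, is that captures of the same location taken at different times are paired, so that only what is common to both (buildings, road layout) is a reliable signal, while cars and pedestrians differ between the two. The sketch below shows that pairing step on toy metadata; the tuple layout, the `min_gap_days` threshold, and the function name are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def cross_temporal_pairs(captures, min_gap_days=30):
    """Pair captures of the same location taken at least min_gap_days apart.

    captures: iterable of (capture_id, location_key, day_number) tuples.
    This grouping rule is an illustrative assumption, not the published method.
    """
    by_location = defaultdict(list)
    for capture_id, location_key, day in captures:
        by_location[location_key].append((day, capture_id))

    pairs = []
    for location_key in sorted(by_location):
        shots = sorted(by_location[location_key])  # oldest capture first
        for (day_a, id_a), (day_b, id_b) in combinations(shots, 2):
            if day_b - day_a >= min_gap_days:
                pairs.append((id_a, id_b))
    return pairs


# Toy metadata: three captures of one spot, one capture of another.
captures = [
    ("a1", "cellA", 0),
    ("a2", "cellA", 100),
    ("a3", "cellA", 10),
    ("b1", "cellB", 5),
]
pairs = cross_temporal_pairs(captures)
```

Here the two captures taken only ten days apart are not paired, on the assumption that a longer gap makes it more likely that transient objects have changed while the street itself has not.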

Beyond its reliance on Seoul’s data, one of the most significant breakthroughs of the Seoul World Model is its ability to generalize. According to Naver’s research, the model does not merely memorize the streets of the South Korean capital; it learns the underlying logic of urban environments. This allows the model to achieve zero-shot generalization, meaning it can simulate videos of other global cities—such as San Francisco or Tokyo—without requiring any additional fine-tuning or location-specific training data. This capability suggests that the model has internalized a sophisticated "world logic" regarding how light interacts with glass, how shadows fall across asphalt, and how three-dimensional structures behave under various camera movements. This leap in generalization is a major milestone for the development of "World Models," a concept championed by AI visionaries like Yann LeCun, who argue that true intelligence requires an understanding of the physical world’s constraints.

The implications for the autonomous vehicle industry are particularly profound. Traditional training for self-driving cars relies heavily on expensive, manually collected sensor data or synthetic environments built in game engines like Unreal Engine. While synthetic environments are useful, they often lack the messy complexity and visual diversity of the real world. SWM offers a middle ground: a "neural simulator" that is as visually rich as the real world but as flexible as a video game. Developers can use the model to generate rare "edge case" scenarios—such as a massive flood on a specific street or an unusual obstacle in a busy intersection—to test how an autonomous system’s sensors would react. Because the model allows for text-prompted scenario control, researchers can even simulate fantastical or high-stress events, such as a giant creature appearing between skyscrapers, to push the limits of visual perception algorithms.

In the broader context of the AI industry, Naver’s approach highlights a growing divide between models designed for entertainment and those designed for utility. While the former can afford to be "hallucinatory" for the sake of creativity, the latter must be tethered to reality. The Seoul World Model represents a shift toward "Physical AI," where the goal is to create digital twins that are indistinguishable from reality in both appearance and behavior. By integrating RAG with video generation, Naver has provided a blueprint for how other specialized models might be built, perhaps leading to "Hospital World Models" for medical robotics or "Factory World Models" for industrial automation.

The technical architecture of SWM also addresses the issue of data sparsity. Real-world street-view captures are often taken at intervals of five to twenty meters, which is too far apart for smooth video training.[1] Naver’s team developed an Intermittent Freeze-Frame strategy and a 3D-aware Variational Autoencoder (VAE) to interpolate these gaps, synthesizing smooth, continuous motion from sparse snapshots. This allows the model to navigate freely through the city, supporting trajectories that go beyond simple forward driving to include looking around corners, changing lanes, or performing U-turns. This flexibility is essential for creating truly immersive and interactive digital twins that can be used for urban planning or virtual tourism.
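A quick calculation shows why captures spaced five to twenty meters apart are too sparse for video. The vehicle speed and frame rate below are assumed values chosen for round numbers, not figures from Naver, and the linear interpolation of camera positions is a deliberately naive stand-in: it is not the Intermittent Freeze-Frame strategy or the 3D-aware VAE, only an illustration of how many in-between frames have to be synthesized.

```python
def frames_between_captures(spacing_m, speed_mps, fps):
    """Number of video frames that elapse while travelling spacing_m metres."""
    return spacing_m * fps / speed_mps


def interpolate_positions(start, end, n_frames):
    """Evenly spaced (x, y) camera positions from start to end, endpoints included.

    Naive linear interpolation, used here only to show the size of the gap
    that a learned model would have to fill with plausible imagery.
    """
    (x0, y0), (x1, y1) = start, end
    return [
        (x0 + (x1 - x0) * i / n_frames, y0 + (y1 - y0) * i / n_frames)
        for i in range(n_frames + 1)
    ]


# Assumed values: 10 m/s (36 km/h) vehicle speed and 30 frames per second.
frames_at_5_m = frames_between_captures(5, 10, 30)    # 15.0 frames per gap
frames_at_20_m = frames_between_captures(20, 10, 30)  # 60.0 frames per gap

# Camera positions for a 10 m gap split into 4 steps.
positions = interpolate_positions((0, 0), (10, 0), 4)
```

Under these assumptions, every pair of consecutive Street View captures stands in for roughly 15 to 60 video frames, which is the gap the interpolation components described above are meant to bridge.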

As the race to develop General World Models intensifies, Naver’s Seoul World Model serves as a reminder that the quality of data and the method of grounding are just as important as the scale of computation. While Western tech giants like OpenAI and Google have focused on the breadth of their training sets, Naver has demonstrated the power of depth by mastering a single, massive metropolitan environment and using that mastery to unlock global capabilities. The move from "imagining" a city to "simulating" one marks a fundamental change in how AI understands human environments.

Ultimately, the development of the Seoul World Model suggests that the next generation of AI will be characterized by its ability to respect the laws of physics and the permanence of geography. By stopping the hallucination of cityscapes, Naver has moved the industry closer to a future where AI can be used not just to generate videos, but to safely pilot robots through the streets we walk every day. The success of SWM in maintaining consistency over long distances and its surprising ability to translate its knowledge to unseen cities sets a new benchmark for what is possible in the field of large-scale spatial simulation. This progress reinforces the idea that the most effective way to teach an AI about the world is to give it a map, not just a dream.

Sources

[1]