GAM System Conquers AI's Context Rot, Enabling Unprecedented Long-Term Memory

Its "just-in-time" agentic approach defeats "context rot," granting AI unprecedented long-term memory for complex, coherent conversations.

November 30, 2025

A novel memory architecture for artificial intelligence agents, named General Agentic Memory (GAM), is showing remarkable success in overcoming a critical limitation in large language models known as "context rot." Developed by a Chinese research team, GAM employs a unique approach that preserves the full history of interactions, enabling AI agents to maintain coherence and accuracy over much longer conversations than previously possible.[1] This new framework has demonstrated significant performance improvements over existing methods, including the widely used Retrieval-Augmented Generation (RAG) technique, particularly in complex, memory-intensive benchmarks.

The core challenge GAM addresses is the degradation of an AI's performance as conversations or tasks become longer and more complex. This phenomenon, dubbed "context rot," occurs because current AI models have a finite "context window," a limit on the amount of information they can actively consider at any one time. As an interaction grows, details mentioned earlier are pushed out of this window, leading the AI to forget key facts, misinterpret user intent, or lose the thread of the conversation.[2] Traditional methods have tried to solve this by creating summaries of past interactions, but this "ahead-of-time" compression inevitably leads to information loss. Details that seem unimportant at the time of summarization might become crucial later, but by then they have been permanently condensed or discarded. Together, these limitations point to a fundamental issue: neither a larger context window nor an up-front summary amounts to reliable long-term memory.
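
The effect of a finite window can be illustrated with a small sketch. It is not taken from the GAM work: the function name `fit_to_window`, the sample turns, and the use of whitespace-separated words as a rough stand-in for tokens are all assumptions made for illustration.

```python
def fit_to_window(turns: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent turns whose combined size fits the window."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):        # walk from newest to oldest
        cost = len(turn.split())        # word count as a crude token estimate
        if used + cost > max_tokens:
            break                       # this turn and all older ones are dropped
        kept.append(turn)
        used += cost
    return list(reversed(kept))         # restore chronological order


turns = [
    "My API key rotates every 30 days.",       # early detail, needed later
    "Let's talk about the dashboard layout.",
    "The sidebar should collapse on mobile.",
    "Also add a dark mode toggle.",
]

window = fit_to_window(turns, max_tokens=18)
print(window)  # the first turn no longer fits and is silently lost
```

With an 18-word budget, the three most recent turns fill the window exactly, so the remark about key rotation is the one that disappears, even though it may be the detail a later question depends on.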

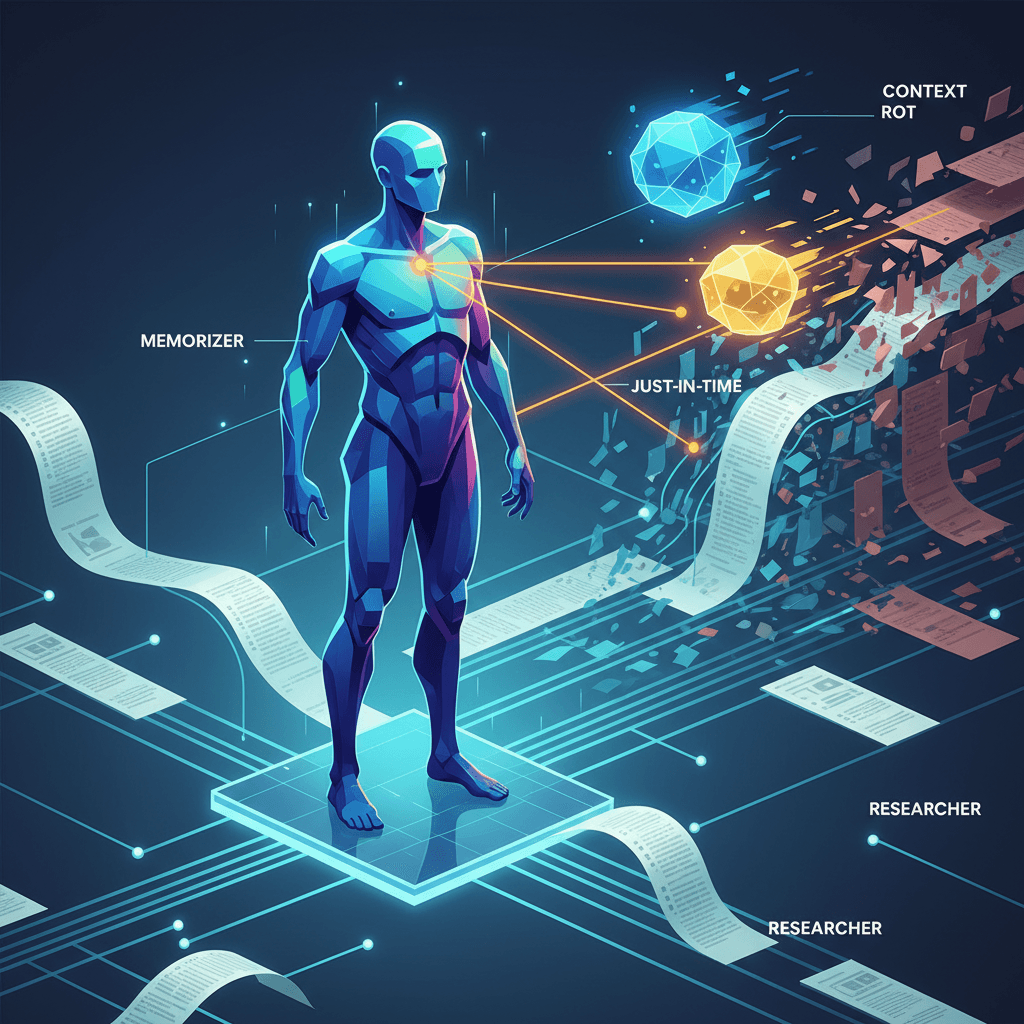

General Agentic Memory introduces a paradigm shift by adopting a "just-in-time" compilation principle. Instead of compressing information in advance, GAM preserves the complete, unaltered history of an interaction in a database called a "page-store."[3][4][5] This is managed by a dual-agent architecture consisting of a "Memorizer" and a "Researcher."[3][6][7][5] The Memorizer works in the background, creating a lightweight summary or "map" of the conversation while archiving the full, high-fidelity history.[3][5] The second agent, the Researcher, activates only when the AI needs to recall specific information.[3][5] Guided by the Memorizer's map, the Researcher performs a "deep research" loop—iteratively planning, searching, and reflecting—to retrieve the exact details needed from the complete history.[3][5] This allows the system to construct a precise, optimized context at the exact moment it's required, avoiding the pitfalls of premature summarization.
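
The division of labor described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the researchers' implementation: the class names mirror the article's terms, but every method, the keyword matching that stands in for language-model planning, and the "first few words" note that stands in for a real summary are hypothetical.

```python
import re
from dataclasses import dataclass, field


def keywords(text: str) -> set[str]:
    """Lowercased words of four or more characters; a crude stand-in for planning."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) >= 4}


@dataclass
class PageStore:
    """Append-only archive holding every page of the interaction in full."""
    pages: list[str] = field(default_factory=list)

    def add(self, text: str) -> int:
        self.pages.append(text)
        return len(self.pages) - 1      # page id


@dataclass
class Memorizer:
    """Archives each page unaltered and keeps a lightweight note per page."""
    store: PageStore
    memo: dict[int, str] = field(default_factory=dict)

    def memorize(self, text: str, note_words: int = 5) -> None:
        page_id = self.store.add(text)                      # full text is preserved
        self.memo[page_id] = " ".join(text.split()[:note_words])  # toy "summary"


@dataclass
class Researcher:
    """Runs a plan, search, reflect loop when information has to be recalled."""
    store: PageStore
    memo: dict[int, str]

    def research(self, question: str, max_rounds: int = 3) -> list[str]:
        pending = keywords(question)    # plan: terms still to be resolved
        found: dict[int, str] = {}
        for round_no in range(max_rounds):
            if not pending:
                break
            # search: round 0 consults only the notes, later rounds read full pages
            for page_id, note in self.memo.items():
                haystack = note if round_no == 0 else self.store.pages[page_id]
                if pending & keywords(haystack):
                    found[page_id] = self.store.pages[page_id]
            # reflect: drop the terms that the retrieved pages now cover
            covered = set().union(*(keywords(p) for p in found.values()))
            pending -= covered
        return [found[i] for i in sorted(found)]


store = PageStore()
memorizer = Memorizer(store)
memorizer.memorize("User: my deployment target is Kubernetes, and the staging cluster is named orchid.")
memorizer.memorize("User: please remind me to renew the domain next Friday.")
memorizer.memorize("Assistant: noted, I will remind you about the domain renewal.")

researcher = Researcher(store, memorizer.memo)
context = researcher.research("What is the staging cluster named?")
print(context)
```

In this toy run the short notes miss the cluster name, so the first round finds nothing; the second round falls back to the archived pages and recovers the exact sentence. That is the point of keeping the full history: a detail the summary dropped is still retrievable when a question finally asks for it.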

The performance of GAM in rigorous testing has underscored the effectiveness of its design. In benchmarks designed to test long-term memory and complex reasoning, GAM has substantially outperformed existing systems. Notably, on multi-hop reasoning tasks such as those in the RULER benchmark, which require an agent to track how information changes over many steps, GAM achieved over 90% accuracy where most baseline models failed completely.[3][8] On the HotpotQA benchmark, GAM's performance remained high and stable at context lengths of up to 448,000 tokens, directly demonstrating its resilience against context rot.[3][8] This stands in contrast to standard RAG systems, which can struggle as the volume of retrieved information grows. RAG operates more like a read-only system, retrieving chunks of information that can be fragmented or lack broader context, and it doesn't inherently learn from interactions.[9] GAM's agentic, read-write approach, however, allows for a more dynamic retrieval process, one closer to the way a person searches and synthesizes a deep well of memory.[9]

The implications of this new architecture for the AI industry are significant. As AI agents are deployed in more complex, long-running tasks—from sophisticated customer support to autonomous scientific research—the ability to maintain a reliable and complete memory becomes paramount. By mitigating context rot, GAM opens the door to more capable and dependable AI assistants that can handle intricate, multi-step workflows without losing track of crucial information. This development suggests a future where AI memory systems move from a static, pre-compilation model to a more dynamic, search-centric one. The success of General Agentic Memory signals a pivotal evolution in how we design AI, shifting the focus from simply storing information to knowing precisely how to find and use it when it matters most.