Enterprises Abandon Data Perfection to Master the Practical Last Mile of Agentic AI

Forget data perfection and drive ROI by operationalizing agentic AI within the messy, real-world systems of the modern enterprise.

May 12, 2026

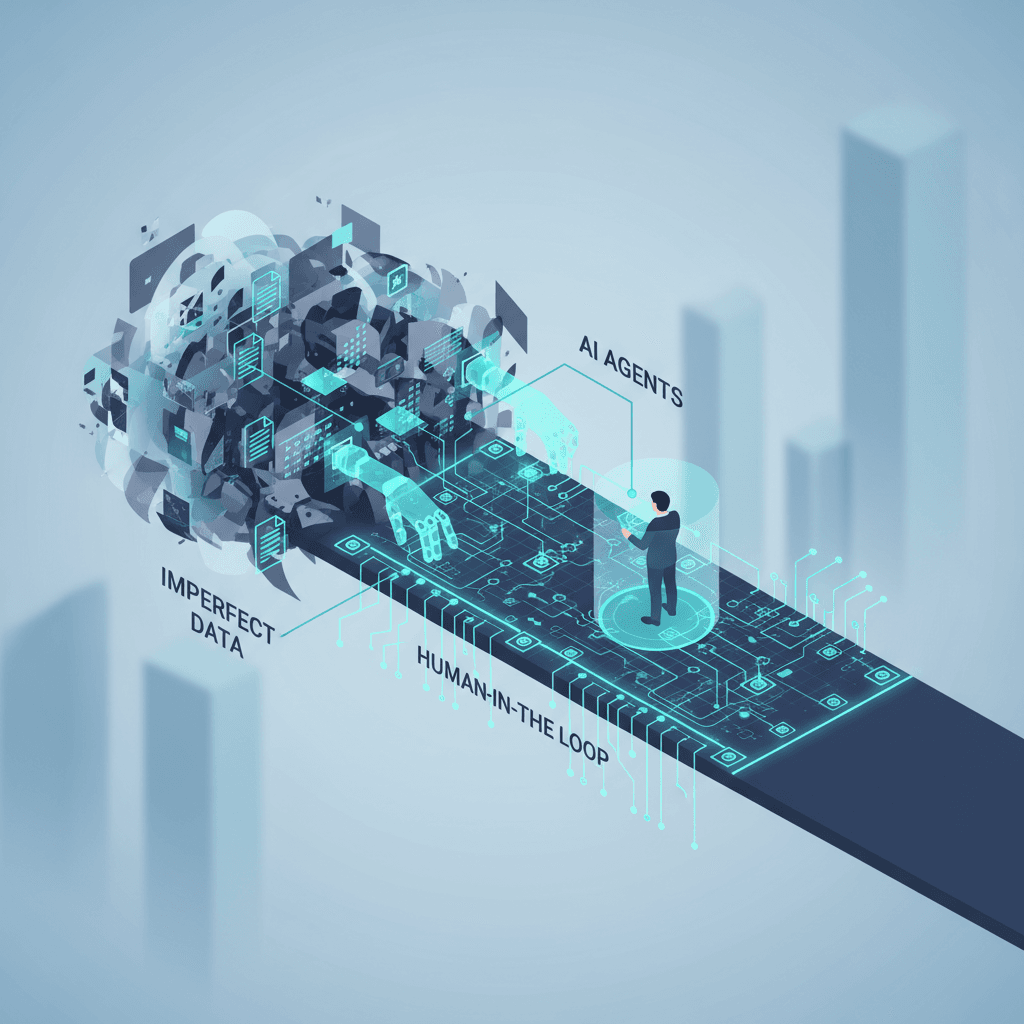

The narrative surrounding artificial intelligence has long been dominated by a perfectionist philosophy: the idea that organizations must undergo years of rigorous data scrubbing and massive infrastructure overhauls before they can realize the value of generative technologies.[1] Joe Rose, president of the strategic technology provider JBS Dev, is challenging this consensus. He argues that the industry is approaching a critical juncture where the focus must shift from the raw capability of massive models to the practical last mile of implementation.[1] According to Rose, several myths are stalling enterprise progress, chief among them the belief that data must be pristine to be useful.[1] In his view, as the market moves beyond initial experimentation, the real value of artificial intelligence lies not in chasing the next model breakthrough but in operationalizing existing capabilities within the messy, imperfect environments of the modern enterprise, while keeping a sharp focus on cost sustainability.

One of the most persistent barriers to AI adoption is the misconception that data quality must be flawless before any meaningful workload can begin. For years, vendors and consultants have presented multi-year data transformation programs and massive, unified data lakes as necessary precursors to innovation. Rose contends that this view overlooks a core strength of large language models: they are remarkably resilient to noise and "half-written" prompts.[1] The current generation of tooling is better equipped than ever to handle poor-quality data, often performing sophisticated extraction and reasoning on documents that would stymie traditional analytics.[1] In practice, companies can begin extracting value immediately by layering AI over their existing systems rather than waiting for an idealized data architecture.[2] In the medical sector, for example, generative systems have handled billing reconciliation tasks in which the records were a chaotic mix of PDFs and images with overlapping or misplaced fields, such as doctor names appearing in patient records.[1] Using simple prompts and advanced text extraction, these models can scope and clean the data in real time, enabling agentic approaches that compare each record against complex insurance contracts to verify billing accuracy.[1]
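The reconciliation step described above can be sketched in Python. This is a minimal illustration under stated assumptions, not JBS Dev's implementation: the model-driven extraction is out of scope, so a plain dictionary stands in for what an LLM might return from a scanned PDF, and the field aliases, the "Dr." heuristic, the procedure codes, and the `CONTRACT_RATES` table are all hypothetical. It shows how loosely labeled, partly misplaced fields can be mapped onto canonical keys and then checked against contract terms, with anything unmatched routed to a person.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical contract terms: allowed amount per procedure code (made-up values).
CONTRACT_RATES = {"99213": 92.00, "80053": 14.50}

# Hypothetical loose field names an extraction step might emit for one logical field.
FIELD_ALIASES = {
    "patient": {"patient", "patient name", "pt name"},
    "provider": {"provider", "doctor", "physician"},
    "code": {"code", "cpt", "procedure code"},
    "billed": {"billed", "amount", "charge"},
}


@dataclass
class Finding:
    code: str
    billed: float
    allowed: Optional[float]
    status: str  # "ok", "mismatch", or "needs_review"


def normalize(raw: dict) -> dict:
    """Map inconsistently named fields onto canonical keys (first match wins)."""
    out = {}
    for key, value in raw.items():
        k = key.strip().lower().replace("_", " ")
        for canonical, aliases in FIELD_ALIASES.items():
            if k in aliases and canonical not in out:
                out[canonical] = str(value).strip()
    # Assumed heuristic for a misplaced field: a "Dr." value in the patient slot
    # is moved to provider when no provider was extracted.
    if out.get("patient", "").lower().startswith("dr.") and "provider" not in out:
        out["provider"] = out.pop("patient")
    return out


def reconcile(raw: dict, tolerance: float = 0.01) -> Finding:
    """Compare one extracted billing line against the contract rate table."""
    rec = normalize(raw)
    code = rec.get("code", "")
    billed = float((rec.get("billed") or "0").replace("$", "").replace(",", ""))
    allowed = CONTRACT_RATES.get(code)
    if allowed is None:
        status = "needs_review"  # no contract term found: route to a person
    elif abs(billed - allowed) > tolerance:
        status = "mismatch"
    else:
        status = "ok"
    return Finding(code, billed, allowed, status)


# Hypothetical stand-in for a model's extraction from a scanned bill.
print(reconcile({"CPT": "99213", "Charge": "$120.00", "Patient Name": "Dr. Lee"}))
# → Finding(code='99213', billed=120.0, allowed=92.0, status='mismatch')
```

Keeping the contract comparison deterministic, and leaving only the messy extraction to the model, is one way to keep the non-deterministic part of such a pipeline easy to audit; a real system would load rates from the actual contracts rather than a hard-coded table.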

The shift toward what Rose calls the last mile of AI involves a transition from passive chatbots to active, agentic systems that can carry out multi-step workflows. Many business leaders are confused by the jargon surrounding agentic AI, but the reality is simpler than the marketing suggests. In its most practical form, agentic AI is a large language model that has been empowered to generate API calls instead of just text.[3] This lets the system evaluate data, make a decision, and then trigger a specific action within a company's existing software ecosystem, such as updating a CRM record or modifying a logistics schedule. Such operationalization is a significant departure from the traditional "build it and forget it" model of software development.[1] Because AI outputs are non-deterministic, these systems require continuous oversight and a human in the loop to handle unpredictable results.[1] Success in this last mile depends heavily on an organization’s underlying architecture: those with clean, API-driven systems will find it much easier to deploy agents that navigate between platforms and automate complex, high-friction tasks without a total system rebuild.[3]
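The idea that an agent is "an LLM that generates API calls" can be made concrete with a small dispatcher. This is a hypothetical sketch: the tool names (`update_crm_contact`, `reschedule_shipment`), the JSON shape `{"tool": ..., "arguments": ...}`, and the approval rules are assumptions rather than any vendor's API, and no model is actually called; its output is passed in as a string. The dispatcher validates the proposed call against a registry of allowed tools, runs low-risk actions directly, and queues higher-risk ones for human review.

```python
import json
from typing import Any, Callable, Dict, List, Set, Tuple


# Hypothetical tools standing in for calls into existing systems (CRM, logistics).
def update_crm_contact(contact_id: str, status: str) -> str:
    return f"contact {contact_id} set to {status}"


def reschedule_shipment(shipment_id: str, new_date: str) -> str:
    return f"shipment {shipment_id} moved to {new_date}"


# Registry: tool name -> (function, required argument names, needs human approval?)
TOOLS: Dict[str, Tuple[Callable[..., str], Set[str], bool]] = {
    "update_crm_contact": (update_crm_contact, {"contact_id", "status"}, False),
    "reschedule_shipment": (reschedule_shipment, {"shipment_id", "new_date"}, True),
}

# Calls waiting for a person to approve or reject them.
review_queue: List[Dict[str, Any]] = []


def dispatch(model_output: str) -> str:
    """Validate a model-proposed tool call, then run it, reject it, or queue it for review."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return "rejected: output was not valid JSON"
    if not isinstance(call, dict):
        return "rejected: expected a JSON object"
    name = call.get("tool")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return f"rejected: unknown tool {name!r}"
    func, required, needs_approval = TOOLS[name]
    if not isinstance(args, dict) or set(args) != required:
        return f"rejected: {name} expects arguments {sorted(required)}"
    if needs_approval:
        review_queue.append(call)  # a human decides before anything runs
        return f"queued for human review: {name}"
    return func(**args)


print(dispatch('{"tool": "update_crm_contact", '
               '"arguments": {"contact_id": "C-17", "status": "renewed"}}'))
# → contact C-17 set to renewed
```

Putting the approval flag in the registry, rather than asking the model whether an action is risky, keeps the human-in-the-loop check independent of non-deterministic output. Malformed text, unknown tools, and missing or extra arguments are all rejected before anything touches a live system.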

As the industry moves forward, the conversation is expected to pivot away from the radical leaps in model capability that defined the last few years and toward a more grounded focus on cost sustainability and portability.[1] The current pace of data center construction, and the enormous capital expenditures required to train and run frontier models, cannot continue indefinitely.[1] Rose predicts that the next major breakthrough will not be a model ten times larger, but the ability to run sophisticated workloads locally on a laptop or a mobile phone. There is also growing recognition that the body of data available for training, essentially the entire public internet, has largely been exhausted. Without a massive new influx of unique data, the era of exponential gains in model intelligence through scale alone may be reaching a plateau. The challenge for the next several years will therefore be optimization: achieving high-level reasoning with smaller, more efficient models that reduce the "GPU tax" and make AI economically viable for a wider range of everyday business applications.

This economic reality has significant implications for how companies invest in AI, particularly in the choice between buying software-as-a-service (SaaS) and building internal solutions. While many organizations are tempted to wait for their existing SaaS providers to roll out "bolt-on" AI features, Rose suggests a more controversial path: using existing cloud infrastructure to build custom agentic workloads.[1] Most enterprises already have a presence in a major cloud environment such as AWS, Azure, or Google Cloud, and these platforms now offer the tools needed to implement AI agents without additional software licenses or extensive retraining. By building on their own data and infrastructure, companies can retain greater control over their intellectual property and avoid the high costs often associated with specialized, AI-ready SaaS platforms. This approach supports an incremental strategy in which automation grows from a modest 20 percent of a workflow to 80 percent or more over time, proving value through specific, measurable outcomes rather than speculative, large-scale investments.[1]

Ultimately, successfully integrating AI into the enterprise requires a pragmatic shift in perspective that values agility and ROI over technical perfection. The myth of perfect data has paralyzed too many organizations, keeping them from the very tools that could help them organize and understand their information. By focusing on the last mile (the actual integration of AI into daily operations and its movement toward edge devices), businesses can stop chasing the hype of each new model release and start building sustainable, high-impact solutions. The future of the AI industry will be defined not by the size of the next data center but by how well organizations make these powerful tools work within the flawed, real-world systems they use every day. As the initial excitement around generative AI matures into a pursuit of long-term operational excellence, the leaders will be those who prioritize architectural integrity, cost-conscious implementation, and the practical application of intelligence where it is needed most.