Adobe debuts Firefly Quick Cut to transform raw footage into rough edits via text prompts

Adobe's new tool uses generative AI to turn raw footage into professional rough cuts, shifting the video editor's role toward creative direction.

February 25, 2026

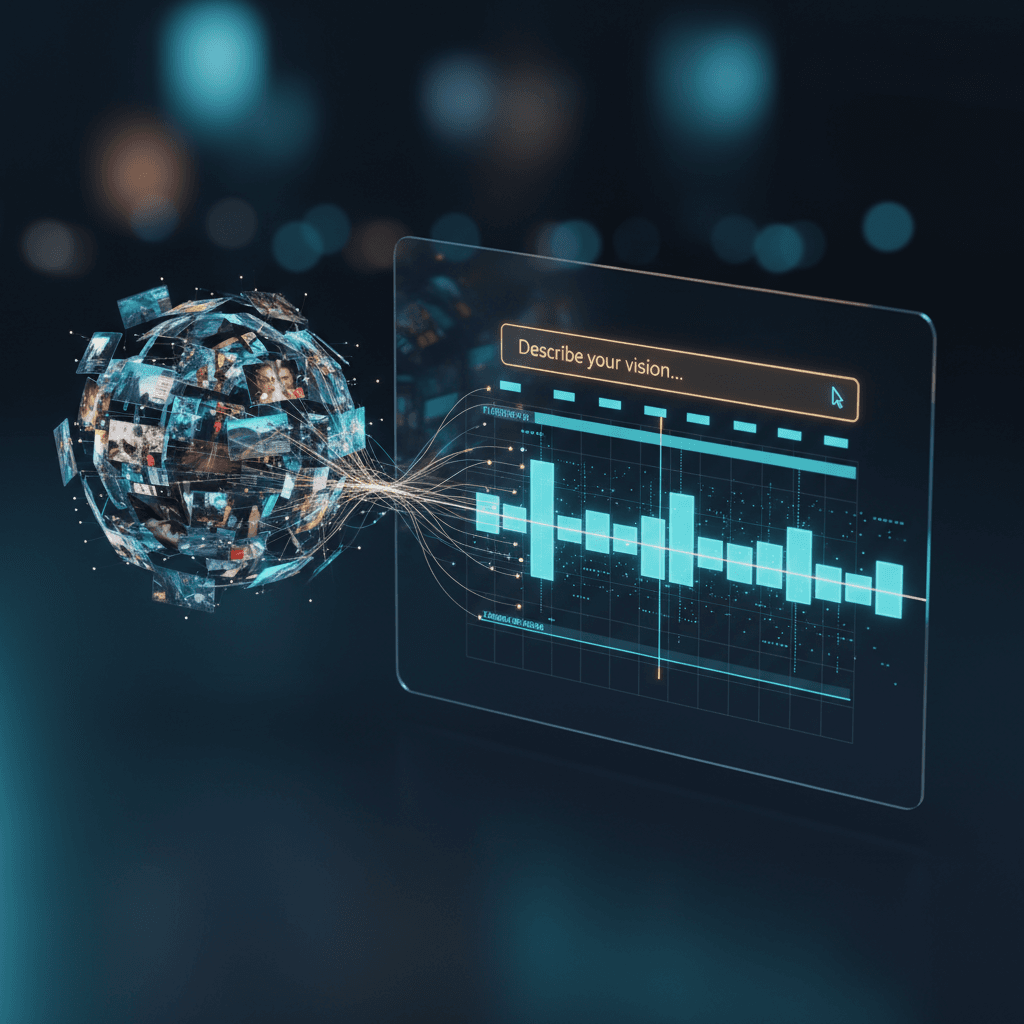

The transition from raw camera footage to a structured narrative has long been regarded as the most taxing phase of post-production. For decades, video editors have faced "empty timeline" syndrome: hours of manual labor spent scrubbing through b-roll, synchronizing audio, and identifying the elusive "best takes." Adobe aims to close the gap between raw footage and a first assembly with its new Firefly-powered Quick Cut tool.[1][2] By leveraging generative artificial intelligence, the tool allows creators to transform a folder of disorganized raw assets into a coherent rough edit through simple natural language prompts.[3][1][4][2] This development marks a significant shift in the creative industry, moving the editor's primary role from manually assembling clips to high-level creative direction.

At its core, Quick Cut is built upon the Firefly Video Model, an architecture designed specifically to understand not just the pixels of a frame, but the narrative intent behind a sequence of images. Unlike previous automated editing tools that relied on simple metadata or silence detection, Quick Cut utilizes deep visual and auditory analysis to categorize footage. When a user uploads their assets, the AI performs a comprehensive scan, identifying key variables such as focus, lighting quality, emotional resonance in facial expressions, and the clarity of spoken dialogue. This "scene intelligence" allows the system to differentiate between a usable take and a technical error, effectively mimicking the first pass of a human assistant editor.
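To make the idea of separating usable takes from technical errors concrete, here is a minimal sketch in Python. It is not Adobe's implementation: the `TakeAnalysis` fields, the `WEIGHTS` values, and the `MIN_FOCUS` threshold are all hypothetical, chosen only to illustrate how per-take quality signals like those described above could be combined into a ranking.

```python
from dataclasses import dataclass


@dataclass
class TakeAnalysis:
    """Hypothetical per-take quality signals, each from 0.0 (poor) to 1.0 (good)."""
    name: str
    focus: float
    lighting: float
    expression: float
    dialogue: float


# Hypothetical weights; a real system would learn or tune these.
WEIGHTS = {"focus": 0.3, "lighting": 0.2, "expression": 0.2, "dialogue": 0.3}
# Hypothetical cutoff: takes below this focus score count as technical errors.
MIN_FOCUS = 0.4


def score(take: TakeAnalysis) -> float:
    """Weighted sum of the take's quality signals."""
    return sum(getattr(take, key) * weight for key, weight in WEIGHTS.items())


def rank_usable_takes(takes: list[TakeAnalysis]) -> list[TakeAnalysis]:
    """Drop out-of-focus takes, then sort the rest from best to worst."""
    usable = [t for t in takes if t.focus >= MIN_FOCUS]
    return sorted(usable, key=score, reverse=True)


takes = [
    TakeAnalysis("take_a", focus=0.9, lighting=0.8, expression=0.6, dialogue=0.9),
    TakeAnalysis("take_b", focus=0.2, lighting=0.9, expression=0.9, dialogue=0.9),
    TakeAnalysis("take_c", focus=0.7, lighting=0.6, expression=0.9, dialogue=0.5),
]
ranked = rank_usable_takes(takes)
print([t.name for t in ranked])
```

In this sketch `take_b` is discarded despite strong scores elsewhere, because a hard focus threshold treats blur as a disqualifying error rather than one factor among many.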

The utility of the tool is most evident in its natural language interface, which facilitates a "text-to-edit" workflow. Instead of dragging and dropping clips, a creator can input a prompt such as "create a fast-paced sixty-second travel vlog highlighting the beach scenes and sunset, with quick cuts that match an upbeat rhythm." The AI then sifts through the provided footage, identifies the relevant visual themes, and assembles a multi-track timeline. This process includes not only the primary "A-roll" but also the intelligent placement of b-roll. For instance, if the AI detects a narrator speaking about a specific product, it can automatically search the uploaded b-roll for shots of that product and overlay them at the appropriate timecodes to maintain visual interest.
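The b-roll placement described above can be sketched as a simple matching problem. The following Python example is an illustration under stated assumptions, not a description of how Quick Cut works internally: it assumes the A-roll has already been transcribed into timed segments and that each b-roll clip carries a set of descriptive tags. The names `TranscriptSegment`, `BrollClip`, `Overlay`, and `place_broll` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TranscriptSegment:
    start: float  # seconds into the A-roll
    end: float
    text: str


@dataclass
class BrollClip:
    name: str
    tags: set[str]   # hypothetical labels, e.g. from a visual tagger
    duration: float  # seconds


@dataclass
class Overlay:
    clip: str
    start: float
    end: float


def place_broll(segments: list[TranscriptSegment],
                clips: list[BrollClip]) -> list[Overlay]:
    """For each spoken segment, overlay the b-roll clip whose tags best match it."""
    overlays = []
    for seg in segments:
        # Normalize spoken words so "backpack!" matches the tag "backpack".
        words = {w.strip(".,!?").lower() for w in seg.text.split()}
        best = max(clips, key=lambda c: len(c.tags & words), default=None)
        if best is None or not (best.tags & words):
            continue  # nothing relevant: leave the A-roll uncovered
        # Never let the overlay run past the segment or the clip's own length.
        end = min(seg.end, seg.start + best.duration)
        overlays.append(Overlay(best.name, seg.start, end))
    return overlays


segments = [
    TranscriptSegment(0.0, 4.0, "Welcome to the channel."),
    TranscriptSegment(4.0, 10.0, "This backpack survived the whole trip!"),
]
clips = [
    BrollClip("beach.mp4", {"beach", "sunset"}, 8.0),
    BrollClip("backpack_closeup.mp4", {"backpack", "product"}, 3.5),
]
overlays = place_broll(segments, clips)
print(overlays)
```

Keyword overlap stands in here for whatever semantic matching a production system would use; the point is only that the output is a list of clip names with start and end timecodes, which is the kind of data a multi-track timeline needs.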

One of the most critical aspects of this technology is that it does not produce a "locked" or flattened video file. Instead, the output is a fully editable, non-destructive timeline compatible with professional suites like Premiere Pro. Every cut, transition, and trim point remains adjustable by the human operator. This design philosophy underscores a "human-in-the-loop" approach, where the AI handles the foundational assembly while the editor retains absolute control over the final artistic polish. The system can even suggest generative micro-transitions, using the Firefly Video Model to bridge awkward jump cuts by synthesizing a few frames of movement, thereby smoothing over gaps in the original footage without requiring the editor to resort to stock templates.[3]

The implications for the broader AI and creative industries are profound. By automating the rough cut, Adobe is effectively lowering the barrier to entry for high-quality video production. Marketing teams, social media influencers, and small-scale content creators who previously lacked the time or technical expertise to manage complex edits can now produce polished drafts in minutes. However, this democratization comes with a reshuffling of professional expectations. For junior editors, whose roles have historically involved the very "grunt work" Quick Cut now automates, the focus must shift toward storytelling strategy and narrative pacing. The industry is moving toward a model where the speed of production is no longer limited by manual dexterity, but by the clarity of the creator’s vision and the precision of their prompts.

From a technical standpoint, the integration of multimodal inputs further distinguishes Quick Cut from standalone AI video generators. While competing models like OpenAI’s Sora or Runway’s Gen-3 focus on generating footage from scratch, Adobe’s tool is designed to work with real-world assets.[1] It can ingest scripts or shot lists to guide its assembly logic, ensuring that the resulting edit follows a predetermined narrative arc.[5] This makes it a practical tool for enterprise-level production, where adherence to a specific script is mandatory. Furthermore, because Firefly is trained on commercially safe data and utilizes Content Credentials, professional users can maintain a clear chain of provenance for their work, ensuring that the AI-assisted content meets the legal and ethical standards required for commercial distribution.

The competitive landscape for AI video tools is currently characterized by a race between pure generation and creative utility.[1] While many platforms are focused on the "wow factor" of creating photorealistic video from a single sentence, Adobe has positioned Quick Cut as a pragmatic solution for the existing professional ecosystem. By focusing on the assembly of real footage rather than just the generation of new pixels, the company addresses a specific bottleneck in the professional workflow.[1] This strategy aligns with the broader industry trend of "agentic AI," where tools act as proactive collaborators capable of making complex decisions based on user intent.

As video content continues to dominate digital communication, the ability to rapidly iterate on different narrative directions becomes a competitive necessity. Quick Cut enables what Adobe describes as "experimentation at the speed of thought." An editor could, in theory, generate five different rough cuts of the same project (for example, one dramatic, one fast-paced, and one educational), each based on a different text prompt, all within the span of a few minutes. This allows for a level of creative exploration that time constraints previously made impractical. The tool essentially provides a "starting line" that is already halfway to the finish, allowing creators to spend their energy on the nuanced decisions that define a masterpiece rather than the mechanical tasks that define a chore.

Ultimately, the launch of the Quick Cut tool represents a milestone in the evolution of digital storytelling. It signals the end of the era where video editing was defined by the physical management of media and the beginning of an era defined by narrative orchestration. While the technology is still in its early stages, the foundational shift is clear: the most powerful tool in the editor’s kit is no longer the blade or the mouse, but the language used to describe the story. As these models continue to refine their understanding of cinematic language and emotional pacing, the gap between a rough cut and a final masterpiece will continue to shrink, forever changing how the world constructs and consumes visual narratives.