Meta’s Pixio breaks scaling law: Simpler vision model outperforms huge rivals.

Meta resurrects simple pixel-filling training to prove smaller models can achieve superior geometric scene understanding.

December 27, 2025

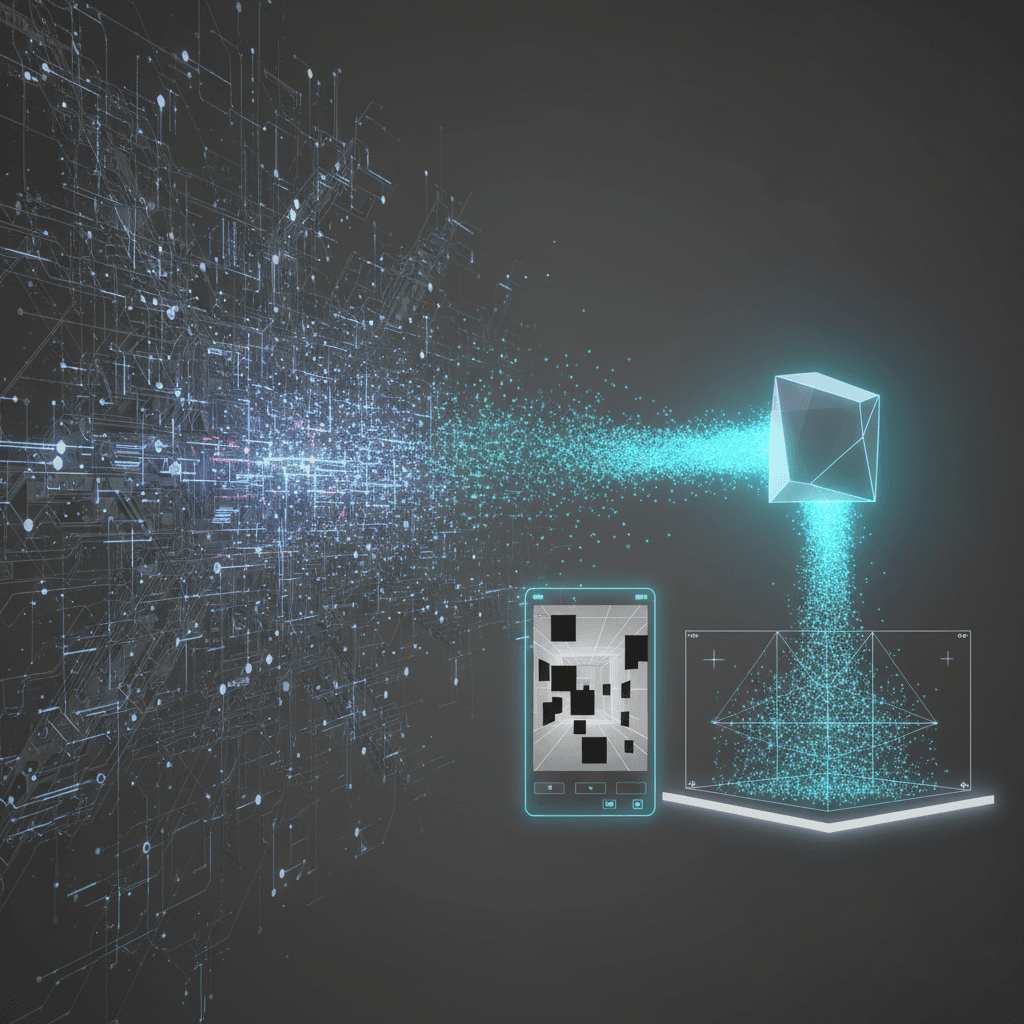

Meta AI has unveiled a new image model, Pixio, that challenges the industry’s prevailing assumption that state-of-the-art computer vision requires ever-larger and more complex neural networks. By reviving and refining a once-dismissed training method, simple pixel reconstruction, Meta’s researchers have shown that a smaller, more parameter-efficient model can outperform competitors with significantly larger architectures and more elaborate training objectives, particularly in monocular depth estimation and feed-forward 3D reconstruction. The result runs counter to the current trend of hyperscaling: it suggests that a simpler, well-chosen training objective can yield deeper, more general scene understanding, and models that are more practical to deploy in the real world.

Pixio builds on the Masked Autoencoder (MAE), a pre-training technique that had been pushed into the background by contrastive and distillation-based methods such as Meta’s own DINOv2 and DINOv3. MAE hides large, random patches of an input image and trains the model to predict and restore the missing pixels. Although conceptually straightforward, this "pixel filling" task was thought to teach only low-level details such as texture and color, leaving the model unable to learn the high-level semantic and geometric reasoning that complex visual understanding requires. The Meta AI team showed this assumption to be false with a few minimal but impactful updates to the MAE framework. The first was a deeper decoder, which lets the reconstruction stage draw on more detailed information and build a richer, denser representation of the image. The team also used larger masking granularity and more class tokens to better capture spatial structures and reflections[1]. Finally, the training data was scaled up from the standard ImageNet-1K to the web-scale MetaCLIP-2B dataset, a significant factor in the model’s ability to generalize[1]. Together, this seemingly "outdated" technique and these modest architectural and data-scaling changes account for the model’s unexpected strength in geometric tasks.
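To make the masking idea concrete, here is a minimal NumPy sketch of MAE-style masking and a reconstruction loss computed only on the hidden patches. It is an illustration under stated assumptions, not Meta's implementation: the function names, the 4×4 block size, the 75% mask ratio, and the reading of "larger masking granularity" as masking contiguous blocks of patches are all assumptions made for this example.

```python
import numpy as np

def patchify(image, patch):
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    h, w, c = image.shape
    gh, gw = h // patch, w // patch
    x = image[: gh * patch, : gw * patch].reshape(gh, patch, gw, patch, c)
    # Result has one row per patch, in row-major grid order.
    return x.transpose(0, 2, 1, 3, 4).reshape(gh * gw, patch * patch * c)

def block_mask(grid_h, grid_w, block, mask_ratio, rng):
    """Boolean (grid_h, grid_w) mask; True marks a hidden patch.

    Patches are hidden in block x block groups (an assumed way to get
    coarser masking granularity); block=1 gives per-patch random masking.
    """
    if grid_h % block or grid_w % block:
        raise ValueError("grid size must be divisible by block size")
    bh, bw = grid_h // block, grid_w // block
    n_blocks = bh * bw
    n_masked = int(round(mask_ratio * n_blocks))
    flat = np.zeros(n_blocks, dtype=bool)
    flat[rng.permutation(n_blocks)[:n_masked]] = True
    coarse = flat.reshape(bh, bw)
    # Expand every coarse cell into a block x block group of patches.
    return np.repeat(np.repeat(coarse, block, axis=0), block, axis=1)

def masked_pixel_loss(target, pred, mask):
    """Mean squared error over hidden patches only.

    target, pred: (N, D) patch arrays; mask: (N,) boolean array.
    """
    per_patch = ((pred - target) ** 2).mean(axis=1)
    return float(per_patch[mask].mean())

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image = rng.random((256, 256, 3))           # stand-in for a real image
    patches = patchify(image, patch=16)         # 16 x 16 grid of patches
    mask = block_mask(16, 16, block=4, mask_ratio=0.75, rng=rng)
    prediction = np.zeros_like(patches)         # stand-in for decoder output
    print(mask.mean(), masked_pixel_loss(patches, prediction, mask.ravel()))
```

In a real training loop the encoder would see only the visible patches and a decoder would produce `prediction`; the zero array here simply stands in for that output.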

Pixio’s advantage is clearest in direct comparisons with more complex state-of-the-art models. The Pixio-H/16 variant, with 631 million parameters, was compared against the larger DINOv3 (841 million parameters) and DINOv2 (1,137 million parameters) on dense prediction tasks[1], and the smaller model came out ahead. In monocular depth estimation, where the model must infer the depth of every pixel from a single image, Pixio-H/16 achieved a delta-1 score of 95.5 on the NYUv2 benchmark using the DPT head, compared with 93.2 for DINOv3 and 90.1 for DINOv2[1]. A similar gap appeared in feed-forward 3D reconstruction benchmarks such as ScanNet++ v2, where Pixio consistently surpassed its larger counterparts in point reconstruction accuracy and relative depth error[1]. These gains are not marginal; they represent a meaningful improvement in the model’s ability to interpret and map the three-dimensional geometry of a scene[2]. Beyond the numbers, the model shows a striking qualitative ability to reason about complex visual phenomena. When asked to reconstruct a heavily masked image, for example, Pixio not only filled in textures but also captured the spatial arrangement of the entire scene, even predicting a mirrored person in a hidden window area. That is a form of geometric and semantic reasoning that goes well beyond simple pixel interpolation[2].
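For readers unfamiliar with the delta-1 score cited for NYUv2, it is the standard depth-accuracy measure: the fraction of pixels whose predicted depth is within a factor of 1.25 of the ground truth, usually reported as a percentage. The sketch below shows the computation in NumPy; the function name and the handling of invalid pixels are assumptions for illustration, and benchmark-specific details such as depth caps or scale alignment are not covered.

```python
import numpy as np

def delta1(pred, gt, threshold=1.25):
    """Fraction of valid pixels with max(pred/gt, gt/pred) < threshold."""
    pred = np.asarray(pred, dtype=np.float64)
    gt = np.asarray(gt, dtype=np.float64)
    # Ignore pixels without a positive ground-truth or predicted depth.
    valid = (gt > 0) & (pred > 0)
    if not valid.any():
        raise ValueError("no valid depth pixels to evaluate")
    ratio = np.maximum(pred[valid] / gt[valid], gt[valid] / pred[valid])
    return float((ratio < threshold).mean())

if __name__ == "__main__":
    gt = np.array([1.0, 2.0, 4.0, 0.0])    # last pixel has no ground truth
    pred = np.array([1.1, 2.0, 6.0, 5.0])
    # Ratios for the three valid pixels are 1.1, 1.0 and 1.5.
    print(100 * delta1(pred, gt))
```

On this scale, a score of 95.5 means that about 95.5% of evaluated pixels fall within the 1.25 ratio band.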

The implications of Pixio’s success extend across computer vision and the broader AI industry. For years, the default strategy for improving AI performance has been a race for scale, on the assumption that adding parameters is the primary path to more capable models. That race has produced models with billions of parameters that demand enormous computational resources for both training and inference, which limits their accessibility and their deployment in resource-constrained settings such as mobile devices or smaller enterprises[3][4]. Pixio directly challenges this "bigger is better" dogma, indicating that a refined self-supervised learning objective can drive performance more effectively than model size alone[5]. By showing that a model built on simpler principles and fewer parameters can set new performance standards, Meta’s research points to a shift in focus for future AI development, toward algorithmic efficiency and the choice of pre-training task. Rather than relying on training methods that learn to align image patches or distinguish between views, Pixio shows that a strong focus on *generating* the original image’s pixels forces the model to learn the underlying scene structure, object geometry, and spatial relationships with remarkable fidelity[2]. The finding is a strong endorsement of computationally efficient, pragmatic AI, and it promises to lower the barrier to developing and deploying high-performance vision models. It also gives the industry reason to re-evaluate its reliance on brute-force scaling in favor of smarter, more efficient training objectives and architectural designs.